WWDC17

Accessibility & Inclusion

9:31

Designing for a Global Audience

The worldwide reach of the App Store means that your app can be enjoyed by people from around the globe. Explore ways to make your app useful and appealing to as many people as possible. And pick up simple techniques for avoiding common issues when reaching a global audience.

Accessibility & Inclusion English, Simplified Chinese

13:57

Localization Best Practices on tvOS

Expand the reach of your apps by building them for a worldwide audience. Learn how to create localized tvOS apps that perform seamlessly regardless of country and language. Gain insights into such topics as handling server-side content, matching preferred languages, and localizing images and text...

Accessibility & Inclusion English, Simplified Chinese

App Services

34:37

Developing Wireless CarPlay Systems

Wireless CarPlay is perfect for any trip. Get in your car without taking your iPhone out of your bag or pocket, and start experiencing CarPlay effortlessly. Learn how to design your CarPlay system to connect wirelessly to iPhone. Understand hardware requirements, best practices for a great user...

App Services English, Simplified Chinese

8:37

Express Yourself!

iMessage apps help people easily create and share content, play games, and collaborate with friends without needing to leave the conversation. Explore how you can design iMessage apps and sticker packs that are perfectly suited for a deeply social context.

App Services English, Simplified Chinese

4:40

Extend Your App's Presence With Sharing

Help your users share the great content in your app by using the built-in iOS sharing functionality. Learn how the timing, placement, and context of sharing can drive engagement and help you acquire new users.

App Services English, Simplified Chinese

App Store Distribution & Marketing

35:48

iOS Configuration and APIs for Kiosk and Assessment Apps

iOS provides several techniques for keeping your app front and center. Whether you're building a kiosk, hospitality check-in, or educational assessment app, choosing the right app-lock technique is critical. From Guided Access to Automatic Assessment Configuration you'll learn which approach...

App Store Distribution & Marketing English, Simplified Chinese

Audio & Video

27:33

Enabling Your App for CarPlay

Understand how to enable your audio, messaging, VoIP calling or automaker app for CarPlay. Audio, messaging and VoIP calling apps use a consistent design that's optimized for use in the car. Automaker apps provide vehicle specific controls and displays to keep drivers connected without leaving...

Audio & Video English, Simplified Chinese

18:41

Error Handling Best Practices for HTTP Live Streaming

HTTP Live Streaming (HLS) reliably delivers media content across a variety of network and bandwidth conditions. However, there are many factors that can impact stream delivery, such as server or encoder failures, caching issues, or network dropouts. Learn the best-practice behaviors that your...

Audio & Video English, Simplified Chinese

9:07

HLS Authoring Update

HTTP Live Streaming (HLS) reliably delivers video to audiences around the world. Key to this reliability is a comprehensive set of tools to help you author, deliver, and validate the HLS streams you create. See what's new in these tools, learn the latest authoring recommendations, and how they...

Audio & Video English, Simplified Chinese

14:41

Now Playing and Remote Commands on tvOS

Consistent and intuitive control of media playback is key to many apps on tvOS, and proper use and configuration of MPNowPlayingInfoCenter and MPRemoteCommandCenter are critical to delivering a great user experience. Dive deeper into these frameworks and learn how to ensure a seamless experience...

Audio & Video English, Simplified Chinese

Business & Education

40:09

SceneKit in Swift Playgrounds

Discover tips and tricks gleaned by the Swift Playgrounds Content team for working more effectively with SceneKit on a visually rich app. Learn how to integrate animation, optimize rendering performance, design for accessibility, add visual polish, and understand strategies for creating an...

Business & Education English, Simplified Chinese

Design

10:41

60-Second Prototyping

Learn how to quickly build interactive prototypes! See how you can test new ideas and improve upon existing ones with minimal time investment, using tools you are already familiar with.

Design English, Simplified Chinese

10:31

App Icon Design

An app icon is the face of your app on the home screen. Learn key design principles for creating simple, unique, meaningful and beautiful app icons. Gain simple but effective techniques for testing your app icon for clarity and immediate recognizability.

Design English, Simplified Chinese

9:52

Communication Between Designers and Engineers

Good communication between designers and engineers is the key to building great products. Discover how production and specification techniques can improve communication, build trust, and help design and development teams work together to build better apps.

Design English, Simplified Chinese

13:53

Design Tips for Great Games

Great games transport us into another world where we can reign over a kingdom, fight epic battles, or become a pinball wizard. Learn onboarding and UI design best practices that will enable everyone to lose themselves in your game and have fun.

Design English, Simplified Chinese

11:22

Designing Glyphs

Glyphs are a powerful communication tool and a fundamental element of your app's design language. Learn about important considerations when conceptualizing glyphs and key design principles of crafting effective glyph sets for spaces inside and outside of your app.

Design English, Simplified Chinese

34:48

34:48

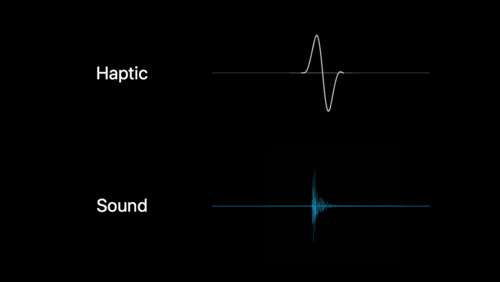

Designing Sound

Design is not just about what people see; it's also about what they hear. Learn how sound design can help you create a more immersive, usable and meaningful user experience in your app or game, and get a glimpse of how the sounds in Apple products are created.

Design English, Simplified Chinese

59:56

Essential Design Principles

Design principles are the key to understanding how design serves human needs for safety, meaning, achievement and beauty. Learn what these principles are and how they can help you design more welcoming, understandable, empowering and gratifying user experiences.

Design English, Simplified Chinese

10:06

Get Started with Display P3

Wide color displays allow your app to display richer, more vibrant and lifelike colors than ever before. Get a primer on color management, the Display P3 color space, and practical workflow techniques for producing more colorful images and icons.

Design English, Simplified Chinese

14:50

How to Pick a Custom Font

Choosing a custom font for your app can be a daunting task involving both functional and stylistic decisions. Gain a solid understanding of fundamental font design characteristics such as proportion and contrast. Learn how to apply this knowledge when deciding which font is right for your app.

Design English, Simplified Chinese

10:55

Love at First Launch

Engage people from the first moment they open your app, and keep them coming back for more. Learn tips on how to make a compelling first impression, methods for teaching new users about your app, and best practices when asking users for more information.

Design English, Simplified Chinese

10:09

Rich Notifications

Discover the keys to creating informative, useful and beautiful rich notifications in iOS. Get practical and detailed guidance about how to design short looks, long looks, and quick actions that will make your app's notifications something people look forward to receiving.

Design English, Simplified Chinese

8:41

Size Classes and Core Components

Designing for multiple screen sizes can seem complicated, difficult, and time-consuming. Learn how size classes, Dynamic Type, and UIKit elements help your app scale elegantly, save you time, and make your app look amazing on whatever device people are using.

Design English, Simplified Chinese

11:09

Writing Great Alerts

Learn how to create clear, informative, and helpful alerts that will make your app easy and enjoyable to use. Get valuable insights about the proper role for alerts, actionable guidance about writing effective alerts, and techniques for avoiding common pitfalls.

Design English, Simplified Chinese

Developer Tools

54:37

Modernizing Grand Central Dispatch Usage

macOS 10.13 and iOS 11 have reinvented how Grand Central Dispatch and the Darwin kernel collaborate, enabling your applications to run concurrent workloads more efficiently. Learn how to modernize your code to take advantage of these improvements and make optimal use of hardware resources.

Developer Tools English, Simplified Chinese

53:52

SceneKit: What's New

SceneKit is a fast and fully featured high-level 3D graphics framework that enables your apps and games to create immersive scenes and effects. See the latest advances in camera control and effects for simulating real camera optics including bokeh and motion blur. Learn about surface subdivision...

Developer Tools English, Simplified Chinese

Graphics & Games

32:17

Going Beyond 2D with SpriteKit

SpriteKit makes it easy to create high-performance, power-efficient 2D games and more. See how to take SpriteKit objects into Augmented Reality through seamless integration with ARKit. Learn about mixing 2D and 3D content and applying realistic transformations. Take direct control over SpriteKit...

Graphics & Games English, Simplified Chinese

29:05

High Efficiency Image File Format

Learn the essential details of the new High Efficiency Image File Format (HEIF) and discover which capabilities are used by Apple platforms. Gain deep insights into the container structure, the types of media and metadata it can handle, and the many other advantages that this new standard affords.

Graphics & Games English, Simplified Chinese

Photos & Camera

58:39

Capturing Depth in iPhone Photography

Portrait mode on iPhone 7 Plus showcases the power of depth in photography. In iOS 11, the depth data that drives this feature is now available to your apps. Learn how to use depth to open up new possibilities for creative imaging. Gain a broader understanding of high-level depth concepts and...

Photos & Camera English, Simplified Chinese

Privacy & Security

17:34

Filtering Unwanted Messages with Identity Lookup

Unwanted SMS and MMS messages are a persistent, frustrating nuisance. Identity Lookup is a new framework that allows you to participate in the process of filtering incoming messages. Get the details of how to identify and prevent these unsolicited messages. Understand the options for on-device...

Privacy & Security English, Simplified Chinese

SwiftUI & UI Frameworks

7:18

Deep Linking on tvOS

Design features such as the tvOS Top Shelf and Universal Links help customers immerse themselves in your content more quickly and easily. Learn how to create seamless app launch experiences when deep linking into the content of UIKit or TVMLKit apps.

SwiftUI & UI Frameworks English, Simplified Chinese

3:46

Extend Your App’s Presence with Deep Linking

Learn about deep linking and how universal links can be used to make your app's content and functionality accessible throughout iOS.

SwiftUI & UI Frameworks English, Simplified Chinese

8:47

What’s New in iOS 11

See how the updates to UIKit controls and text styles in iOS 11 can help you design an app with a stronger visual hierarchy, clearer navigation, and a simpler interface that's easier to use.

SwiftUI & UI Frameworks English, Simplified Chinese

System Services

11:15

Introducing Core NFC

Core NFC is an exciting new framework that enables you to read NFC tags in your apps on iPhone 7 and iPhone 7 Plus. Learn how to integrate Core NFC into your apps, key requirements for using this feature, and start thinking about the new kinds of apps that are enabled with NFC capabilities.

System Services English, Simplified Chinese
