Thank you for the response. So I took out that code here:

    func session(_ session: ARSession, didAdd anchors: [ARAnchor]) {
        print(anchors)
    }

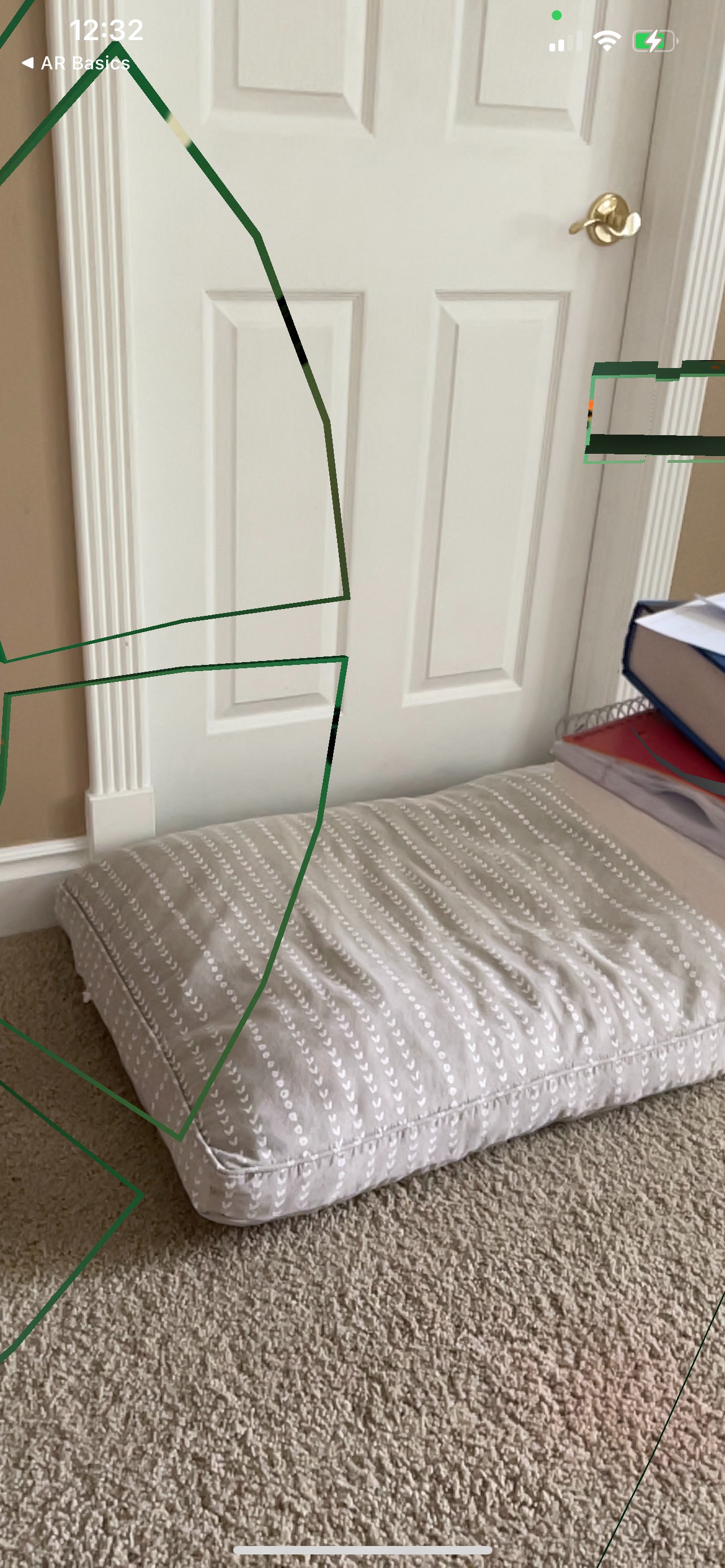

When it detects this dog bed as a plane, it renders my model, but I end up inside R2, haha. In the Apple ARKit example, in the tap handler, I applied the appropriate translation and scale, since my model is a lot bigger than the little cube that the example code renders. I was trying to do the same with the plane anchor in the function above. However, it does not seem to render on the plane quite right.

    @objc
    func handleTap(gestureRecognize: UITapGestureRecognizer) {
        // Create anchor using the camera's current position
        if let currentFrame = session.currentFrame {
            // Create a transform with a translation of 2 meters in front of the camera
            var translation = matrix_identity_float4x4
            var scale = matrix_identity_float4x4
            translation.columns.3.z = -2

            // Scale the model down to 10% on every axis
            scale.columns.0.x = 0.1
            scale.columns.1.y = 0.1
            scale.columns.2.z = 0.1

            // rotateYAxis(by:) and rotateZAxis(by:) are custom float4x4 extensions, not part of simd
            let rotationY = float4x4().rotateYAxis(by: 180)
            let rotationZ = float4x4().rotateZAxis(by: 270)
            let rotation = rotationY * rotationZ

            let transformation = translation * scale * rotation
            let transform = simd_mul(currentFrame.camera.transform, transformation)

            // Add a new anchor to the session
            let anchor = ARAnchor(transform: transform)
            session.add(anchor: anchor)
        }
    }

I also noticed that the template code has a part that updates the anchors and applies a coordinate-space transform to each one. Do I maybe need to apply the translation and scale here?

    func updateAnchors(frame: ARFrame) {
        // Update the anchor uniform buffer with transforms of the current frame's anchors
        anchorInstanceCount = min(frame.anchors.count, kMaxAnchorInstanceCount)

        var anchorOffset: Int = 0
        if anchorInstanceCount == kMaxAnchorInstanceCount {
            anchorOffset = max(frame.anchors.count - kMaxAnchorInstanceCount, 0)
        }

        for index in 0..<anchorInstanceCount {
            let anchor = frame.anchors[index + anchorOffset]

            // Flip Z axis to convert geometry from right handed to left handed
            var coordinateSpaceTransform = matrix_identity_float4x4
            coordinateSpaceTransform.columns.2.z = -1.0

            let modelMatrix = simd_mul(anchor.transform, coordinateSpaceTransform)

            let anchorUniforms = anchorUniformBufferAddress.assumingMemoryBound(to: InstanceUniforms.self).advanced(by: index)
            anchorUniforms.pointee.modelMatrix = modelMatrix
        }
    }
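To make the question concrete, here is a rough sketch of what I imagine applying the scale in that loop might look like. `makeScaleMatrix` and `makeModelMatrix` are hypothetical helpers of mine, not part of the template, and the 0.1 factor is just the scale I used in the tap handler. I am assuming the scale should be applied in model space (before the Z flip and the anchor transform) so that the anchor's position on the plane is left untouched; I am not sure this is the right place for it.

```swift
import simd

// Hypothetical helper (not in the template): uniform scale matrix.
func makeScaleMatrix(_ s: Float) -> simd_float4x4 {
    var m = matrix_identity_float4x4
    m.columns.0.x = s
    m.columns.1.y = s
    m.columns.2.z = s
    return m
}

// Hypothetical helper: scales the model first, then applies the
// template's Z flip, then places the result at the anchor.
func makeModelMatrix(anchorTransform: simd_float4x4, modelScale: Float) -> simd_float4x4 {
    // Flip Z axis to convert geometry from right handed to left handed
    var coordinateSpaceTransform = matrix_identity_float4x4
    coordinateSpaceTransform.columns.2.z = -1.0

    // Model-space part: flip * scale (no translation involved)
    let local = simd_mul(coordinateSpaceTransform, makeScaleMatrix(modelScale))

    // World placement: anchor * (flip * scale)
    return simd_mul(anchorTransform, local)
}
```

In `updateAnchors`, the `modelMatrix` line would then become something like `makeModelMatrix(anchorTransform: anchor.transform, modelScale: 0.1)`. Since the scale is multiplied on the right, the translation column of the anchor transform stays as it is.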

Here is what it looks like when it detects the dog bed plane and then renders R2.