
Bring your machine learning and AI models to Apple silicon

Learn how to optimize your machine learning and AI models to leverage the power of Apple silicon. Review model conversion workflows to prepare your models for on-device deployment. Understand model compression techniques that are compatible with Apple silicon, and at what stages in your model deployment workflow you can apply them. We'll also explore the tradeoffs between storage size, latency, power usage and accuracy.

Chapters

- 0:00 - Introduction

- 2:47 - Model compression

- 13:35 - Stateful model

- 17:08 - Transformer optimization

- 26:24 - Multifunction model

Related Videos

WWDC24

- Deploy machine learning and AI models on-device with Core ML

- Explore machine learning on Apple platforms

- Support real-time ML inference on the CPU

WWDC23

- Improve Core ML integration with async prediction

- Use Core ML Tools for machine learning model compression


Hi, my name is Qiqi Xiao. I’m an engineer on the Core ML team. Today I am going to talk about several exciting updates that have been made to Core ML Tools. These updates will help you better bring your machine learning and AI models to Apple Silicon. There are three important phases in a model deployment workflow: training, preparation, and integration. I will focus on the preparation step and share a number of optimizations and features that help your model run as efficiently as possible on device. For this session, I assume that you already have a machine learning model. This model could be pre-trained, fine-tuned, or trained from scratch. If you want to learn more about training, I recommend this year’s “Train your machine learning and AI models on Apple GPUs” video. I will not be covering the code you write to integrate your model within your app; I recommend the videos below to learn more about integration. Now, let’s jump in.

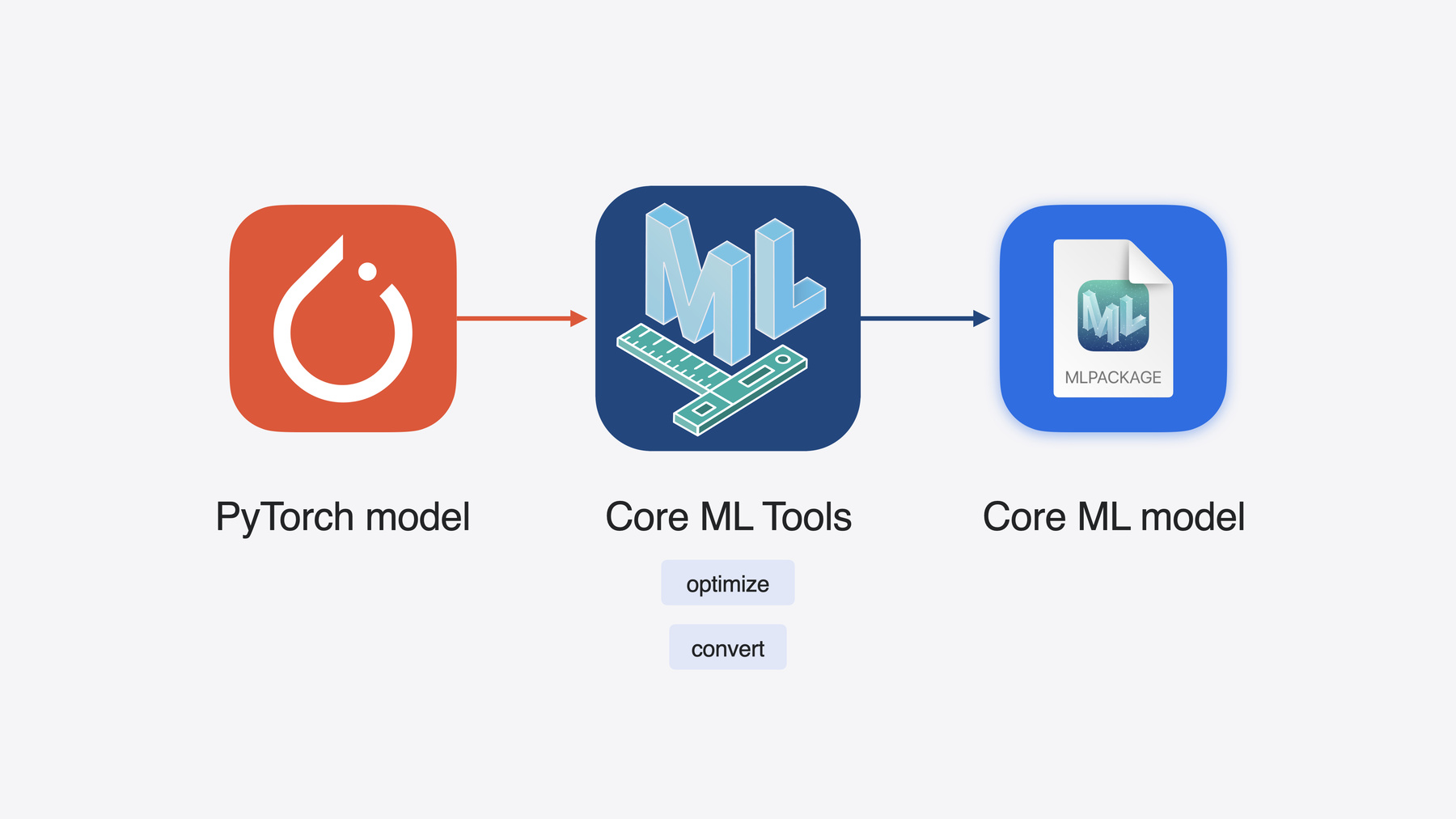

Core ML Tools is an open-source Python package containing utilities to optimize and convert your models for use with Apple frameworks. It lets you take your PyTorch models and transform them into the Core ML format, which is optimized for execution on Apple Silicon. Apple Silicon’s unified memory, CPU, GPU, and Neural Engine provide low-latency, efficient compute for machine learning workloads on device. By default, simply converting your model into the Core ML format and using Apple’s frameworks for inference allows your app to leverage the power of Apple Silicon. While Apple Silicon powers all of our platforms, each platform has its own unique characteristics and strengths. You will want to consider the combination of storage, memory, and compute available on the platforms and devices you are targeting. These attributes need to be aligned with the model size, accuracy, and latency requirements of your use case. Part of model preparation is exploring your options and applying various optimizations to find the best alignment.

Let's explore how to do that together. I’ll start with model compression. In this section, you will learn how to use different compression techniques and workflows. After that, I’ll hand it over to Junpei, who will tell you more about stateful model support. He will also talk about optimizations for transformer architectures. Finally, he will introduce multifunction model support.

Now let’s get started with model compression. Machine learning models that perform well are much bigger today. They need more storage space, incur higher latency, and consume more power, which makes them more difficult to deploy efficiently on device. That leaves us with one option: reduce the model size with model compression.

Let's first recap the weight compression techniques introduced last year. The first technique is called palettization. In palettization, weights with similar values are clustered together and represented by the value of their cluster centroid. The cluster centroids are stored in a lookup table, and the compressed weight is an N-bit index map into that lookup table. In this example, the weights are compressed to 2 bits. The second technique is called quantization. To perform quantization, you take the float weight values and linearly map them into an integer range. The integer weights are stored along with a pair of scale and bias values, known as quantization parameters, which can later be used to map the integers back to float.
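To make the two representations concrete, here is a minimal pure-Python sketch. It is an illustration, not Core ML Tools' implementation. `palettize` clusters values with a simple 1-D k-means into a lookup table of `2**nbits` centroids plus per-weight indices. `quantize` maps floats linearly to integers with one scale and bias, so that `w ≈ q * scale + bias`. Both function names and the sample weights are hypothetical.

```python
def palettize(weights, nbits, iters=20):
    """Palettization sketch: 1-D k-means into 2**nbits centroids.

    Returns (lut, indices): the lookup table of centroids and, for every
    weight, the N-bit index of its nearest centroid.
    """
    k = 2 ** nbits
    lo, hi = min(weights), max(weights)
    # Start with centroids spread evenly over the value range.
    lut = [lo + (hi - lo) * c / (k - 1) for c in range(k)]

    def nearest(w):
        return min(range(k), key=lambda c: abs(w - lut[c]))

    for _ in range(iters):
        indices = [nearest(w) for w in weights]
        # Move each centroid to the mean of the weights assigned to it.
        for c in range(k):
            members = [w for w, i in zip(weights, indices) if i == c]
            if members:
                lut[c] = sum(members) / len(members)
    return lut, [nearest(w) for w in weights]


def quantize(weights, nbits=8):
    """Linear quantization sketch: w ≈ q * scale + bias, q in [0, 2**nbits - 1]."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / (2 ** nbits - 1) if hi > lo else 1.0
    bias = lo
    q = [round((w - bias) / scale) for w in weights]
    return q, scale, bias


weights = [0.1, 0.11, 0.5, 0.52, -0.3, -0.31, 0.9, 0.91]
lut, idx = palettize(weights, nbits=2)  # 2-bit: four centroids
print("lookup table:", [round(c, 3) for c in lut], "indices:", idx)
q, scale, bias = quantize(weights, nbits=8)
print("int weights:", q)
```

Only the small lookup table and the 2-bit indices would need to be stored for the palettized version. The quantized version stores the 8-bit integers plus one scale and one bias.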

The third technique is pruning. Pruning lets you efficiently pack your model weights into a sparse representation. Starting with a weight matrix, I set the smallest values to 0. Now I only need to store the bit mask and the non-zero values. These existing techniques have been working well on many models. Now let's apply some of them to a large model and look at how we have extended them for further compression.
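Here is a minimal sketch of magnitude pruning into a bit mask plus non-zero values, assuming "smallest" means smallest absolute value. The `prune`/`unprune` helpers are hypothetical and only illustrate the representation described above.

```python
def prune(weights, sparsity):
    """Magnitude-pruning sketch: zero the `sparsity` fraction of smallest |w|.

    Returns (mask, values): a bit mask of kept positions plus the
    non-zero values in order, which is all a sparse format needs to store.
    """
    n_prune = int(len(weights) * sparsity)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(by_magnitude[:n_prune])
    mask = [0 if i in pruned else 1 for i in range(len(weights))]
    values = [w for w, keep in zip(weights, mask) if keep]
    return mask, values


def unprune(mask, values):
    """Rebuild the dense weights from the bit mask and non-zero values."""
    it = iter(values)
    return [next(it) if keep else 0.0 for keep in mask]


weights = [0.5, -0.01, 0.2, 0.03, -0.9, 0.0, 0.4, -0.05]
mask, values = prune(weights, sparsity=0.5)
print(mask, values)
print(unprune(mask, values))
```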

Here is an example using Stable Diffusion. It takes a natural language description, known as a prompt, and produces an image matching that description. After converting it, I find that the largest of its models is over 5 GB in Float16 precision. This is too big to run on iPhones or iPads as is. To deploy this model, we need to compress it. Let’s try palettization, which offers the flexibility to choose the number of bits and so achieve different compression ratios. For example, applying 8-bit palettization reduces the model size to about half of the Float16 model. I get a wonderful image with my prompt, “Cat in a tuxedo, oil on canvas”, on my Mac. However, I want to get this model below 2 GB before considering integration on iPhone and iPad. 6-bit palettization meets this requirement, and I can finally run this model on my iPad. What about 4 bits? I can no longer get a good image, meaning the model is no longer accurate. So what’s going on here? To understand it better, we need to revisit the palettization representation. iOS 17 only supported per-tensor palettization, where all the values are clustered into a single lookup table. With 4-bit palettization, there are only 16 cluster centroids to represent the whole tensor. This low granularity introduces more potential error for large matrices. Now let’s think about how to increase the granularity.

Taking a step back, I would like to explain a few terms that are commonly used in machine learning. Weights can be represented as a matrix; here I use the matrix rows to represent output channels and the columns to represent input channels. We can apply compression to the whole tensor, to each group of channels, or to each channel individually. We can even split each row into smaller blocks, applying compression at a sub-channel level.

From left to right in the diagram, the granularity gradually increases. Back to palettization: while in iOS 17 we could assign only a single lookup table to the full weight tensor, in iOS 18 we can store more than one lookup table, one for each group of channels. This achieves much better accuracy.
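The next sketch shows why more lookup tables help. It is written in pure Python as an illustration only. `palettize_grouped` builds one lookup table per group of `group_size` output channels (rows), and setting `group_size` to the number of rows reduces it to per-tensor palettization. The helper names and the toy matrix are hypothetical. The sketch uses 1-bit tables, so the effect is visible on a 4x4 matrix.

```python
def kmeans_1d(values, k, iters=20):
    """Tiny 1-D k-means returning (centroids, index per value)."""
    lo, hi = min(values), max(values)
    lut = [lo + (hi - lo) * c / max(k - 1, 1) for c in range(k)]

    def nearest(v):
        return min(range(k), key=lambda c: abs(v - lut[c]))

    for _ in range(iters):
        idx = [nearest(v) for v in values]
        for c in range(k):
            members = [v for v, i in zip(values, idx) if i == c]
            if members:
                lut[c] = sum(members) / len(members)
    return lut, [nearest(v) for v in values]


def palettize_grouped(matrix, nbits, group_size):
    """One lookup table per group of `group_size` output channels (rows).

    group_size == len(matrix) reproduces per-tensor palettization.
    """
    cols = len(matrix[0])
    luts, index_rows = [], []
    for start in range(0, len(matrix), group_size):
        group = matrix[start:start + group_size]
        lut, idx = kmeans_1d([w for row in group for w in row], 2 ** nbits)
        luts.append(lut)
        index_rows.extend(idx[r * cols:(r + 1) * cols] for r in range(len(group)))
    return luts, index_rows


def reconstruct(luts, index_rows, group_size):
    return [[luts[r // group_size][i] for i in row] for r, row in enumerate(index_rows)]


def max_error(a, b):
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


# Two output channels with tiny weights, two with large weights.
matrix = [
    [0.01, 0.02, -0.01, -0.02],
    [0.015, -0.015, 0.02, -0.01],
    [5.0, -5.0, 4.0, -4.0],
    [4.5, -4.5, 5.0, -5.0],
]
for gs in (len(matrix), 2):  # per-tensor, then per-grouped-channel
    luts, idx = palettize_grouped(matrix, nbits=1, group_size=gs)
    err = max_error(matrix, reconstruct(luts, idx, gs))
    print(f"group_size={gs}: {len(luts)} LUT(s), max error {err:.3f}")
```

With a single table, the tiny and large channels compete for the same centroids. With one table per group, each group gets centroids fitted to its own value range.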

For linear quantization, iOS 17 allowed per-channel scale and bias; with iOS 18, these quantization parameters can also be provided per block.
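As a rough illustration of per-block quantization, here is a pure-Python sketch, not the Core ML Tools algorithm. Each output channel (row) is split into blocks of `block_size` columns, and every block gets its own scale and bias. Setting `block_size` to the row length gives ordinary per-channel quantization. The function names and the example row are hypothetical.

```python
def quantize_block(values, nbits):
    """Linear quantization of one block: v ≈ q * scale + bias."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / (2 ** nbits - 1) if hi > lo else 1.0
    return [round((v - lo) / scale) for v in values], scale, lo


def quantize_rows(matrix, nbits, block_size):
    """Per row, a list of (q, scale, bias) tuples, one per block of columns.

    block_size == number of columns is ordinary per-channel quantization.
    """
    return [[quantize_block(row[s:s + block_size], nbits)
             for s in range(0, len(row), block_size)]
            for row in matrix]


def dequantize_rows(qmatrix):
    return [[q * scale + bias for qs, scale, bias in blocks for q in qs]
            for blocks in qmatrix]


# One output channel: a block of small weights followed by a block of large ones.
row = [0.01 * i for i in range(8)] + [10.0, -10.0, 9.0, -9.0, 8.0, -8.0, 7.0, -7.0]
for block_size in (len(row), 8):  # per-channel, then per-block
    restored = dequantize_rows(quantize_rows([row], nbits=4, block_size=block_size))[0]
    err = max(abs(a - b) for a, b in zip(row[:8], restored[:8]))
    print(f"block_size={block_size}: max error on small weights {err:.4f}")
```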

We can now compress pruned weights further as well. Previously, sparsifying a dense weight left its non-zero values in float precision. Now those non-zero values can be further compressed using palettization or quantization, so your model can be even smaller. This lets you use the characteristics of two compression techniques at the same time, making sparse palettization and sparse quantization possible.
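A minimal pure-Python sketch of sparse quantization follows. The weights are first magnitude-pruned into a bit mask, and only the surviving values are linearly quantized. The storage estimate assumes 1 bit per mask entry, `nbits` per kept value, and a Float16 scale and bias. That accounting and all names here are illustrative assumptions, not the actual Core ML format.

```python
def sparse_quantize(weights, sparsity, nbits):
    """Prune the smallest |w|, then linearly quantize only the non-zeros."""
    n_prune = int(len(weights) * sparsity)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(by_magnitude[:n_prune])
    mask = [0 if i in pruned else 1 for i in range(len(weights))]
    nonzero = [w for w, keep in zip(weights, mask) if keep]
    lo, hi = min(nonzero), max(nonzero)
    scale = (hi - lo) / (2 ** nbits - 1) if hi > lo else 1.0
    q = [round((w - lo) / scale) for w in nonzero]
    return mask, q, scale, lo


def sparse_dequantize(mask, q, scale, bias):
    it = iter(q)
    return [next(it) * scale + bias if keep else 0.0 for keep in mask]


def storage_bits(mask, q, nbits, param_bits=16):
    # 1 bit per mask entry + nbits per kept value + a Float16 scale and bias.
    return len(mask) + len(q) * nbits + 2 * param_bits


weights = [((i * 37) % 101 - 50) / 50 for i in range(100)]
mask, q, scale, bias = sparse_quantize(weights, sparsity=0.4, nbits=4)
print("dense Float16 bits:", 16 * len(weights), "sparse 4-bit bits:", storage_bits(mask, q, 4))
```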

Of the three extensions discussed earlier, let's take palettization as an example of how to apply them with some Python code. To start, create an OpPalettizerConfig object describing how you want to compress the model. Here, I use 4 bits with the "kmeans" clustering mode. I want to assign one lookup table to each group of 16 channels, so I set the granularity to "per_grouped_channel" and the group_size to 16. Once the config is defined, you can put it into an OptimizationConfig and use palettize_weights to compress the model. As you can see, it’s a simple process.

Now let’s see how this model performs. With group_size 16, I get another cat matching my prompt. This generated image is much better than before, meaning I recover most of the accuracy with only a very slight size increase, from 1.29 to 1.3 GB. The increase comes from the small amount of extra space taken by the additional lookup tables.
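Some back-of-envelope arithmetic shows why the extra lookup tables cost so little. The estimate assumes 4-bit indices and Float16 table entries, and it ignores any format overhead. The 1280 x 1280 layer shape and the helper name are hypothetical. The numbers describe one illustrative layer, not the Stable Diffusion model above.

```python
import math


def palettized_bytes(out_ch, in_ch, nbits, group_size=None, lut_entry_bits=16):
    """Approximate storage for one palettized weight matrix.

    group_size=None means a single per-tensor lookup table; otherwise there
    is one table of 2**nbits Float16 entries per group of output channels.
    """
    n_luts = 1 if group_size is None else math.ceil(out_ch / group_size)
    index_bits = out_ch * in_ch * nbits
    lut_bits = n_luts * (2 ** nbits) * lut_entry_bits
    return (index_bits + lut_bits) // 8


shape = (1280, 1280)  # hypothetical layer size, for illustration only
per_tensor = palettized_bytes(*shape, nbits=4)
grouped = palettized_bytes(*shape, nbits=4, group_size=16)
print(per_tensor, grouped, f"overhead {100 * (grouped - per_tensor) / per_tensor:.2f}%")
```

The indices dominate the storage. Going from 1 to 80 lookup tables of 16 entries each adds only a few kilobytes to this layer.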

To summarize, in iOS 18, per-grouped-channel palettization increases the granularity by using multiple lookup tables; it shines on Apple Silicon with the Neural Engine. In addition to per-block quantization, we have also extended support from 8-bit to include 4-bit quantization; this mode is especially optimized for the GPUs on Macs. Finally, you can now combine sparsity with the other compression modes, which works well on the Neural Engine.

Before I conclude on model compression, I want to discuss compression workflows, another dimension that can help you get smaller models. So far, the workflow we have been using is a post-training approach. It starts with a pre-trained Core ML model, which gets compressed without any data in a very convenient way. However, this data-free workflow may not yield the best tradeoff between accuracy and the amount of compression: at higher compression ratios, accuracy drops more rapidly. One way around this is training-time compression. You can fine-tune your PyTorch model with training data while compressing the weights, and then convert it to the Core ML format. While this can give you a more accurate model, the process can be time-consuming and data-hungry for a large model. Now we have a new workflow for you: post-training compression with calibration data.

It offers a tradeoff between the data-free and fine-tuning approaches. In this case, a limited amount of data is needed to calibrate the model compression. No fine-tuning is required, which makes it easier to set up and faster to run. With new APIs introduced in coremltools.optimize this year, you can easily perform this workflow. Let's walk through an example.

Here I use pruning as an example. Start by importing the necessary modules, then specify the prune_config. Here I set target_sparsity to 0.4, meaning 40 percent of the weight values will be pruned away, and I set the number of samples to 128. Then I create the pruner with LayerwiseCompressor, passing in your PyTorch model and the config. Next comes the calibration_data_loader, which you define yourself for your model. Finally, call pruner.compress with the calibration_data_loader to calibrate a sparse model.
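The next sketch shows how calibration data can change which weights get pruned. It scores each weight of a linear layer `y = W x` by `|W[i][j]|` times the L2 norm of input channel `j` over the calibration samples. This is an activation-weighted magnitude score, similar in spirit to the Wanda pruning method. It is a hypothetical illustration, not the algorithm LayerwiseCompressor uses, and all names are made up.

```python
import math


def calibrated_prune(weight, calib_inputs, target_sparsity):
    """Prune a linear layer y = W x using calibration activations.

    weight: list of output rows, each a list over input channels.
    calib_inputs: input vectors x observed on calibration data.
    Each weight is scored |W[i][j]| * ||x_j||_2; the lowest scores are zeroed.
    """
    in_ch = len(weight[0])
    act_norm = [math.sqrt(sum(x[j] ** 2 for x in calib_inputs)) for j in range(in_ch)]
    scores = sorted((abs(w) * act_norm[j], i, j)
                    for i, row in enumerate(weight) for j, w in enumerate(row))
    n_prune = int(len(scores) * target_sparsity)
    pruned = [row[:] for row in weight]
    for _, i, j in scores[:n_prune]:
        pruned[i][j] = 0.0
    return pruned


# Input channel 0 has large weights but is always zero on the calibration data.
weight = [[5.0, 0.3, 0.2], [4.0, 0.25, 0.35]]
calib_inputs = [[0.0, 1.0, 1.0], [0.0, 2.0, -1.0]]
print(calibrated_prune(weight, calib_inputs, target_sparsity=0.4))
```

Pure magnitude pruning would remove the 0.2 and 0.25 weights. The calibration data shows that channel 0 never contributes to the output, so its large weights are the ones removed instead.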

You can convert your sparse model to the Core ML format now. Or, since sparse palettization is possible, as we discussed, you can apply palettization after pruning for further compression. To carry over the sparsity metadata, we continue with the sparse model we obtained previously. Again, import the necessary modules, then configure palettization; here I again use 4 bits, set the granularity to "per_grouped_channel", and set group_size to 16. You can now create a PostTrainingPalettizer object and get the sparse_palettized_model with palettizer.compress. This PyTorch model can also be converted to the Core ML format seamlessly.

After applying 40% sparsity, the Stable Diffusion model size is further reduced to 1.1 GB. With data-free post-training compression, the model produces only noise, while with the calibration-based workflow, the model still generates another cat image. The calibration-based workflow works well, and I like this new image. To summarize, in Core ML Tools 8 you can try the new compression representations, as well as the new compression workflow that uses calibration data. There are many more new features this year that we did not get to cover in this video. Please check out our documentation to learn more. Happy compressing. Now, let’s hand it over to Junpei to talk about stateful models and other optimizations.

Thanks, Qiqi. Hi, I’m Junpei Zhou, and I’m an engineer on the Core ML team. I’ll be talking about stateful models, optimizations for transformer models, and multifunction support, to help you prepare machine learning models for Apple Silicon. Let’s kick off with stateful model support.

For any machine learning model, we usually just have inputs and outputs. The input is processed by the operations in the model to produce the output. Usually, the dataflow inside the model only lives for a specific inference run. However, sometimes we need something with a longer lifespan that can store information across different runs. In this case, a state is usually used. The model can read data from the state and write results back to it. The information in the state is persistent and can be used across different runs.

Here is an example of an accumulator, which keeps track of the summation of all historical inputs. The state is initialized to zero. The model reads the value from the state and the input, to produce the output. Meanwhile it also writes the result back to the state.

Similarly, when another input comes, it reads from the state, produces the output, while also updating the state.

Before Core ML supported states, one way to handle states in a model was to explicitly define additional inputs and outputs. For the accumulator example, we need input and output fields for the accumulator, then we explicitly copy over the accumulator output to the next accumulator input. This may be inefficient, especially for cases when the states are large.

This year, Core ML is introducing support for stateful models. Now the model takes care of updating the state tensors automatically so you don’t need to define them as inputs or outputs. Instead, you can update states in-place, which leads to better performance.
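The accumulator example can be mimicked in plain Python to contrast the two styles. This is an analogy only, not Core ML or PyTorch code, and the class and method names are hypothetical. In the stateless version, the caller must copy the accumulator output back into the next input. In the stateful version, the model owns its running sum and updates it in place.

```python
class StatelessAccumulator:
    """Before states: the running sum is an explicit input and output."""

    def predict(self, x, accumulator_in):
        total = accumulator_in + x
        return total, total  # (output, accumulator_out)


class StatefulAccumulator:
    """With states: the model owns the running sum and updates it in place."""

    def __init__(self):
        self.state = 0.0  # the state is initialized to zero

    def predict(self, x):
        self.state += x  # read the state, add the input, write the result back
        return self.state


inputs = [1.0, 2.0, 3.0]

stateless, acc, outs_a = StatelessAccumulator(), 0.0, []
for x in inputs:
    y, acc = stateless.predict(x, acc)  # caller copies output back to input
    outs_a.append(y)

stateful = StatefulAccumulator()
outs_b = [stateful.predict(x) for x in inputs]
print(outs_a, outs_b)
```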

For the accumulator example, here is how I will define the model in PyTorch. I will use the register_buffer API in torch to denote the state tensors for my model.

Once that is done, converting a stateful torch model to a stateful Core ML model is also straightforward. I just need to specify the states using the newly introduced ct.StateType, providing the same state name I used with the register_buffer API. I then pass the states to ct.convert to convert the stateful model.

The converted Core ML model is now stateful. In the torch model, state reads and writes are usually done by in-place ops and slice-update ops. During conversion, Core ML Tools generates the corresponding ops in the converted Core ML model. If you are interested in learning more about deploying stateful Core ML models on device, please check out this video.

Now let’s talk about transformer optimizations. Transformers are among the most popular model architectures for foundation models. They are built from multi-head attention blocks, which are computationally intensive and are usually the bottleneck during model inference.

An attention block is composed of several MatMul and Softmax ops, and three large tensors referred to as Query, Key, and Value. To generate a token, the Query vector of the current word attends to the Key vectors of all previous words in the sequence, and the Value vectors are then fused based on the resulting attention scores. However, at each time step, the Key and Value vectors for the previous tokens were already computed in earlier steps.

To avoid this duplicated calculation, we can use a Key-Value cache, or KV-cache for short, to store the Key and Value vectors calculated at each step so they can be reused in future steps.
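Here is a single-head, pure-Python sketch of a KV-cache during decoding. At each step only the new token's Key and Value are appended, and the new Query attends over everything cached so far. The per-token projections are hypothetical toy vectors. The result for the last token matches recomputing attention over the full sequence from scratch.

```python
import math


def attend(q, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    d = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
    m = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    probs = [e / total for e in exps]
    return [sum(p * v[c] for p, v in zip(probs, values)) for c in range(len(values[0]))]


class KVCache:
    """Keeps the Key/Value vectors of earlier tokens so they are computed once."""

    def __init__(self):
        self.keys, self.values = [], []

    def step(self, q, k, v):
        # Only the new token's K and V are added; earlier ones are reused.
        self.keys.append(k)
        self.values.append(v)
        return attend(q, self.keys, self.values)


# Hypothetical per-token (query, key, value) projections for a 3-token sequence.
tokens = [
    ([1.0, 0.0], [0.5, 0.1], [1.0, 2.0]),
    ([0.0, 1.0], [0.2, 0.9], [3.0, 0.0]),
    ([1.0, 1.0], [0.7, 0.4], [0.0, 1.0]),
]
cache = KVCache()
for q, k, v in tokens:
    out = cache.step(q, k, v)
print("last output with cache:", out)
```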

KV-cache is a popular and effective technique for making decoding in large language models faster. It’s a perfect use case for the stateful models we introduced earlier.

Without states, the KV-cache can only be passed through model inputs and outputs, which is inefficient because the KV-cache usually consists of large tensors. With states, it can be updated in place more efficiently. This stateful KV-cache is useful when preparing your transformer models for Apple Silicon.

Let’s go back to the attention block structure. We have seen how a stateful KV-cache makes preparing the Key and Value tensors for attention more efficient. A natural follow-up question is: can we make the attention calculation itself more efficient? This brings us to another optimization for transformer models: a fused representation for scaled dot-product attention, also known as SDPA.

Previously, when you converted a torch model with the SDPA op, Core ML Tools broke the SDPA op into several smaller ops, including matmul, softmax, and other ops.
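For reference, here is the standard scaled dot-product attention formula. In it, $d_k$ is the Key dimension, and $M$ is an optional additive attention mask that is 0 where attention is allowed and $-\infty$ where it is not. The decomposed conversion spells this out as a matmul for $QK^\top$, a scaling step, a mask addition, a softmax, and a second matmul with $V$. A fused SDPA op is intended to compute the same result as one operation.

$$
\mathrm{SDPA}(Q, K, V, M) = \mathrm{softmax}\!\left(\frac{Q K^\top}{\sqrt{d_k}} + M\right) V
$$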

This year, with the minimum deployment target set to iOS 18, Core ML Tools uses a single SDPA op in the converted Core ML model. This SDPA op takes all its inputs at once and calculates the attention more efficiently. It really shines on Apple Silicon GPUs. If you want to learn more about SDPA op optimization with Metal, I highly recommend checking out this video.

After introducing these exciting features, let’s walk through a demo about preparing the Mistral-7B model for Apple Silicon using Core ML Tools. Mistral-7B is a powerful large language model with 7.3 billion parameters. With the stateful KV-cache and SDPA op introduced this year, we can convert it to a Core ML model that is ready to run smoothly on Apple Silicon.

Let's use this Jupyter Notebook to show how to convert the Mistral-7B model to a stateful Core ML model with post-training per-block quantization.

Let me import some utils to make code cleaner and easier to follow.

The first step is to make the original Mistral 7B model stateful.

Starting from the original Mistral-7B model, we need to modify it so that it handles the KV-cache efficiently as state.

We can register buffers for the KV-cache. I call them "keyCache" and "valueCache". There are other modifications to the model, such as updating the KV-cache in place during the forward pass. You can find the details in the utils.

In the forward function, as we already have the registered buffers for KV-cache, we no longer need to have them as the inputs here. With all those changes, we get a stateful Mistral-7B model, which has KV-cache as persistent states.

Now we can initialize this model with weights automatically downloaded from Hugging Face, and set the model to eval mode.

We then trace the model by specifying example inputs; for the Mistral-7B model, these are input_ids and causal_mask.
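The notebook's helper that builds `causal_mask` isn't shown. This sketch assumes the common additive convention, in which disallowed positions hold `-inf` and are added to the attention scores before the softmax. With a KV-cache, the mask covers the cached past tokens plus the new ones. The function and parameter names are hypothetical, and a real model input would typically also carry batch and head dimensions.

```python
NEG_INF = float("-inf")


def causal_mask(query_len, past_len):
    """Additive causal mask of shape (query_len, past_len + query_len).

    Query i sits at absolute position past_len + i and may attend to key j
    only if j <= past_len + i; other positions get -inf before the softmax.
    """
    total = past_len + query_len
    return [[0.0 if j <= past_len + i else NEG_INF for j in range(total)]
            for i in range(query_len)]


# A 3-token prompt with an empty cache, then one decode step after 3 cached tokens.
for row in causal_mask(query_len=3, past_len=0):
    print(row)
print(causal_mask(query_len=1, past_len=3))
```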

To convert this model, we specify inputs and outputs as usual. Here we use ct.RangeDim for the input shapes, because the prompt length can differ across runs.

To make sure the converted Core ML model also has states for the KV-cache, we specify states using this year's newly introduced ct.StateType. Here we use the names "keyCache" and "valueCache" to match the names we used when registering the buffers in the torch model.

Finally, we call ct.convert with the inputs, outputs, and states, and set minimum_deployment_target to iOS 18. Because the states are passed to ct.convert, the converted Core ML model is a stateful model whose key cache and value cache can be updated in place. After conversion, let's save the model to disk to check its size.

The conversion is finished. Let's check the model disk size.

It's 13 GB, which is a pretty large model.

Let's test the model by running a model prediction, which uses the Core ML framework under the hood. Here is a prompt asking the model to recommend a place to visit in Seattle in June.

As we can see, even though the model is quite large, the converted stateful Core ML model runs smoothly on my Mac.

This Float16 model is a little bit too large. Let's use some of the model compression techniques Qiqi mentioned before.

We can use the ct.optimize module to specify the compression config. In the OpLinearQuantizerConfig, we set dtype to int4, granularity to "per_block", and block_size to 32. We set it as the global_config because we want to compress all weights in the model. You can also specify configs by operation type or operation name if you want.

Then we can use the linear_quantize_weights tool from the ct.optimize module to quantize the model according to the config we specified: int4, per-block quantization, where each block has 32 elements. With this tool, Core ML Tools goes through all the weights in the model and compresses them using the linear quantization algorithm.

The quantization finished. Let's check the quantized model size.

Nice! The disk size of the quantized model is much smaller than the original Float16 model, reduced from 13 GB to less than 4 GB.
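A quick estimate shows why the size drops by roughly this much. The sketch counts weights only. It assumes symmetric int4 quantization with one Float16 scale per 32-element block and no zero point, so each weight costs 4 + 16/32 = 4.5 bits. It ignores metadata and any layers that might be left uncompressed, so its output is an estimate in GiB, not a measurement of the demo model.

```python
GIB = 1024 ** 3


def weight_storage_gib(n_params, weight_bits, block_size=None, scale_bits=16):
    """Back-of-envelope weight storage in GiB.

    With block_size set, each block of weights also stores one scale of
    `scale_bits` bits (symmetric quantization, so no zero point is counted).
    """
    bits_per_weight = weight_bits
    if block_size is not None:
        bits_per_weight += scale_bits / block_size
    return n_params * bits_per_weight / 8 / GIB


n_params = 7.3e9  # Mistral-7B parameter count quoted in the talk
fp16 = weight_storage_gib(n_params, 16)
int4 = weight_storage_gib(n_params, 4, block_size=32)
print(f"Float16 ≈ {fp16:.1f} GiB, int4 per-block ≈ {int4:.1f} GiB")
```

The estimate of about 13.6 GiB versus about 3.8 GiB is in line with the reported drop from 13 GB to under 4 GB.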

Let's use the same prompt to run this quantized model.

Awesome! This much smaller model can run even faster than the original Float16 model, with similar quality of generated outputs. As we showed in this demo, preparing the Mistral-7B model with stateful KV-cache and quantization is straightforward. You can easily follow a similar workflow to bring your favorite language models to Apple Silicon.

Finally, I want to talk about one more feature: support for multifunction Core ML models.

Let's consider a model composed of multiple functions, where each prediction runs a particular combination of them. For example, you may have a feature extractor followed by a classifier layer that produces a classification output, and also a regressor layer that produces a regression output. These two can be combined into a single model that serves both tasks.

Here is how this would look in PyTorch. A classifier and a regressor share the same feature extractor, and each of them has been converted to a Core ML model. This year, we are introducing support for multifunction models: you can use Core ML Tools to merge those two models. During merging, Core ML Tools deduplicates shared weights as much as possible by calculating hash values of the weights. The merged multifunction model can do both tasks with a shared feature extractor.
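Here is a toy illustration of hash-based weight deduplication during a merge. Each function's weights are hashed, identical tensors are stored once, and each function keeps only references to them. The SHA-256-over-float32-bytes choice, the dictionary layout, and all names are hypothetical. Core ML Tools' actual hashing and on-disk format are not described in the talk.

```python
import hashlib
import struct


def weight_hash(values):
    """Hash a weight tensor's raw float32 bytes."""
    return hashlib.sha256(struct.pack(f"{len(values)}f", *values)).hexdigest()


def merge_functions(models):
    """Merge {function_name: {weight_name: [floats]}} into one weight store.

    Each unique tensor is stored once, keyed by its hash; every function
    keeps only references (hashes) to the tensors it uses.
    """
    blobs, functions = {}, {}
    for fn_name, weights in models.items():
        refs = {}
        for w_name, values in weights.items():
            h = weight_hash(values)
            blobs.setdefault(h, values)  # identical tensors collapse to one entry
            refs[w_name] = h
        functions[fn_name] = refs
    return blobs, functions


shared_features = [0.1, 0.2, 0.3]
models = {
    "classifier": {"features": shared_features, "head": [1.0, 2.0]},
    "regressor": {"features": list(shared_features), "head": [3.0]},
}
blobs, functions = merge_functions(models)
print(f"{len(blobs)} unique tensors stored for {len(functions)} functions")
```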

Here is a sample code snippet that uses Core ML Tools to merge Core ML models. After converting the two models with ct.convert and saving them as individual mlpackages, you create a MultiFunctionDescriptor to specify which models to merge and their new function names in the merged model. Then you use the save_multifunction util to produce a merged multifunction Core ML model.

When loading the multi-function model via Core ML Tools Python API, you can specify the function_name to load the specific function and then do the prediction as usual.

The multi-function model is extremely useful in large models. When fine-tuning large models, it’s usually too expensive to fine-tune the whole model. Instead, a more common practice is to use adapters during fine-tuning. The adapters are typically small with fewer parameters, but demonstrate comparable performance to a fully fine-tuned model.

For the same base model, there could be different adapters for different domains and downstream tasks. The adapter blocks are typically not only at the beginning or end of the model; they can also interact with intermediate layers.

You can use Core ML’s new multifunction models to capture multiple adapters in a single model. A separate function would be defined for each adapter. The weights for the base model are shared as much as possible.
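This sketch shows one shared base weight serving several adapter "functions". It assumes a LoRA-style low-rank adapter, y = W x + B(A x), which is one common adapter design. The talk does not say which adapter type is used, and the class and function names are hypothetical. The parameter count at the end shows why such adapters are small compared to the base layer.

```python
def matvec(m, x):
    return [sum(a * b for a, b in zip(row, x)) for row in m]


class MultiAdapterLayer:
    """Shared base weight W plus one low-rank adapter (B @ A) per function."""

    def __init__(self, base):
        self.base = base      # out_features x in_features, shared by all functions
        self.adapters = {}    # function name -> (A, B)

    def add_adapter(self, name, a, b):
        # a: rank x in_features, b: out_features x rank
        self.adapters[name] = (a, b)

    def forward(self, x, function_name):
        a, b = self.adapters[function_name]
        y = matvec(self.base, x)
        delta = matvec(b, matvec(a, x))  # low-rank update B (A x)
        return [yi + di for yi, di in zip(y, delta)]


def adapter_params(out_features, in_features, rank):
    return rank * (in_features + out_features)


layer = MultiAdapterLayer(base=[[1.0, 0.0], [0.0, 1.0]])
layer.add_adapter("style_a", a=[[1.0, 1.0]], b=[[1.0], [0.0]])
layer.add_adapter("style_b", a=[[0.0, 1.0]], b=[[0.0], [2.0]])
x = [2.0, 3.0]
print(layer.forward(x, "style_a"), layer.forward(x, "style_b"))
# Parameter count for a hypothetical 4096 x 4096 layer with a rank-8 adapter:
print(adapter_params(4096, 4096, 8), "vs", 4096 * 4096)
```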

Check out the “Deploy machine learning and AI models on-device with Core ML” video to learn more about integrating multiple functions into your app. You will see a text-to-image example in which a single model with multiple adapters is used to generate images in different styles.

To summarize, you can use new model compression techniques and workflows to greatly reduce the size of the model while still achieving good accuracy. It's also straightforward to have models use states and provide more than one function.

Bringing large transformer models to Apple Silicon has never been easier. For more detailed information, please check out the Core ML Tools documentation. Thank you.
