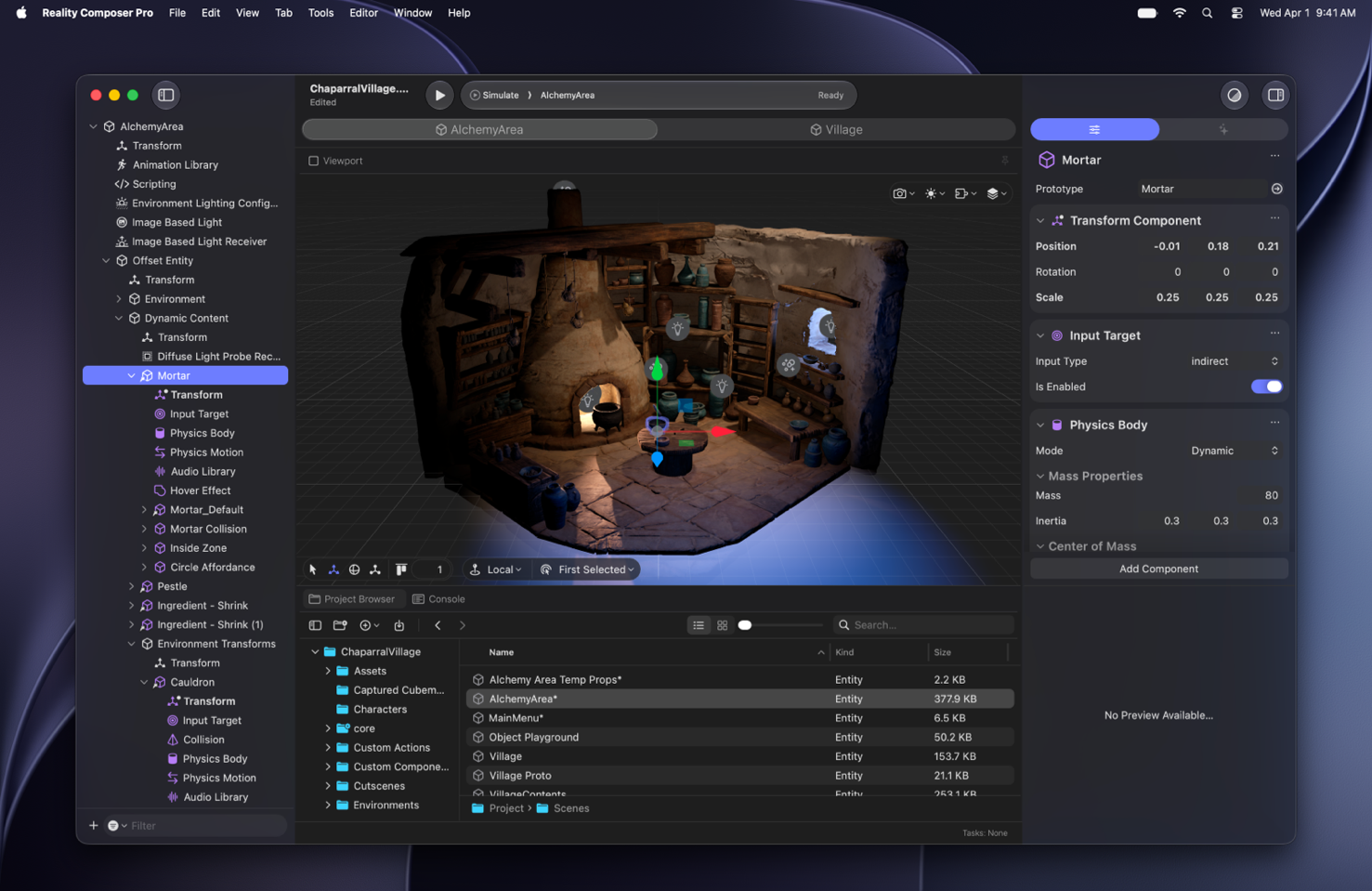

Reality Composer Pro

Reality Composer Pro 3 makes it easy to rapidly iterate, preview, and prepare 3D content for your visionOS apps, iOS apps, and more — right on your Mac. Build stunning scenes and animate characters with cinematic precision. And you can preview your changes live on Apple Vision Pro.

Get to know Reality Composer Pro

With deep Xcode integration, powerful visual scripting, generative intelligence to help with asset creation, and workflows tailored for designers, artists, and engineers alike, Reality Composer Pro closes the gap between idea and experience. Change a material, tweak an animation, adjust a layout — and see the result on device in seconds, not minutes.

Download the beta

The Reality Composer Pro 3 beta requires macOS 26.5 or later. After installing Reality Composer Pro on your Mac, you can also download Reality Composer Pro Preview on your Apple Vision Pro to preview your changes live. To download, sign in with your Apple Account. If prompted, complete the free registration and accept the Apple Developer Agreement.