
What’s new in AVFoundation

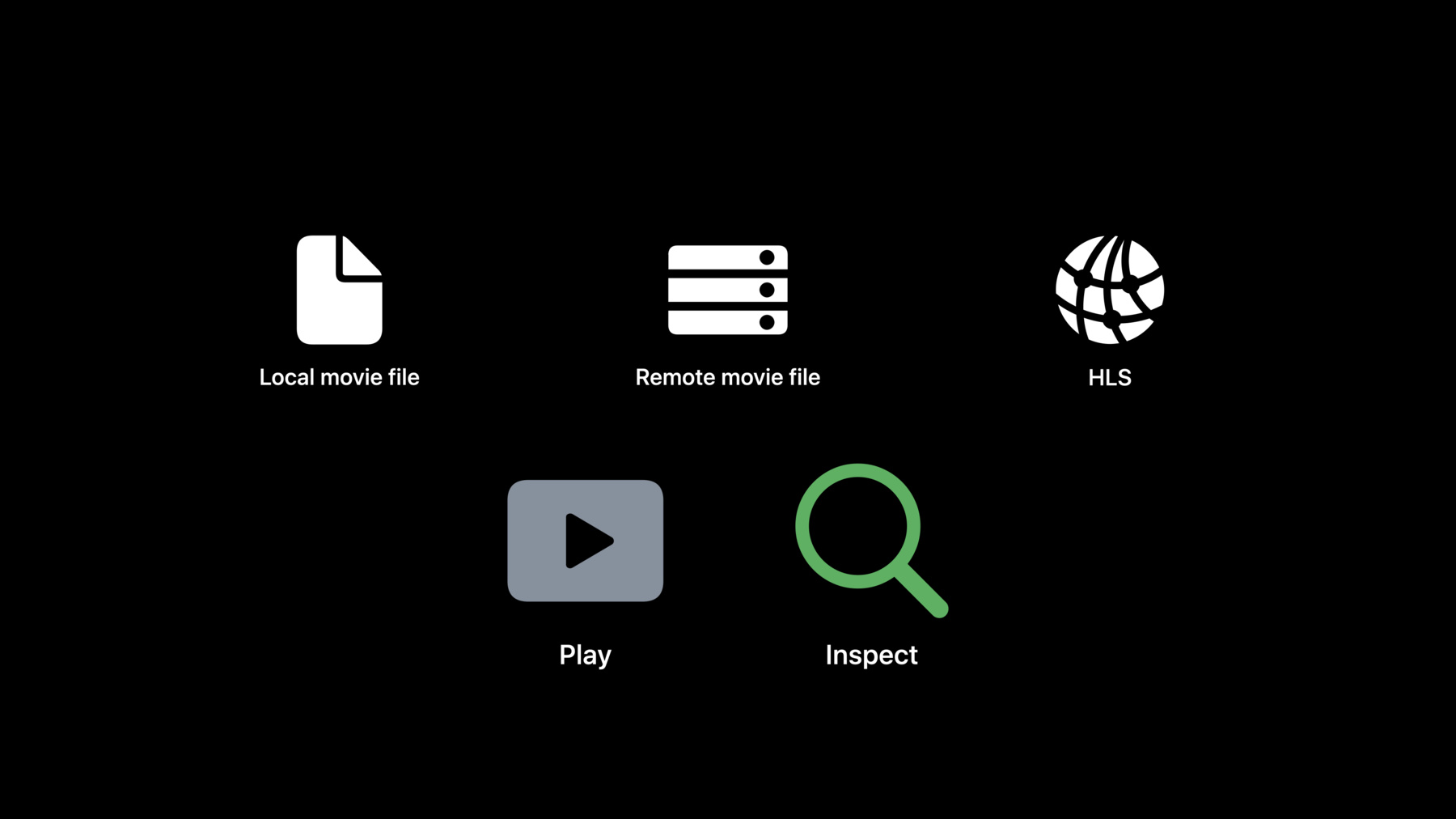

Discover the latest updates to AVFoundation, Apple's framework for inspecting, playing, and authoring audiovisual presentations. We'll explore how you can use AVFoundation to query attributes of audiovisual assets, further tailor custom video compositions with timed metadata, and author caption files.



2:16 - AVAsset property loading

    func inspectAsset() async throws {
        let asset = AVAsset(url: movieURL)
        let duration = try await asset.load(.duration)
        myFunction(thatUses: duration)
    }

4:02 - Load multiple properties

    func inspectAsset() async throws {
        let asset = AVAsset(url: movieURL)
        let (duration, tracks) = try await asset.load(.duration, .tracks)
        myFunction(thatUses: duration, and: tracks)
    }
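The two-property load above throws if either property cannot be loaded, for example when the file is missing or unreadable. The following is a minimal sketch of handling that case with `do`/`catch`; the helper name `summarizeAsset(at:)` and the summary string it returns are illustrative assumptions, not part of the session.

```swift
import AVFoundation

// Hypothetical helper (not from the session): loads duration and tracks
// together and returns a short summary, or nil if loading fails.
@available(macOS 12, iOS 15, tvOS 15, watchOS 8, *)
func summarizeAsset(at url: URL) async -> String? {
    let asset = AVURLAsset(url: url)
    do {
        // Both properties are requested in a single call; a failure to
        // load either one is reported as a thrown error.
        let (duration, tracks) = try await asset.load(.duration, .tracks)
        return "\(tracks.count) track(s), \(duration.seconds) s"
    } catch {
        print("Could not load asset properties: \(error)")
        return nil
    }
}
```

Returning an optional keeps the call site simple; a caller that needs the underlying error could rethrow it instead.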

4:52 - Check status

    switch asset.status(of: .duration) {
    case .notYetLoaded:
        // This is the initial state after creating an asset.
        break
    case .loading:
        // This means the asset is actively doing work.
        break
    case .loaded(let duration):
        // Use the asset's property value.
        print(duration.seconds)
    case .failed(let error):
        // Handle the error.
        print(error)
    }

6:32 - Async filtering methods

    let asset: AVAsset
    let trk1 = try await asset.loadTrack(withTrackID: 1)
    let atrs = try await asset.loadTracks(withMediaType: .audio)
    let ltrs = try await asset.loadTracks(withMediaCharacteristic: .legible)
    let qtmd = try await asset.loadMetadata(for: .quickTimeMetadata)
    let chcl = try await asset.loadChapterMetadataGroups(withTitleLocale: .current)
    let chpl = try await asset.loadChapterMetadataGroups(bestMatchingPreferredLanguages: ["en-US"])
    let amsg = try await asset.loadMediaSelectionGroup(for: .audible)

    let track: AVAssetTrack
    let seg0 = try await track.loadSegment(forTrackTime: .zero)
    let spts = try await track.loadSamplePresentationTime(forTrackTime: .zero)
    let ismd = try await track.loadMetadata(for: .isoUserData)
    let fbtr = try await track.loadAssociatedTracks(ofType: .audioFallback)

7:16 - Async loading: Old API

    asset.loadValuesAsynchronously(forKeys: ["duration", "tracks"]) {
        var error: NSError?
        guard asset.statusOfValue(forKey: "duration", error: &error) == .loaded else { ... }
        guard asset.statusOfValue(forKey: "tracks", error: &error) == .loaded else { ... }
        let duration = asset.duration
        let audioTracks = asset.tracks(withMediaType: .audio)
        // Use duration and audioTracks.
    }

8:09 - This is the equivalent using the new API:

    let duration = try await asset.load(.duration)
    let audioTracks = try await asset.loadTracks(withMediaType: .audio)
    // Use duration and audioTracks.

8:36 - load(_:)

    let tracks = try await asset.load(.tracks)

8:51 - Async filtering method

    let audioTracks = try await asset.loadTracks(withMediaType: .audio)

8:58 - status(of:)

    switch asset.status(of: .tracks) {
    case .loaded(let tracks):
        print(tracks.count) // Use tracks.
    default:
        break
    }

9:18 - load(_:) again (returns cached value)

    let tracks = try await asset.load(.tracks)

9:49 - Assert status is .loaded()

    guard case .loaded(let tracks) = asset.status(of: .tracks) else { ... }
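One way to read the guard pattern above: once `try await asset.load(.tracks)` has succeeded, synchronous code can fetch the same value through `status(of:)` without awaiting again. The sketch below illustrates this; the names `trackCount(of:)` and `prepare(_:)` are hypothetical and not from the session.

```swift
import AVFoundation

// Hypothetical synchronous helper. It assumes the caller has already
// loaded .tracks; if not, it returns nil rather than blocking.
@available(macOS 12, iOS 15, tvOS 15, watchOS 8, *)
func trackCount(of asset: AVAsset) -> Int? {
    guard case .loaded(let tracks) = asset.status(of: .tracks) else {
        return nil
    }
    return tracks.count
}

// Hypothetical async entry point: load once, then hand the asset to
// synchronous code that reads the already-loaded value.
@available(macOS 12, iOS 15, tvOS 15, watchOS 8, *)
func prepare(_ asset: AVAsset) async throws -> Int? {
    _ = try await asset.load(.tracks)
    return trackCount(of: asset)
}
```

Returning nil for every status other than `.loaded` is a design choice for this sketch; code that treats an unloaded property as a programmer error might prefer to trap instead.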

11:49 - Custom video composition with metadata: Setup

    /*
     Source movie:
     - Track 1: Audio
     - Track 2: Video
     - Track 3: Video
     - Track 4: Metadata
     - Track 5: Metadata
     - Track 6: Metadata
    */

    // Tell AVMutableVideoComposition about every metadata track the instructions will need.
    videoComposition.sourceSampleDataTrackIDs = [4, 5]

    // For each AVMutableVideoCompositionInstruction, specify the metadata track ID(s) to include.
    instruction1.requiredSourceSampleDataTrackIDs = [4]
    instruction2.requiredSourceSampleDataTrackIDs = [4, 5]
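The setup above leaves out where `videoComposition` and the two instructions come from. A minimal sketch of one way to create them follows, reusing track IDs 4 and 5 from the snippet. The helper name `makeMetadataComposition(splitAt:end:)` and the two back-to-back time ranges are assumptions for illustration. A real composition would also need a render size, a frame duration, and a custom compositor class, which are omitted here.

```swift
import AVFoundation

// Hypothetical helper (not from the session): builds a video composition
// whose two instructions cover back-to-back time ranges and request
// metadata from tracks 4 and 5, as in the snippet above.
@available(macOS 12, iOS 15, tvOS 15, *)
func makeMetadataComposition(splitAt split: CMTime, end: CMTime) -> AVMutableVideoComposition {
    let instruction1 = AVMutableVideoCompositionInstruction()
    instruction1.timeRange = CMTimeRange(start: .zero, end: split)
    instruction1.requiredSourceSampleDataTrackIDs = [4]

    let instruction2 = AVMutableVideoCompositionInstruction()
    instruction2.timeRange = CMTimeRange(start: split, end: end)
    instruction2.requiredSourceSampleDataTrackIDs = [4, 5]

    let videoComposition = AVMutableVideoComposition()
    // The composition-level list covers every track any instruction uses.
    videoComposition.sourceSampleDataTrackIDs = [4, 5]
    videoComposition.instructions = [instruction1, instruction2]
    return videoComposition
}
```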

12:44 - Custom video composition with metadata: Compositing

    // This is an implementation of an AVVideoCompositing method:
    func startRequest(_ request: AVAsynchronousVideoCompositionRequest) {
        for trackID in request.sourceSampleDataTrackIDs {
            // Track IDs arrive as NSNumber; the lookup takes a CMPersistentTrackID (Int32).
            let metadata: AVTimedMetadataGroup? = request.sourceTimedMetadata(byTrackID: trackID.int32Value)
            // To get CMSampleBuffers instead, use sourceSampleBuffer(byTrackID:).
        }
        // Compose input video frames, using metadata, here.
        request.finish(withComposedVideoFrame: composedFrame)
    }
