Detect people, faces, and poses using Vision

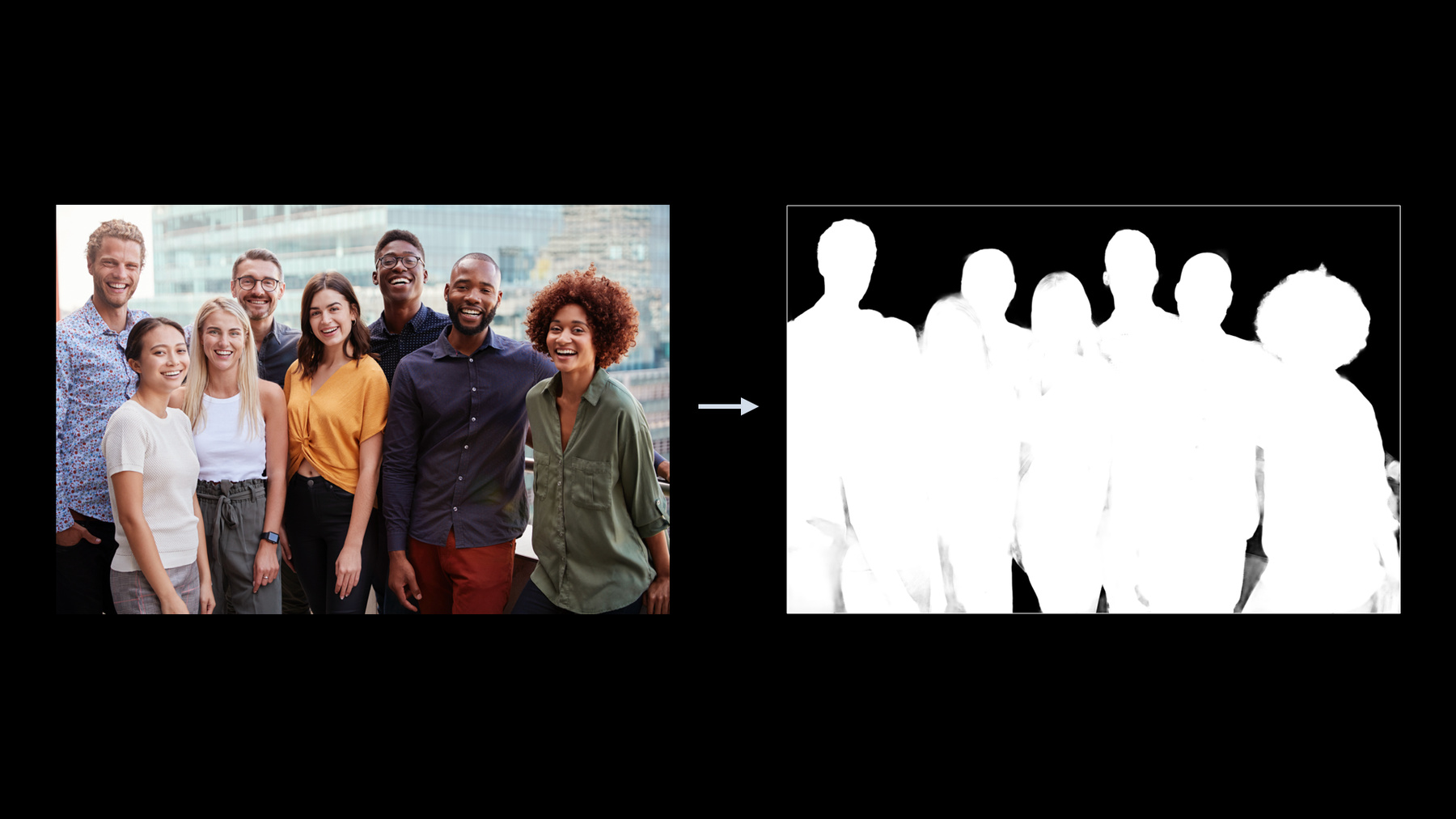

Discover the latest updates to the Vision framework that help your apps detect people, faces, and poses. Meet the Person Segmentation API, which helps your app separate people in images from their surroundings, and explore the latest continuous metrics for tracking the pitch, yaw, and roll of the human head. Learn how these capabilities can be combined with other APIs, such as Core Image, to deliver anything from simple virtual backgrounds to rich offline compositing in an image-editing app.

To get the most out of this session, and to learn more about people analysis, we recommend watching “Detect Body and Hand Pose with Vision” from WWDC20 and “Understanding Images in Vision Framework” from WWDC19.

8:13 - Get segmentation mask from an image

    import Vision

    // Create request
    let request = VNGeneratePersonSegmentationRequest()

    // Create request handler
    let requestHandler = VNImageRequestHandler(url: imageURL, options: options)

    // Process request
    try requestHandler.perform([request])

    // Review results
    let mask = request.results!.first!
    let maskBuffer = mask.pixelBuffer
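
The snippet above force-unwraps `request.results`, which crashes if the request produces no observation. As a minimal sketch of a more defensive variant, the hypothetical helper `personSegmentationMask(at:)` below (not part of Vision) returns `nil` instead. The empty options dictionary and the choice to log the error are assumptions made for illustration.

```swift
import Foundation
import Vision

/// Hypothetical helper, not a Vision API: returns the person-segmentation
/// mask for the image at `imageURL`, or nil if the request fails or
/// yields no observation.
@available(macOS 12.0, iOS 15.0, tvOS 15.0, *)
func personSegmentationMask(at imageURL: URL) -> CVPixelBuffer? {
    // Create the request and a handler for the image (no handler options assumed).
    let request = VNGeneratePersonSegmentationRequest()
    let handler = VNImageRequestHandler(url: imageURL, options: [:])

    do {
        // perform(_:) runs synchronously and throws on failure.
        try handler.perform([request])
    } catch {
        print("Person segmentation failed: \(error)")
        return nil
    }

    // Optional chaining replaces the force unwraps of the original snippet.
    return request.results?.first?.pixelBuffer
}
```

A caller can then write `guard let maskBuffer = personSegmentationMask(at: imageURL) else { return }` before handing the buffer to Core Image.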

8:33 - Configuring the segmentation request

    let request = VNGeneratePersonSegmentationRequest()
    request.revision = VNGeneratePersonSegmentationRequestRevision1
    request.qualityLevel = VNGeneratePersonSegmentationRequest.QualityLevel.accurate
    request.outputPixelFormat = kCVPixelFormatType_OneComponent8
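
Besides `.accurate`, the `QualityLevel` enum also offers `.balanced` and `.fast`. The sketch below shows one way to pick a level per workload. The `SegmentationUse` enum, the `makeSegmentationRequest(for:)` function, and the mapping from workload to quality level are all assumptions for illustration, not something the snippet above prescribes.

```swift
import Vision

/// Hypothetical workload categories, introduced only for this example.
enum SegmentationUse {
    case singlePhoto   // offline editing, quality matters most
    case video         // frame-by-frame processing
    case liveStream    // real-time capture, latency matters most
}

/// Hypothetical factory: builds a configured person-segmentation request.
/// The use-to-quality mapping is an assumed trade-off, not a documented rule.
@available(macOS 12.0, iOS 15.0, tvOS 15.0, *)
func makeSegmentationRequest(for use: SegmentationUse) -> VNGeneratePersonSegmentationRequest {
    let request = VNGeneratePersonSegmentationRequest()
    switch use {
    case .singlePhoto: request.qualityLevel = .accurate
    case .video:       request.qualityLevel = .balanced
    case .liveStream:  request.qualityLevel = .fast
    }
    // One 8-bit channel per pixel, as in the configuration shown above.
    request.outputPixelFormat = kCVPixelFormatType_OneComponent8
    return request
}
```

Keeping the configuration in one function makes it easy to measure each level on target hardware and adjust the mapping.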

12:24 - Applying a segmentation mask

    import CoreImage
    import CoreImage.CIFilterBuiltins

    let input = CIImage(contentsOf: imageUrl)!
    let mask = CIImage(cvPixelBuffer: maskBuffer)
    let background = CIImage(contentsOf: backgroundImageUrl)!

    // Scale the mask to the size of the input image
    let maskScaleX = input.extent.width / mask.extent.width
    let maskScaleY = input.extent.height / mask.extent.height
    let maskScaled = mask.transformed(by: CGAffineTransform(scaleX: maskScaleX, y: maskScaleY))

    // Scale the background to the size of the input image
    let backgroundScaleX = input.extent.width / background.extent.width
    let backgroundScaleY = input.extent.height / background.extent.height
    let backgroundScaled = background.transformed(by: CGAffineTransform(scaleX: backgroundScaleX, y: backgroundScaleY))

    // Blend the input over the background using the mask's red channel
    let blendFilter = CIFilter.blendWithRedMask()
    blendFilter.inputImage = input
    blendFilter.backgroundImage = backgroundScaled
    blendFilter.maskImage = maskScaled

    let blendedImage = blendFilter.outputImage
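
The same scaling arithmetic is applied twice above, once to the mask and once to the background. As a small sketch, it can be isolated in a helper. The name `scaleFactors(from:to:)` and the sizes used below are hypothetical, chosen only to illustrate the computation.

```swift
import Foundation

/// Hypothetical helper: per-axis factors that stretch an image of size
/// `source` so that it covers exactly the size `target`.
func scaleFactors(from source: CGSize, to target: CGSize) -> (x: CGFloat, y: CGFloat) {
    precondition(source.width > 0 && source.height > 0, "source size must be non-empty")
    return (target.width / source.width, target.height / source.height)
}

// Made-up sizes: a mask at half the resolution of the input image.
let inputSize = CGSize(width: 1920, height: 1080)
let maskSize = CGSize(width: 960, height: 540)

let maskScale = scaleFactors(from: maskSize, to: inputSize)
print("mask scale:", maskScale.x, maskScale.y)
```

Because the two axes are scaled independently, a background whose aspect ratio differs from the input's is stretched rather than cropped. An app that needs to preserve the aspect ratio could use a single factor for both axes and crop the overflow.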

14:37 - Segmentation from AVCapture

    private let photoOutput = AVCapturePhotoOutput()
    …
    if self.photoOutput.isPortraitEffectsMatteDeliverySupported {
        self.photoOutput.isPortraitEffectsMatteDeliveryEnabled = true
    }

    open class AVCapturePhoto {
        …
        var portraitEffectsMatte: AVPortraitEffectsMatte? { get } // nil if no people in the scene
        …
    }

14:58 - Segmentation in ARKit

    if ARWorldTrackingConfiguration.supportsFrameSemantics(.personSegmentationWithDepth) {
        // Proceed with getting Person Segmentation Mask
        …
    }

    open class ARFrame {
        …
        var segmentationBuffer: CVPixelBuffer? { get }
        …
    }

15:31 - Segmentation in CoreImage

    import CoreImage
    import CoreImage.CIFilterBuiltins

    let input = CIImage(contentsOf: imageUrl)!
    let segmentationFilter = CIFilter.personSegmentation()
    segmentationFilter.inputImage = input
    let mask = segmentationFilter.outputImage
