
Deploy machine learning and AI models on-device with Core ML

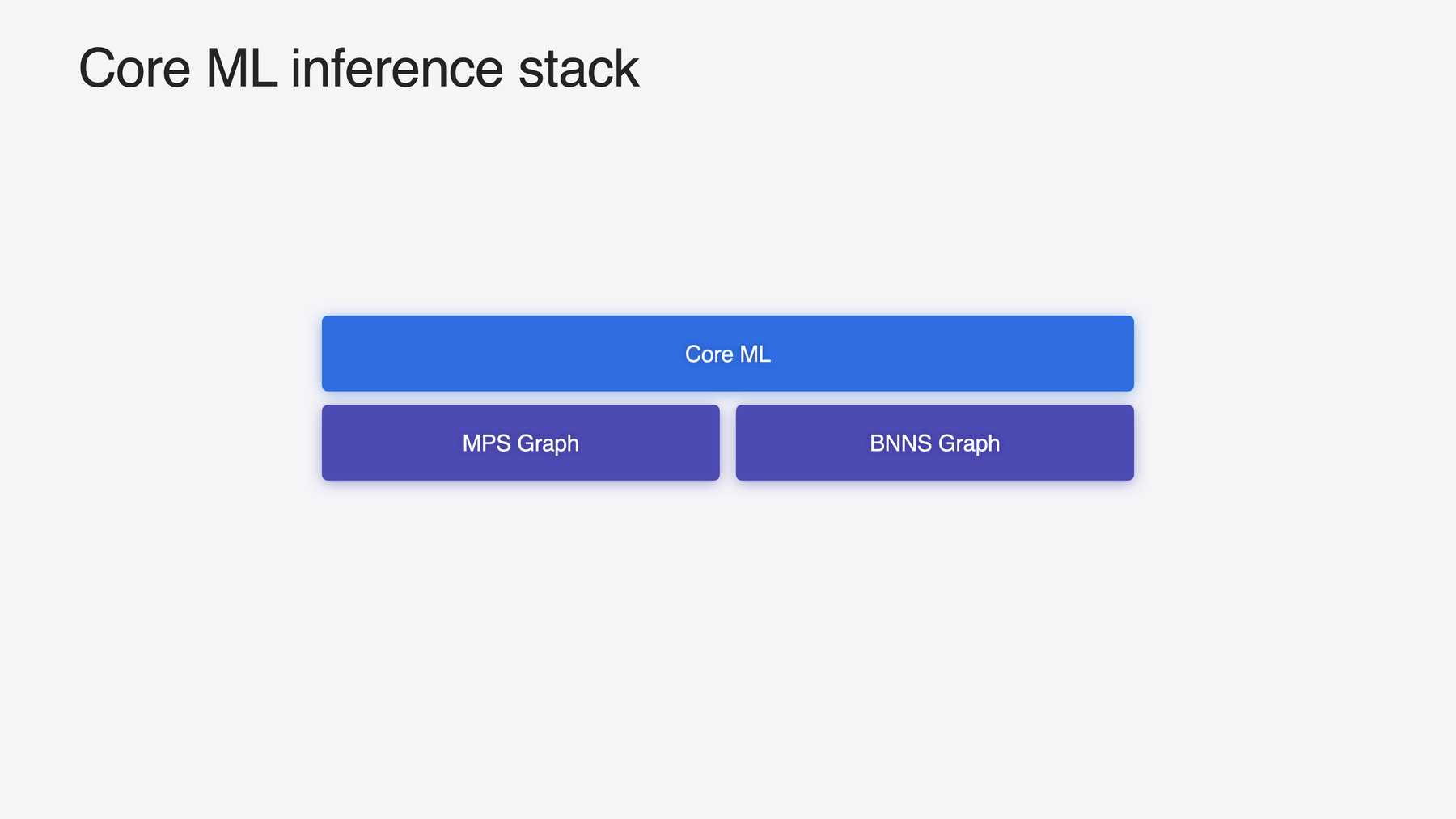

Learn new ways to optimize speed and memory performance when you convert and run machine learning and AI models through Core ML. We'll cover new options for model representations, performance insights, execution, and model stitching, which can be used together to create compelling and private on-device experiences.

Chapters

- 0:00 - Introduction

- 1:07 - Integration

- 3:29 - MLTensor

- 8:30 - Models with state

- 12:33 - Multifunction models

- 15:27 - Performance tools

Resources

Related videos

WWDC23
