Render Metal with passthrough in visionOS

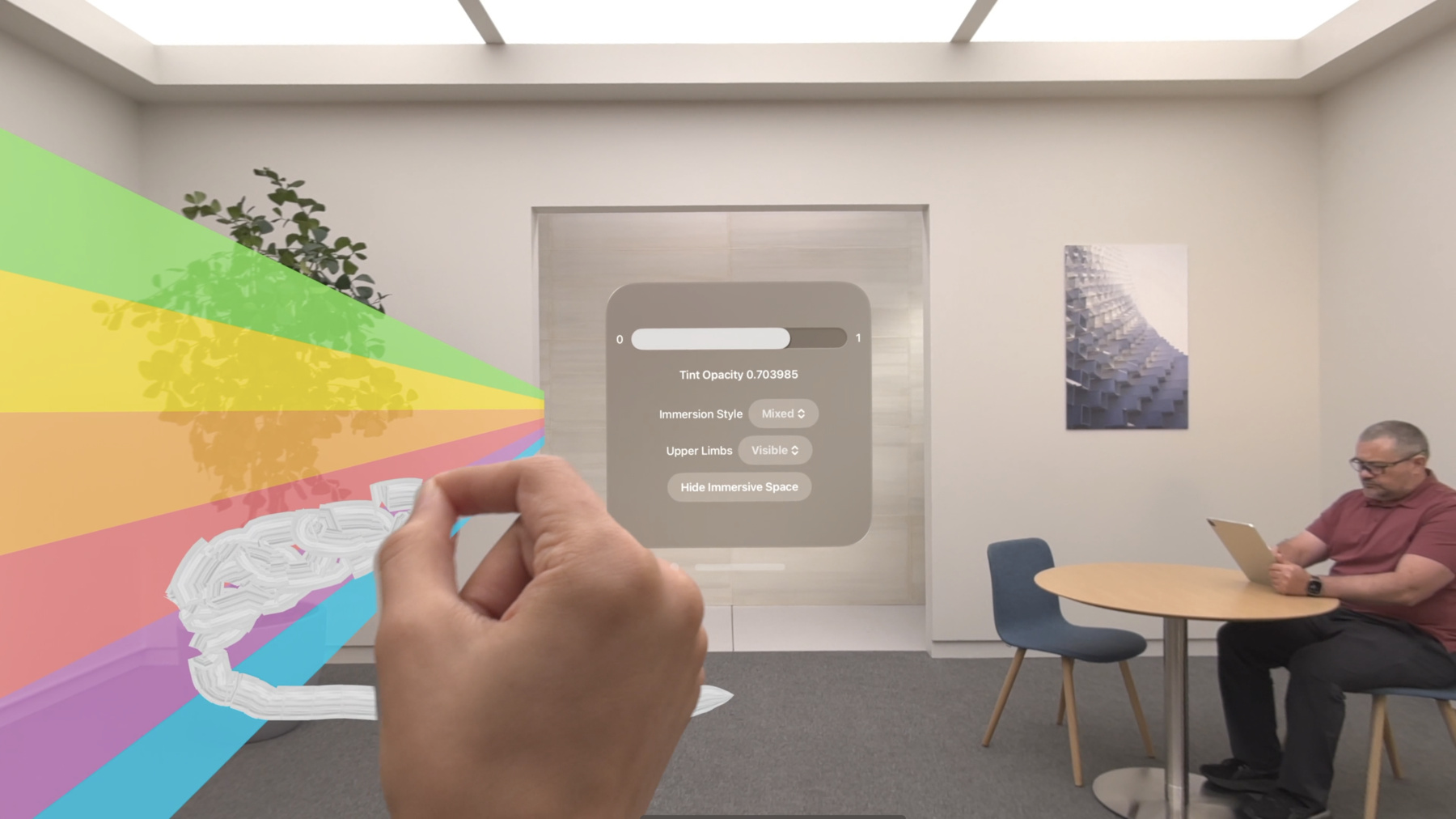

Get ready to extend your Metal experiences for visionOS. Learn best practices for integrating your rendered content with people's physical environments with passthrough. Find out how to position rendered content to match the physical world, reduce latency with trackable anchor prediction, and more.

Chapters

- 0:00 - Introduction

- 1:49 - Mix rendered content with surroundings

- 9:20 - Position rendered content

- 14:49 - Trackable anchor prediction

- 19:22 - Wrap-up

Resources

- Interacting with virtual content blended with passthrough

- Improving rendering performance with vertex amplification

- Rendering a scene with deferred lighting in Swift

- How to start designing assets in Display P3

- Forum: Graphics & Games

- Metal Developer Resources

- Rendering at different rasterization rates

3:07 - Add mixed immersion

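    import SwiftUI
    import CompositorServices

    @main
    struct MyApp: App {
        // `style` is not declared in the original snippet; a @State property is assumed here.
        @State private var style: ImmersionStyle = .mixed

        var body: some Scene {
            ImmersiveSpace {
                CompositorLayer(configuration: MyConfiguration()) { layerRenderer in
                    // my_engine_create and my_engine_render_loop are the app's own engine entry points.
                    let engine = my_engine_create(layerRenderer)
                    let renderThread = Thread {
                        my_engine_render_loop(engine)
                    }
                    renderThread.name = "Render Thread"
                    renderThread.start()
                }
            }
            // Scene modifier on the ImmersiveSpace: allow mixed and full immersion.
            .immersionStyle(selection: $style, in: .mixed, .full)
        }
    }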

4:43 - Create a renderPassDescriptor

    let renderPassDescriptor = MTLRenderPassDescriptor()

    // Color: clear to fully transparent black so untouched pixels show passthrough.
    renderPassDescriptor.colorAttachments[0].texture = drawable.colorTextures[0]
    renderPassDescriptor.colorAttachments[0].loadAction = .clear
    renderPassDescriptor.colorAttachments[0].storeAction = .store
    renderPassDescriptor.colorAttachments[0].clearColor = .init(red: 0.0, green: 0.0, blue: 0.0, alpha: 0.0)

    // Depth: clear to 0.0 (the far value when using reverse-Z depth).
    renderPassDescriptor.depthAttachment.texture = drawable.depthTextures[0]
    renderPassDescriptor.depthAttachment.loadAction = .clear
    renderPassDescriptor.depthAttachment.storeAction = .store
    renderPassDescriptor.depthAttachment.clearDepth = 0.0
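To see why the color attachment is cleared to zero alpha, it can help to model the blend with passthrough on the CPU. The following is a minimal sketch, assuming the compositor combines rendered pixels with passthrough using the standard premultiplied-alpha "over" operator. The `RGBA` type and `over` function are hypothetical names introduced for illustration and are not part of Metal or Compositor Services.

```swift
// Hypothetical RGBA color type for illustration only.
struct RGBA: Equatable {
    var r: Double, g: Double, b: Double, a: Double

    /// Premultiplied form: each color channel is scaled by alpha.
    var premultiplied: RGBA {
        RGBA(r: r * a, g: g * a, b: b * a, a: a)
    }
}

/// Premultiplied "over" operator (assumed blend): result = src + (1 - src.a) * dst.
func over(_ src: RGBA, _ dst: RGBA) -> RGBA {
    let k = 1.0 - src.a
    return RGBA(r: src.r + k * dst.r,
                g: src.g + k * dst.g,
                b: src.b + k * dst.b,
                a: src.a + k * dst.a)
}

// A stand-in passthrough pixel, fully opaque.
let passthrough = RGBA(r: 0.2, g: 0.4, b: 0.6, a: 1.0)

// A pixel left at the clear color (0, 0, 0, 0).
let clearPixel = RGBA(r: 0, g: 0, b: 0, a: 0)

// A half-transparent red pixel, converted to premultiplied form before blending.
let halfRed = RGBA(r: 1, g: 0, b: 0, a: 0.5).premultiplied

let untouched = over(clearPixel, passthrough)  // passthrough shows through unchanged
let blended = over(halfRed, passthrough)       // red tinted over passthrough
```

Under this model, a pixel that was never drawn leaves the passthrough value unchanged, which is the behavior the zero-alpha clear color is meant to produce.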

9:08 - Set Upper Limb Visibility

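    import SwiftUI
    import CompositorServices

    @main
    struct MyApp: App {
        // `style` is not declared in the original snippet; a @State property is assumed here.
        @State private var style: ImmersionStyle = .mixed

        var body: some Scene {
            ImmersiveSpace {
                CompositorLayer(configuration: MyConfiguration()) { layerRenderer in
                    let engine = my_engine_create(layerRenderer)
                    let renderThread = Thread {
                        my_engine_render_loop(engine)
                    }
                    renderThread.name = "Render Thread"
                    renderThread.start()
                }
            }
            .immersionStyle(selection: $style, in: .mixed, .full)
            // Let the system decide when hands and arms show over rendered content.
            .upperLimbVisibility(.automatic)
        }
    }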

13:37 - Compose a projection view matrix

    func renderLoop() {
        //...
        let deviceAnchor = worldTracking.queryDeviceAnchor(atTimestamp: presentationTime)
        drawable.deviceAnchor = deviceAnchor

        // Half-open range: valid view indices run from 0 to count - 1.
        for viewIndex in 0..<drawable.views.count {
            let view = drawable.views[viewIndex]
            // Fall back to identity when no device anchor is available.
            let originFromDevice = deviceAnchor?.originFromAnchorTransform ?? matrix_identity_float4x4
            let deviceFromView = view.transform
            // The view matrix maps world space into this view's space.
            let viewMatrix = (originFromDevice * deviceFromView).inverse
            let projection = drawable.computeProjection(normalizedDeviceCoordinatesConvention: .rightUpBack,
                                                        viewIndex: viewIndex)
            let projectionViewMatrix = projection * viewMatrix
            //...
        }
    }
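The matrix composition in this snippet can be checked in isolation with plain `simd` types. This is a small sketch under stated assumptions: the `translation` helper and the numeric offsets are made up for illustration, and the two matrices only stand in for `deviceAnchor.originFromAnchorTransform` and `view.transform`.

```swift
import simd

/// Hypothetical helper for this sketch: a translation-only transform.
func translation(_ x: Float, _ y: Float, _ z: Float) -> simd_float4x4 {
    var m = matrix_identity_float4x4
    m.columns.3 = SIMD4<Float>(x, y, z, 1)
    return m
}

// Stand-in for deviceAnchor.originFromAnchorTransform: device 1.5 m above the origin.
let originFromDevice = translation(0, 1.5, 0)

// Stand-in for view.transform: one eye offset 3 cm to the right of the device.
let deviceFromView = translation(0.03, 0, 0)

// originFromView places the eye in world space; its inverse is the view matrix.
let originFromView = originFromDevice * deviceFromView
let viewMatrix = originFromView.inverse

// The eye's world-space position should map to the view-space origin.
let eyeInWorld = SIMD4<Float>(0.03, 1.5, 0, 1)
let eyeInView = viewMatrix * eyeInWorld
```

Reading the names right to left helps keep the order straight: `originFromDevice * deviceFromView` yields `originFromView`, and inverting it gives the world-to-view transform that the projection matrix is multiplied with.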

18:27 - Trackable anchor prediction

    func renderFrame() {
        //...
        // Get the presentation time and the trackable anchor time.
        let presentationTime = drawable.frameTiming.presentationTime
        let trackableAnchorTime = drawable.frameTiming.trackableAnchorTime

        // Convert the timestamps into units of seconds.
        // `timeInterval` is assumed to be a helper extension on the clock's Duration; it is not shown in this snippet.
        let devicePredictionTime = LayerRenderer.Clock.Instant.epoch.duration(to: presentationTime).timeInterval
        let anchorPredictionTime = LayerRenderer.Clock.Instant.epoch.duration(to: trackableAnchorTime).timeInterval

        let deviceAnchor = worldTracking.queryDeviceAnchor(atTimestamp: devicePredictionTime)
        // handAnchors(at:) is assumed to return a (leftHand, rightHand) pair of optional anchors.
        let (leftAnchor, _) = handTracking.handAnchors(at: anchorPredictionTime)

        if let leftAnchor, leftAnchor.isTracked {
            //...
        }
    }
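The conversion from clock instants to seconds relies on a `timeInterval` property that the snippet does not define. As a rough sketch of what such a helper might look like, the example below extends Swift's standard `Duration` type (Swift 5.7 or later). The name `secondsAsDouble` is hypothetical, `ContinuousClock` stands in for `LayerRenderer.Clock`, and the 1500 ms and 1480 ms offsets are arbitrary values chosen for illustration.

```swift
extension Duration {
    /// Hypothetical helper: this duration expressed in seconds as a Double.
    var secondsAsDouble: Double {
        let (seconds, attoseconds) = components
        // One attosecond is 1e-18 seconds.
        return Double(seconds) + Double(attoseconds) * 1e-18
    }
}

// ContinuousClock stands in for the renderer's clock in this sketch.
let epoch = ContinuousClock.now

// Two made-up future instants, mimicking presentationTime and trackableAnchorTime.
let presentationTime = epoch.advanced(by: .milliseconds(1500))
let trackableAnchorTime = epoch.advanced(by: .milliseconds(1480))

// Same pattern as the snippet: measure from the epoch, then convert to seconds.
let devicePredictionTime = epoch.duration(to: presentationTime).secondsAsDouble
let anchorPredictionTime = epoch.duration(to: trackableAnchorTime).secondsAsDouble
```

Keeping the two prediction times as separate values matters because the device anchor and the trackable anchors are queried at different timestamps in the snippet.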