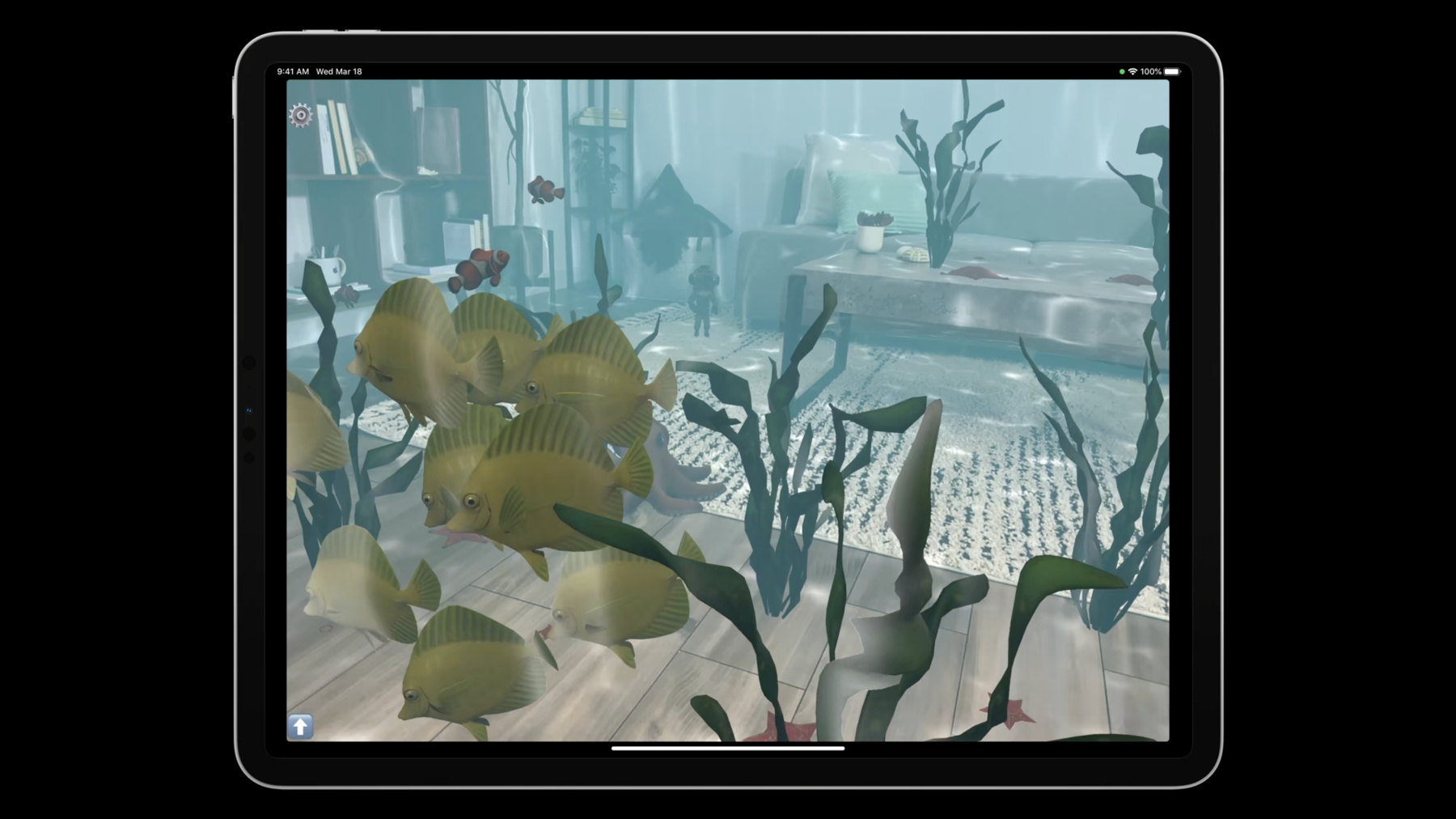

Dive into RealityKit 2

Creating engaging AR experiences has never been easier with RealityKit 2. Explore the latest enhancements to the RealityKit framework and take a deep dive into this underwater sample project. We'll take you through the improved Entity Component System, streamlined animation pipeline, and the plug-and-play character controller with enhancements to face mesh and audio.

Resources

- Creating an App for Face-Painting in AR

- Building an immersive experience with RealityKit

- Applying realistic material and lighting effects to entities

- PhysicallyBasedMaterial

- Explore the RealityKit Developer Forums

- Creating a fog effect using scene depth

- RealityKit

7:10 - FlockingSystem

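    class FlockingSystem: RealityKit.System {

        required init(scene: RealityKit.Scene) { }

        // Run this system before MotionSystem on every frame.
        static var dependencies: [SystemDependency] { [.before(MotionSystem.self)] }

        // Matches only entities that carry all three components.
        private static let query = EntityQuery(where: .has(FlockingComponent.self)
                                                && .has(MotionComponent.self)
                                                && .has(SettingsComponent.self))

        // update(context:) is shown in the next snippet.
    }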

8:34 - FlockingSystem.update

    // Member of FlockingSystem.
    func update(context: SceneUpdateContext) {
        context.scene.performQuery(Self.query).forEach { entity in
            // Inside a forEach closure, `return` skips to the next entity.
            guard var motion: MotionComponent = entity.components[MotionComponent.self] else { return }

            // ... Using a Boids simulation, add forces to the MotionComponent
            motion.forces.append(/* separation, cohesion, alignment forces */)

            // Components are value types, so write the modified copy back.
            entity.components[MotionComponent.self] = motion
        }
    }
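The snippet above leaves the Boids forces as a placeholder comment. As a rough illustration only, the three classic terms (separation, cohesion, alignment) might be computed as in the sketch below. It is not the sample project's implementation: `Boid`, `magnitude`, `flockingForce`, the neighbor radius, and every weight except the `1.6` separation default are hypothetical, and the code uses only the standard library's `SIMD3` rather than RealityKit types.

```swift
// Hypothetical stand-in for an entity's flocking state; not a RealityKit type.
struct Boid {
    var position: SIMD3<Float>
    var velocity: SIMD3<Float>
}

// Euclidean length using only the standard library's SIMD support.
func magnitude(_ v: SIMD3<Float>) -> Float {
    (v * v).sum().squareRoot()
}

// Combined steering force on boids[index] from neighbors within neighborRadius.
// All parameter names and defaults here are assumptions for illustration.
func flockingForce(on index: Int,
                   in boids: [Boid],
                   neighborRadius: Float = 1.0,
                   separationWeight: Float = 1.6,
                   cohesionWeight: Float = 1.0,
                   alignmentWeight: Float = 1.0) -> SIMD3<Float> {
    let me = boids[index]
    var separation = SIMD3<Float>(repeating: 0)
    var positionSum = SIMD3<Float>(repeating: 0)
    var velocitySum = SIMD3<Float>(repeating: 0)
    var count: Float = 0

    for (i, other) in boids.enumerated() where i != index {
        let offset = me.position - other.position
        let distance = magnitude(offset)
        guard distance > 0, distance < neighborRadius else { continue }
        // Separation: push away from each neighbor, more strongly when closer.
        separation += offset / (distance * distance)
        positionSum += other.position
        velocitySum += other.velocity
        count += 1
    }
    guard count > 0 else { return .zero }

    // Cohesion: steer toward the neighbors' average position.
    let cohesion = positionSum / count - me.position
    // Alignment: steer toward the neighbors' average velocity.
    let alignment = velocitySum / count - me.velocity

    return separationWeight * separation
         + cohesionWeight * cohesion
         + alignmentWeight * alignment
}
```

In a system like the one above, the returned vector would be what gets appended to `motion.forces`, with the weights read from the entity's settings rather than passed as defaults.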

11:58 - Store Subscription While Entity Active

    arView.scene.subscribe(to: CollisionEvents.Began.self, on: fish) { [weak self] event in
        // ... handle collisions with this particular fish
    }.storeWhileEntityActive(fish)

12:36 - SwiftUI + RealityKit Settings Instance

    class Settings: ObservableObject {
        @Published var separationWeight: Float = 1.6
        // ...
    }

    struct ContentView : View {
        @StateObject var settings = Settings()

        var body: some View {
            ZStack {
                ARViewContainer(settings: settings)
                MovementSettingsView()
                    .environmentObject(settings)
            }
        }
    }

    struct SettingsComponent: RealityKit.Component {
        var settings: Settings
    }

    class UnderwaterView: ARView {
        let settings: Settings

        private func addEntity(_ entity: Entity) {
            entity.components[SettingsComponent.self] = SettingsComponent(settings: self.settings)
        }
    }
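One way to read the snippet above: `Settings` is a class, so each `SettingsComponent` holds a reference to the same instance, and a change made through the UI is visible to every entity that carries the component. The sketch below shows that sharing behavior in plain Swift. The types are simplified, hypothetical stand-ins (no `ObservableObject` or `RealityKit.Component` conformance), and `fishA` and `fishB` are made-up names.

```swift
// Simplified stand-ins for the types in the snippet above (assumptions:
// the real Settings is an ObservableObject, the real component conforms
// to RealityKit.Component).
final class Settings {
    var separationWeight: Float = 1.6
}

struct SettingsComponent {
    var settings: Settings
}

let shared = Settings()
let fishA = SettingsComponent(settings: shared)
let fishB = SettingsComponent(settings: shared)

// A UI control changes the single shared instance...
shared.separationWeight = 2.5

// ...and both components see the new value, because only the reference
// was copied into each struct.
print(fishA.settings.separationWeight, fishB.settings.separationWeight)
// prints "2.5 2.5"
```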

21:26 - FaceMesh

    static let sceneUnderstandingQuery =
        EntityQuery(where: .has(SceneUnderstandingComponent.self) && .has(ModelComponent.self))

    func findFaceEntity(scene: RealityKit.Scene) -> HasModel? {
        let faceEntity = scene.performQuery(sceneUnderstandingQuery).first {
            $0.components[SceneUnderstandingComponent.self]?.entityType == .face
        }
        return faceEntity as? HasModel
    }

22:03 - FaceMesh - Painting material

    func updateFaceEntityTextureUsing(cgImage: CGImage) {
        guard let faceEntity = self.faceEntity else { return }

        guard let faceTexture = try? TextureResource.generate(from: cgImage,
                                                              options: .init(semantic: .color))
        else { return }

        var faceMaterial = PhysicallyBasedMaterial()
        faceMaterial.roughness = 0.1
        faceMaterial.metallic = 1.0
        faceMaterial.blending = .transparent(opacity: .init(scale: 1.0))

        let sparklyNormalMap = try! TextureResource.load(named: "sparkly")
        faceMaterial.normal.texture = PhysicallyBasedMaterial.Texture.init(sparklyNormalMap)
        faceMaterial.baseColor.texture = PhysicallyBasedMaterial.Texture.init(faceTexture)

        faceEntity.model!.materials = [faceMaterial]
    }

23:09 - AudioBufferResource

    let synthesizer = AVSpeechSynthesizer()

    func speakText(_ text: String, forEntity entity: Entity) {
        let utterance = AVSpeechUtterance(string: text)
        utterance.voice = AVSpeechSynthesisVoice(language: "en-IE")

        synthesizer.write(utterance) { audioBuffer in
            guard let audioResource = try? AudioBufferResource(buffer: audioBuffer,
                                                               inputMode: .spatial,
                                                               shouldLoop: true)
            else { return }

            entity.playAudio(audioResource)
        }
    }
