Expanding the Sensory Experience with Core Haptics

Core Haptics lets you design your own haptics with synchronized audio on iPhone. In this two part session, learn essential sound and haptic design principles and concepts for creating meaningful and delightful experiences that engage a wider range of human senses. Discover how to combine visuals, audio and haptics, using the Taptic Engine, to add a new level of realism and improve feedback in your app or game. Understand how to create and play back content, and where Core Haptics fits in with other audio and haptic APIs.

Resources

- Updating Continuous and Transient Haptic Parameters in Real Time

- Playing a Custom Haptic Pattern from a File

- Playing Collision-Based Haptic Patterns

- Core Haptics

- Human Interface Guidelines: Playing haptics

- Apple Design Site

- Presentation Slides (PDF)

Welcome.

Sound has long been an incredible part of creating a truly great app. Whether that's creating an atmospheric sound bed for your games or drawing attention to an important alert or notification. The addition of haptics adds a whole new dimension to this experience -- touch.

Today's session is a story in two parts, design and development. And first, I'd like to start by introducing Hugo and Camille and ask them to come to the stage for their insights and guidance on designing great haptic experiences for your apps.

I'm sure you're familiar with that sound.

It's been part of our life for years. In 2019 though, I think we can do better. I'm Camille Moussette, interaction designer on the Apple design team. And I'm Hugo Verweij, sound designer on the design team.

This session is about designing great audio haptic experiences. Our goal with this talk is to inspire you and leave you with practical ideas about how to design great sound and haptics that, when used together in the right way, can bring a new dimension to your app.

During the next 30 minutes, we'll talk about three things. First, we'll introduce what is an audio haptic experience.

Then we'll look at three guiding principles to help you design those great experiences.

Lastly, we'll look at different techniques and practical tips to make those experiences great and truly compelling.

So, what is an audio haptic experience? Well, let's start by listening to a sound.

Okay. Now let's lower this sound. What happens if I lower it even further? Whoa. It's so low I can't hear it anymore. You know, our ears just don't register it anymore. But if you put your finger on the speaker, you could still feel it move back and forth. We designed the Taptic Engine specifically to play these low frequencies that you can only feel.

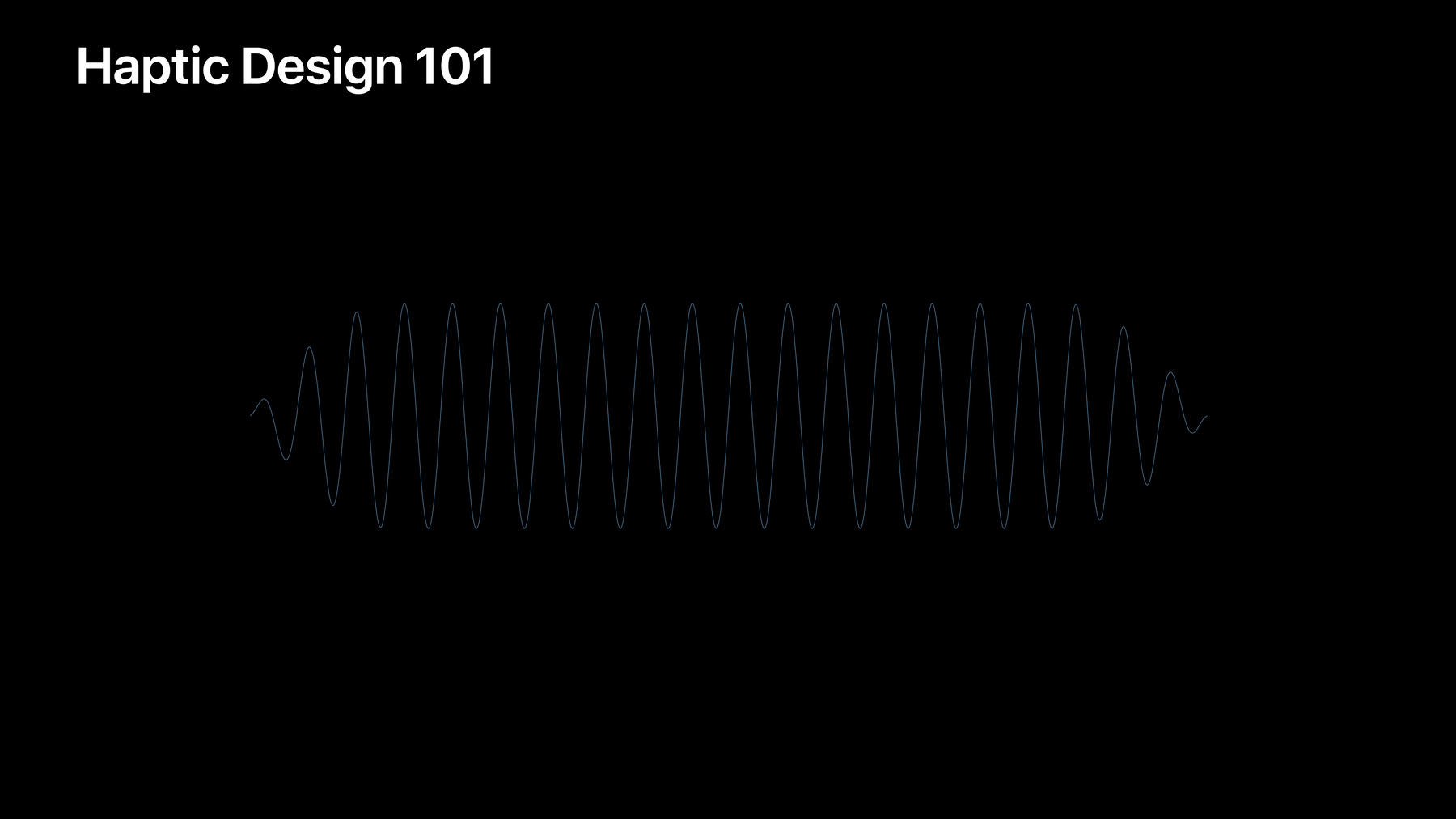

Here it is in the iPhone and next to it the speaker module. The haptic sensations from the Taptic Engine are synchronized to the sounds coming from the speaker, and the result is what we call an audio haptic experience. But haptic sensations are meant to be felt, so because we are presenting this on stage and on screen, we need your help in imagining what this would feel like. We'll do our best to help you by visualizing the haptics like this or by playing a sound that resembles the haptic, like this.

We will also visualize these experiences on the timeline, and Camille will tell you some more about how that's done in a quick haptics design primer.

iOS 13 introduced a new API for designing your own custom haptics. It's called Core Haptics. This new API allows you, as developers, to make full use of the Taptic Engine in iPhone.

The Taptic Engine is capable of rendering a wide range of experiences and can generate a custom vibration like this. It looks like this, and it should sound and feel like this. So, as you've seen, we're using a waveform and sound to represent haptics. As Hugo said, you need to imagine this in your hand as a silent experience. This should be felt and not heard. So, we can play these continuous experiences.

We can also have something that's shorter and more compact. It's a single cycle, and we call this experience a transient.

It's much more momentary, and it feels like an impact or a strike or a tap. Very momentary and then we actually can refine it further. Moving forward, we'll use basic shapes to represent haptics in different patterns. So, our transient becomes a simple rectangle, and because our Taptic Engine is an exceptional piece of haptic engineering, we can modulate the experience in different ways.

First, we can modulate the intensity or the amplitude. We can also make it feel more round or soft.

At the other extreme, we can make it more precise and crisp.

So this experience is possible with the Taptic Engine.

So, in the end, this completes our quick introduction to haptic design and what Core Haptics API is all about.

We have intensity, which you can modulate, and another design dimension, haptic sharpness. You are in control of both for two types of events, continuous and transient. Now, let's look at the three guiding principles that we want to share with you today. First is causality.

Then we have harmony and lastly we have utility.

These concepts or approaches are used throughout the work that we do at Apple, and we think they can help you as well in your own app experience. For each of them, we'll look at the concept and explain through a few examples. Let's get started.

Causality.

Causality means that for feedback to be useful, it must be obvious what caused it. So, imagine being a soccer player kicking this ball. What would the experience feel like? There is a clear, obvious relationship between the cause, the foot colliding with the ball, and the effect, the sound of the impact and the feel of the impact.

Now, what this experience sounds and feels like is determined by the qualities of the interacting objects, the material of the shoe, the material of the ball. And then the dynamics of the action. Is it a hard kick or a soft kick? And the environment, the acoustics of the stadium or the soccer field.

Because we are so familiar with these things, it would not make sense at all to use a sound that is very different. Let's try it out and take it way over the top.

Very strange, that doesn't really work.

Now when designing sounds for your experiences, think about what it would feel and sound like if what you interact with would be a physical object. As an example, let's look at the Apple Pay confirmation.

We wanted the sound and the haptics to perfectly match the animation on screen, the checkmark. So, where do we start? Well, what sounds do you associate with making a payment? What does money sound like? And what is the interaction of making a payment using Apple Pay? And of course we have to look at that animation of the checkmark on screen.

It should feel positive, like confirming a successful transaction. Here are a few examples of sounds that were candidates for this confirmation.

This is the first one [beeping sound]. This one is very pleasant, but it sounded a bit too happy and frivolous.

Now the next one worked really well with the checkmark of the animation.

But we felt that its character wasn't really right. It was a little too harsh.

And then there is this one, the one that we chose in the end and that you all know.

It's not too serious, and it's clearly a confirmation [beeping sound]. Okay, so we've got our sound. Now, onto the haptics.

Our first idea was to mimic the waveform of the sound because it matches perfectly, but after some experimentation, we found that two simple taps actually did a better job. I like to see these as little mini compositions where we have two instruments, one that you can hear and one that you can feel, the haptics.

They don't always necessarily have to play the same thing, but they do have to play in the same tempo.

Here they are together. Notice the lower sound indicating the haptics [beeping sound]. Okay, and this is then the final experience with the animation. Again, imagine feeling the taps in your hand when you pay.

Next, let's look at our second guiding principle, harmony.

Harmony means that things should feel the way they look and the way they sound. In the real world, audio, haptics, and visuals are naturally in harmony because of the clear cause and effect relationship. In the digital world though, we have to do this work manually. New experiences are created in an additive process. The input and output need to be specifically designed by you, the developer. Let's start with creating a simple interface with the visual. We have a simple sphere dropping and colliding with the bottom of the screen. Next, let's add audio feedback.

Now, we choose a sound that corresponds to the physical impact or the bounce of the sphere.

It needs to be short and precise and clear, but we also modulate the amplitude based on the velocity of the hit. Now, let's do additional work and introduce the third sense, haptic feedback. So imagine feeling that hit in your hand.

Again, we're trying to design in harmony the sphere hitting the bottom of the screen, so we choose a transient event with high sharpness. We also modulate the intensity to match the velocity of the bounce. We're not done yet. Because it's really important to think about synchronization between the three senses.

That's where the magic happens. That's where the illusion of the real ball colliding with the wall takes shape.

So here's an example where we broke the rules, and we introduced latency between the visual and the rest of the feedback.

It's clearly broken, and the illusion of a real bouncing ball is completely gone. So, harmony requires great care and attention from you, but when done well, it can create very delightful and magical experiences.

Let's look at harmony between interaction, visuals, audio, and haptics in terms of qualities and overall behaviors. We'll look at a simple green dot on screen that will animate and think through what kind of audio, what kind of haptics makes sense with that green dot. So if we add a snappy pop or a different pulse, what kind of audio, what kind of haptics work with these visuals? What if we have a large object on screen? Does it sound different? Does it feel different than a tiny little dot? If we add different dynamic behavior, a different energy level, a pressing, pulsating dot that really calls for attention might want a different sound, different haptics. And lastly, something that feels calm or like a heartbeat warrants a different type of feedback. So, think about the pace, the energy level, and the different qualities that you're trying to convey in your app. Design feedback that tells a consistent and unified story.

Now I'll illustrate how the harmony principle helped us with designing sound and haptics for the Apple Watch crown. We were all used to our phones and the old school vibes coming from them. When the Apple Watch came out as the first device with a Taptic Engine, it was the first device that could precisely synchronize sound and haptics.

Now for Series 4, haptics and a very subtle sound were added to the rotation of the crown. Remember the sharp and precise haptic that Camille described earlier? That was the one that we used for the crown.

But it was scaled down to match the small size of the crown, and so the haptics are felt in the finger touching the crown rather than on the wrist. For sound, we looked at the world of traditional watch making for inspiration.

We listened to and recorded all kinds of different watches, some of which sounded quite remarkable, like this one. And then there are other physical mechanical objects in the real world with a similar sound, like bicycle hubs. We wanted to find a sound that would feel natural coming from a device like this.

We took these sounds as inspiration before we started crafting our own. And then this is the result. On your wrist, it sounds very quiet, just like you would expect coming from a watch. The perfect coordination between sound and haptics creates the illusion of a mechanical crown, and then to match this mechanical sensation, our motion team changed the animation so it snaps to the sound and the haptics when using the crown.

Let's look at that [clicking sound]. And I'll play it again. Look at the crown visualizing the haptics.

The result is a precise mechanical feel, which is in perfect harmony with what you see and what you hear.

Next, let's look at our third guiding principle, utility.

Utility is about adding audio and haptic feedback only when you can provide clear value and benefit to your app experience. Use moderation. Don't add sound and haptics just because you can. Let's look at a simple ARKit app that we made to illustrate this point. In this app, we place a virtual timer in the environment, and then the interaction is dependent on the distance to that virtual timer. Let's look at the video first.

So, in this app, we purposely designed audio and haptic feedback to complement the AR interaction and the most significant part of the user experience, meaning that moving closer to the timer or moving away from the timer modulates the audio haptic experience. The three senses are coherent and unified. We refrained from adding other sound effects or haptic feedback for interacting with the different elements or for other minor interactions in the app. It's often a good idea not to add sound and haptics. So, start by identifying possible locations in your app for audio haptic feedback, and then focus only on the elements where it can enhance the experience or communicate something important.

And then, are you tempted to add more? But maybe don't. It will overwhelm people, and it will diminish the value of what's really important. So, to recap, here are the guiding principles one more time.

We spoke about Causality, how it can help to think about what makes the sound and what causes the haptics.

About Harmony, how sound, haptics, and visuals work together in creating a great experience.

And Utility, looking at the experience from the point of view of the human using your app.

Next, let's look at the techniques and practical tips that we can use with these three guiding principles to create great audio haptic experiences. First, a small recap about the primitives available in Core Haptics. We have two building blocks that you can work with. The first one is called transient, and it is the sharp, compact haptic experience that you can feel like a tap or a strike. The second one is a continuous haptic experience that extends over time. You can specify how long it should last.

For transient, there are two design dimensions that are available and under your control. We have haptic intensity, and we have haptic sharpness to create something more round or soft at the lower value and something more precise, mechanical, and crisp at the upper bound.

Intensity changes the amplitude of the experience as expected. For continuous, we have the same two design dimensions. We have sharpness and intensity, and we're able to create more organic or rumble-like experiences that extend over time or something that is more precise and more mechanical at the upper values of sharpness. So there are many more details and capabilities in the Core Haptics API. Be sure to check out the online documentation. Now, when designing sounds, keep in mind what will work best with these haptics.

For a sharp transient, a chime with a sharp attack will probably work really well. But if we have a sound that's much smoother, using those same haptics is probably not such a good idea. So, for something like this, a continuous haptic ramping up and down probably works better.
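As a rough sketch of what a continuous haptic ramping up and down could look like in Core Haptics, the following builds one continuous event and shapes its intensity with a parameter curve. The function name `makeSwellPattern`, the one-second duration, and the sharpness and control-point values are illustrative assumptions, not values from the session.

```swift
import CoreHaptics

/// Builds a continuous haptic whose intensity ramps up and then back down.
/// The duration and all parameter values here are illustrative assumptions.
func makeSwellPattern(duration: TimeInterval = 1.0) throws -> CHHapticPattern {
    // A soft (low-sharpness) continuous event lasting `duration` seconds.
    let swell = CHHapticEvent(
        eventType: .hapticContinuous,
        parameters: [
            CHHapticEventParameter(parameterID: .hapticIntensity, value: 1.0),
            CHHapticEventParameter(parameterID: .hapticSharpness, value: 0.2)
        ],
        relativeTime: 0,
        duration: duration)

    // Scale the intensity over time: off, full strength at the midpoint, off again.
    let ramp = CHHapticParameterCurve(
        parameterID: .hapticIntensityControl,
        controlPoints: [
            CHHapticParameterCurve.ControlPoint(relativeTime: 0, value: 0),
            CHHapticParameterCurve.ControlPoint(relativeTime: duration / 2, value: 1),
            CHHapticParameterCurve.ControlPoint(relativeTime: duration, value: 0)
        ],
        relativeTime: 0)

    return try CHHapticPattern(events: [swell], parameterCurves: [ramp])
}
```

A smoother sound would then be paired with this pattern rather than with a single sharp transient.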

But these are not hard rules. There is a lot of room to experiment, and sometimes you may find out that the opposite of what you thought would work is actually better, and this was the case for the Apple Watch alarm, that sounds like this.

For a sound like this, you may want to add a haptic like this because they pair together perfectly. But can we make it better? Can we keep experimenting? Maybe flip it around and change the timing? This creates anticipation by ramping up the haptics and then quickly cutting it off and playing the sound. There is a clear action and reaction, and the sound plays as an answer to the haptic. This worked really well for the Apple Watch alarm.

Next, it's pretty common to have a number of events back to back to convey a different type of experience.

In this case, we have four transient events, and we noticed that when we present this to different people, they don't necessarily feel the first one. The first one is a ghost haptic. So the sequence of four taps is actually reported as a triple tap only.

This could be a problem or an opportunity.

We could use this ghost effect, where the first tap is not completely perceived, as a priming effect. Let's look at the example of a third-party alert on watchOS. This is the sound and the haptics of that third-party notification.

So this is a really important notification that we want to make sure that the user perceived and acknowledged clearly. So in this case, we use our ghost effect or a primer in this case to wake up the skin and make sure that it's completely ready to feel what's to come.

Let's listen and feel it.

So, in this case, we have clear presentation and recognition of our main notification experience. Next, we can also create contrast between very similar experiences. Here is the sound for the left navigation cue on watchOS.

It sounds like this. With our harmony guiding principle, we end up with really nice haptics that pair very well with that sound. So we have a series of double strikes that sound and feel like this.

Now if we look at the right cue, we have a similar sound but slightly different.

So we can notice the little difference between the left and the right on the audio, but if we continue to follow our harmony principle, with the haptics we end up with an identical pattern between the left and the right experience.

In this case, we want to add haptics. We double up the haptics from the double strikes, and then we have true contrast between left and right.

Let's listen to and feel this experience.

Again, we have contrast between the left and the right for very similar audio experience.

So, by now you have quite a few tools to create your own experiences. We would like to show you one more example illustrating the points we made. This is a full screen effect in Messages. The sound and haptics are perfectly synchronized to the animation, and it's a delightful moment for a special occasion. Let's look at it one more time.

Now, if you haven't yet, I encourage you to try this out on your own iPhone to experience the haptics yourself. And now, a few more thoughts to consider in addition to the guiding principles that we shared.

The best results come when sound, haptics, and visuals are designed hand in hand.

Are you an animator? Collaborate with the sound or interaction designer and vice versa. It's the best way to come to a unified experience.

Imagine using your own app for the very first time.

What would you like it to sound like or feel like? And then imagine using it a hundred times more.

Does it still help you to hear and feel these things or are you overwhelmed? Experience it and take away all the things that don't feel compelling or that are not useful. And don't be afraid to experiment. Try things out. Prototype.

We've seen that you may just come across something amazing by trying something new.

We're looking forward to seeing, hearing, and feeling what you will come up with in your own apps.

See this URL for more information. Thank you very much.

All right. Thank you Hugo and Camille. So, now that we have a better idea of how to design haptic experiences for our apps, let's understand how we're going to take these principles and put them into practice in code. And to do that, Michael and Doug are going to walk through how we can take advantage of the new Core Haptics APIs. And to start that off, I'd like to introduce Michael to the stage.

Good evening. I'm Michael Diu from the interactive haptics team, and I'm looking forward to sharing the many advances in haptics in iOS 13 with you. Let's take a look at our agenda. First, we'll find out where we can use Core Haptics, how it fits in with other audio and haptic APIs.

We'll talk about the two groups of classes in the API and the basic dimensions and descriptors that we will use to describe our haptics and audio content. We're going to walk through the basic recipe to start playing out that content, and then we're going to move on to introducing dynamic parameters. Dynamic parameters are a way that you can customize your haptic patterns at playback time, in response to your user or your app's behavior. And we're going to explore a new way to express, store, and share your audio haptic content, a new file format we're calling the Apple Haptic Audio Pattern, or AHAP.

So let's get to it. First, what is Core Haptics? We can think of it as an event-based audio and haptic rendering API or synthesizer for iPhone. We can continue to use our other audio, haptics, and feedback APIs like AVAudioPlayer and UIKit's UIFeedbackGenerator in parallel with Core Haptics.

You might be wondering, which iPhones can I use this on? With just one API and one file format, we will be able to access hundreds of millions of Taptic Engine equipped iPhones starting from iPhone 8 onward. And we've taken care that your haptic patterns will have the same feel across all of these products, so much so that you're going to be able to prototype and release just using one product. And these iPhones aren't equipped with just any old commodity actuator. They all have the Apple-designed Taptic Engine, which offers you that unique combination of power, a wide expressive range, and an unmatched precision and control and subtlety. Next, I'd like to talk about those of you who have already started adopting haptics on iPhone with UIKit's feedback generator APIs.
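Because support depends on the hardware, an app would typically check for it before creating any haptic content. A minimal sketch, assuming a helper named `deviceSupportsHaptics` (a hypothetical name), could look like this:

```swift
import CoreHaptics

/// Returns true when the current device can play Core Haptics patterns.
/// `deviceSupportsHaptics` is a hypothetical helper name used for illustration.
func deviceSupportsHaptics() -> Bool {
    // Ask the system what the hardware can do before building any haptic content.
    CHHapticEngine.capabilitiesForHardware().supportsHaptics
}
```

On devices where this returns false, the app can skip engine creation and fall back to visuals and audio only.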

Now, Core Haptics is not a replacement for this API.

In most cases, you want to keep on using Feedback Generator especially for UIKit controls and adding haptics to that. With that API, you indicate the design intent for your event, whether that's a selection, an impact, or a notification, and you let someone else, Apple, worry about developing a vocabulary to express that and mixing the right modalities like audio, haptics, animation to communicate that message.
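For comparison, here is a small sketch of the feedback generator approach, where the app states the intent and the system chooses the actual feel. The `Feedback` wrapper and its method names are assumptions made for illustration; the generator classes themselves are UIKit's.

```swift
import UIKit

/// Hypothetical convenience wrapper around UIKit's feedback generators.
@MainActor
enum Feedback {
    /// A selection changed, for example while scrolling through picker values.
    static func selectionChanged() {
        let generator = UISelectionFeedbackGenerator()
        generator.prepare()
        generator.selectionChanged()
    }

    /// Two UI elements collided or snapped into place.
    static func impact() {
        let generator = UIImpactFeedbackGenerator(style: .medium)
        generator.prepare()
        generator.impactOccurred()
    }

    /// A task finished successfully.
    static func success() {
        let generator = UINotificationFeedbackGenerator()
        generator.prepare()
        generator.notificationOccurred(.success)
    }
}
```

Nothing here describes intensity, sharpness, or timing; that vocabulary is left to the system, which is the trade-off described above.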

Now this API is also being improved in iOS 13. So please check out its documentation for more details. In contrast, Core Haptics is good when you want to be your own sound and haptic designer.

With it, you develop your own patterns, and you can have a lot more control over exactly what time they get played, so you can synchronize with other APIs like an animation from Core Animation or a sound event from AVAudioEngine.

You have a much richer set of playback and modulation controls.

Now, UIKit is built on top of Core Haptics, so both APIs share the same low latency performance characteristics.

Now, designing your own haptic patterns is going to take more time, but when it allows you to do something that you couldn't otherwise do, and when it allows you to differentiate your app, then it's worth thinking about. Now, next I'd like to talk a bit more about those audio capabilities.

So, Core Haptics is also an audio API, and that allows you to play short, synthesized, or custom waveform audio in tight sync with your haptics.

This type of audio haptic duality has been crucial to many of Apple's own haptic experiences like the haptic home button in iPhone 7, the haptic crown in Series 4 Watch, and the UIDatePicker, those scrolling wheels that you use to select dates and times and alarms and calendars.

And you may not have realized that. You may not even have noticed that there was audio in these experiences, but if you were to cover up that audio or take it away, you'd realize that it's an inseparable and integral part of that experience. So now, you can do the same with Core Haptics in your own apps. And I want to talk about some categories of apps, one huge category in particular, where you might want to think about Core Haptics, and that's games. So, imagine we are at the racetrack. We want to go into turbo mode. Let's imagine. When you've got a brute force message to deliver, think about using synchronized haptics and audio in your app to generate those visceral explosions and rumbles.

Now, another very nice application is simulating physical contact, to make your applications feel more realistic. Think about a tennis game.

You could have audio and haptic components where the pitch of your audio and the intensity of your haptics are proportional to how fast your swing is or how close the ball lands to the middle of the racket. And you can even control how long the strings of your racket will resonate after the impact.

So, another great area to think about using Core Haptics is in augmented reality apps.

And there, if you're working in this space, you're already familiar with the benefits of having high visual fidelity paired with 3D audio, working in concert. Now, we can reach for that next level of immersion by considering how custom haptic feedback can ground our user gestures or respond to app, device, and AR object events. For example, moving your device around or having your users move around. As an inspiration, this year we've enhanced the SwiftShot sample code by using haptics that are modulated based on how fast you pull back the sling, how fast you pull back your phone. You're going to feel the tension building up as you stretch it back, and then the very satisfying thunk as you release.

I'd like to show you a video of this, and I'm going to use audio to represent just the haptics that you're going to feel. They're going to sound like this. Now, we're going to see the whole thing together, visuals and haptics, no regular audio.

So that was an example of how we can use haptics, sound, and visuals all synchronized together to enhance our AR experience. Now, these are just a few categories of apps, games, and AR that are ripe for creative explorations with haptics and their corresponding sounds. I'm sure you're going to think of many, many more. So, now let's get into how we can start expressing our content with Core Haptics.

There are just two groups of classes in Core Haptics. There are those to represent your content and those to play back that content. Let's take a closer look at the content side first. The basic indivisible content element in Core Haptics is called a CHHapticEvent.

Now each event has a type and a time, and optionally parameters that will customize its feel.

These events can overlap each other, and when they do, they mix.

And all events are grouped into a pattern.
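To make the event and pattern relationship concrete, the sketch below creates three transient events at different times and groups them into one pattern. The function name `makeTripleTapPattern` and the event times are illustrative assumptions; the events carry no optional parameters, so they use the system defaults.

```swift
import CoreHaptics

/// Groups three transient events into a single pattern.
/// The name and the 0.15 s spacing are assumptions chosen for illustration.
func makeTripleTapPattern() throws -> CHHapticPattern {
    // Each event has a type and a time relative to the start of the pattern.
    let times: [TimeInterval] = [0, 0.15, 0.30]
    let taps = times.map { time in
        CHHapticEvent(eventType: .hapticTransient, parameters: [], relativeTime: time)
    }

    // All events are grouped into one pattern, which is what a player plays back.
    return try CHHapticPattern(events: taps, parameters: [])
}
```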

Next, I'd like to talk about those types of events. Our first type is called the Haptic Transient. The Haptic Transient, I think of it as a gavel, it's a striking motion. It's momentary and instantaneous. And then we have two continuous types.

We have Haptic Continuous and Audio Continuous. And there, I think of, for example, bowing a stringed instrument. It is longer than a transient. It can be, for example, used as a background texture, and you have a much richer set of knobs that you can use, for example, to modulate the resonance of it.

Lastly, we have the Audio Custom type, and the Audio Custom, as I mentioned earlier, is where you can provide your own audio to be played back in sync with the haptics.
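As a sketch of how these event types can be combined, the following overlaps a continuous haptic texture, a continuous synthesized audio bed, and a transient strike. The durations, the volume value, and the name `makeTexturePattern` are assumptions. The Audio Custom type is left out because it additionally requires registering an audio file with the engine first.

```swift
import CoreHaptics

/// Combines haptic continuous, audio continuous, and haptic transient events.
/// All times and values are illustrative assumptions.
func makeTexturePattern() throws -> CHHapticPattern {
    // A background haptic texture that lasts two seconds.
    let hapticTexture = CHHapticEvent(
        eventType: .hapticContinuous,
        parameters: [],
        relativeTime: 0,
        duration: 2.0)

    // A quiet synthesized tone that plays over the same two seconds.
    let audioTexture = CHHapticEvent(
        eventType: .audioContinuous,
        parameters: [CHHapticEventParameter(parameterID: .audioVolume, value: 0.3)],
        relativeTime: 0,
        duration: 2.0)

    // A momentary strike halfway through; it overlaps and mixes with the textures.
    let strike = CHHapticEvent(eventType: .hapticTransient, parameters: [], relativeTime: 1.0)

    return try CHHapticPattern(events: [hapticTexture, audioTexture, strike], parameters: [])
}
```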

Next, let's talk about some of those optional parameters. Our first event parameter is called haptic intensity, and it has an audio analog, audio volume, which you're probably already familiar with.

Now, with this parameter, as you turn the knob from 0 all the way up to 1, you go from no output to the maximum output of the system. Our next parameter is called haptic sharpness. Now, haptic sharpness is a new concept. There's no physical analog to this, and there's also no audio analog. In this world, I want you to instead think of moving along in a perceptual space from a very round and organic feel at 0 all the way to a more crisp and precise feel at 1. And to help ground that a bit further, I'm going to use some examples from iOS 12.

The flashlight button on your lock screen is an example of a very high sharpness haptic, and the app switcher, that swipe up, that's an example of a more round, lower sharpness haptic. As for the why, why those two types of experiences were, you know, sharp and not sharp, I'm going to refer you to our talk on audio haptic design. Now, we have several more types of event parameters, for example, ones that apply to audio, like pitch and pan, and for haptics, we have things that let you change the resonance and so forth. But these two, intensity and sharpness, will be enough to get us going. Now, to develop a feel for that dynamic range and precision of intensity and sharpness, we've got a sample code, the Palette, which allows you to try out these experiences for yourself. As you tap or drag your finger around, you'll be accessing the sharpness axis as well as the intensity axis, and it's going to play out the corresponding continuous or transient haptic as you do that.
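The two parameters can be attached to an event as shown in this sketch. The helper names `unitClamped` and `makeTransient` and the specific sharpness values are assumptions for illustration; only the 0 to 1 range comes from the description above.

```swift
import CoreHaptics

/// Clamps a value into the 0...1 range used by intensity and sharpness.
func unitClamped(_ value: Float) -> Float {
    min(max(value, 0), 1)
}

/// Creates a transient event with the given intensity and sharpness.
/// `makeTransient` is a hypothetical helper, not part of Core Haptics.
func makeTransient(intensity: Float, sharpness: Float, at time: TimeInterval = 0) -> CHHapticEvent {
    CHHapticEvent(
        eventType: .hapticTransient,
        parameters: [
            CHHapticEventParameter(parameterID: .hapticIntensity, value: unitClamped(intensity)),
            CHHapticEventParameter(parameterID: .hapticSharpness, value: unitClamped(sharpness))
        ],
        relativeTime: time)
}

// Same strength, different character: a round tap and a crisp tap (assumed values).
let roundTap = makeTransient(intensity: 1.0, sharpness: 0.1)
let crispTap = makeTransient(intensity: 1.0, sharpness: 0.9)
```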

This will help you get that intuition.

So, that was an introduction about where we can use Core Haptics and also how to specify our content. Now, let's invite Doug Scott, our Core Haptics architect, to get us started with playing back Core Haptics, playing back those patterns, and integrating Core Haptics into our apps. Please welcome Doug.

Thank you, Michael.

Good evening everyone. I am thrilled to be here to talk to you about integrating the Core Haptics API into your applications.

Before I show a demo and dive into the code, let's walk through the basic steps that your application will follow when you want to play a haptic pattern.

Creating your content is a good first step because this can be done at any point prior to the point where you need to use it. In this example, we load an NSDictionary into a haptic pattern. The dictionary might have been something that we stored in our application as part of our resources.
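A minimal sketch of building a pattern from a dictionary might look like the following. The dictionary is constructed in code here rather than loaded from resources, its contents (a single transient at time zero) are an assumption, and `makePatternFromDictionary` is a hypothetical name.

```swift
import CoreHaptics

/// Builds a one-event pattern from a dictionary description.
/// In an app, the dictionary could instead come from a bundled resource.
func makePatternFromDictionary() throws -> CHHapticPattern {
    // One event entry: its type and its time relative to the pattern start.
    let tapEvent: [CHHapticPattern.Key: Any] = [
        .eventType: CHHapticEvent.EventType.hapticTransient.rawValue,
        .time: 0.0
    ]

    // The top-level pattern key holds an array of event entries.
    let patternDictionary: [CHHapticPattern.Key: Any] = [
        .pattern: [
            [CHHapticPattern.Key.event: tapEvent]
        ]
    ]

    return try CHHapticPattern(dictionary: patternDictionary)
}
```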

As we will see later, patterns can also be created right before they are to be played if they need to vary interactively in response to changes in your application. The next step is to create an instance of the haptic engine.

This should be done as soon as your application knows that it will be making use of haptics. Next, you create a haptic player for your haptic pattern. Each player is associated with a single pattern and a particular haptic engine. Starting the haptic engine tells the system to initialize the audio and haptic hardware in preparation for a request to play the pattern. At the moment that your application wants the pattern to play, you start the player. This can be done in two modes. The first, which we could call immediate mode, tells the system that you wish this pattern to play at the soonest possible moment with minimal latency.

In the second, scheduled mode, you hand it an absolute timestamp, which tells the system that you want to synchronize this event with some other system, such as another audio player or a game event or a graphics event.

If you want to know when your pattern is finished playing, you can have the haptic engine notify you via callback when your player or players are done. Here, the engine calls back to the application, and the application can now choose to stop the haptic engine or can continue on with the next haptic pattern.

Those are the basic steps. Now, let's see an example of an application which uses this system.
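The steps just described can be sketched end to end as follows. The class name `TapPlayer`, the `delay` parameter, and the single-tap pattern are assumptions for illustration. A real app would usually create the engine once and reuse it instead of creating it on every call.

```swift
import CoreHaptics

/// Hypothetical helper that walks through the basic playback steps.
final class TapPlayer {
    // Keep a strong reference so the engine outlives the call that starts playback.
    private var engine: CHHapticEngine?

    func playTap(after delay: TimeInterval = 0) throws {
        // 1. Create the content.
        let tap = CHHapticEvent(eventType: .hapticTransient, parameters: [], relativeTime: 0)
        let pattern = try CHHapticPattern(events: [tap], parameters: [])

        // 2. Create the haptic engine.
        let engine = try CHHapticEngine()
        self.engine = engine

        // 3. Create a player for this pattern on this engine.
        let player = try engine.makePlayer(with: pattern)

        // 4. Start the engine so the hardware is ready.
        try engine.start()

        // 5. Start the player: immediate mode, or scheduled against the engine clock.
        let startTime = delay > 0 ? engine.currentTime + delay : CHHapticTimeImmediate
        try player.start(atTime: startTime)

        // 6. Ask to be called back when all players are done, then stop the engine.
        engine.notifyWhenPlayersFinished { _ in
            return .stopEngine
        }
    }
}
```

Returning `.leaveEngineRunning` from the callback instead would keep the engine ready for the next pattern.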

But before we do, we need to let you in on a secret. Demonstrating the use of an API that generates tactile feedback presents a unique problem: you in the audience can't feel it. The way we handled this was by adding an audio equivalent for each haptic event into the output, which will let you hear the effect of the haptics. This application uses a simple physics engine to move the ball around the screen in response to the accelerometer. It generates haptic and audio feedback when the ball impacts the edges of the screen. The user has the sense they are feeling the impacts through the edges of the game wall as well as hearing them. The harder the ball hits the edge, the more intense the haptic and the louder the audio. Okay. Let's look at the code for this example to see how to integrate the Core Haptics API into your application. We'll see how event parameters are used to produce changes in haptics and audio.

All of the code in this example is taken from the sample code on the website, but it's been edited down to show the important points.

First, we import the Core Haptics module along with the other modules we need for the application. The CHHapticEngine is declared as a member variable of our view controller, because we want to be able to control its lifetime and have it exist for the lifetime of the application. As discussed in our flow chart earlier, we set up the haptic engine in advance of when we want to use it. Here, we call a helper method at the point that the view has loaded. In the helper method, we begin by creating the instance of the haptic engine and checking for possible errors. The engine is assigned to our member variable so we can keep it around. It is optional but extremely useful to assign a closure to the engine's stoppedHandler property. This will be called if the engine is stopped by some action other than the application itself asking it to. Some possible reasons that this might happen are an audio session interruption or the application being suspended. We finish this method by starting the haptic engine and checking for possible errors.

The engine will continue to run until the application or a possible outside action stops it.

Note that the application tracks whether or not the engine needs to be restarted.

Typically, you might leave the engine running for the entire time that any view with haptic interaction is visible on the screen.
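As a rough sketch of the setup just described, assuming a hypothetical `HapticsController` class in place of the view controller (its `setUp()` method would be called from something like `viewDidLoad`), and an assumed `needsRestart` flag for tracking whether the engine must be started again:

```swift
import CoreHaptics

// Hypothetical owner of the engine; in the talk this role is played
// by the view controller.
final class HapticsController {
    // Stored as a property so the engine outlives any single playback.
    private(set) var engine: CHHapticEngine?
    // Assumed flag: true whenever the engine must be (re)started before use.
    private(set) var needsRestart = true

    var isReady: Bool { engine != nil && !needsRestart }

    func setUp() {
        // Not all hardware supports haptics, so check first.
        guard CHHapticEngine.capabilitiesForHardware().supportsHaptics else { return }
        do {
            let engine = try CHHapticEngine()
            // Called when something other than the app stops the engine,
            // such as an audio session interruption or app suspension.
            // The handler may run off the main thread.
            engine.stoppedHandler = { [weak self] reason in
                print("Haptic engine stopped, reason code \(reason.rawValue)")
                self?.needsRestart = true
            }
            self.engine = engine
            try engine.start()
            needsRestart = false
        } catch {
            print("Haptic engine setup failed: \(error)")
        }
    }
}
```

Before playing anything later on, the app could check `needsRestart` and call `start()` on the engine again if the flag is set.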

Here's the location in the application where the simple physics engine lets us know that the ball has collided with the wall. In this example, we want to generate our haptic and audio pattern to interactively track the velocity of the ball. So the pattern player and its pattern are created at the moment they are needed.

This method is responsible for creating the pattern to be played in response to the ball collision.

In here, we will create a pattern with two events, one haptic and one audio. We create a haptic event of type haptic transient to produce that impactful feel, and we give it two event parameters, which configure the event's sharpness and intensity, which you've heard about already, based upon the velocity of the ball.

Then, we create the audio event, of type audio continuous, with a set of event parameters for volume and envelope decay, also calculated from the ball's velocity. The sustained parameter here ensures that the intensity of this event will die off to zero instead of continuing on for the length of the event. We create a pattern containing these two events, synchronized in time. Finally, we create the pattern player from this pattern and return it to the caller, back in the method that responds to the collision.

The final step is to start the pattern player at time CHHapticTimeImmediate, which indicates to play it back as soon as possible with minimal latency.

Notice that the app does not hold onto the instance of this player.

Its pattern is guaranteed to continue playing until it is finished so the application can simply fire and forget it. And that's the basic recipe for playing your content using a pattern that is created programmatically within your app's code.
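The collision pattern described above might look something like the following sketch. The helper names `lerp` and `makeCollisionPlayer`, the numeric ranges, the 0.5 second audio duration, and the idea of passing in a speed already normalized to 0...1 are all assumptions for illustration; the real sample computes its own values from the ball's velocity.

```swift
import CoreHaptics

// Linear interpolation with the input clamped to 0...1 (hypothetical helper).
func lerp(_ t: Float, from lower: Float, to upper: Float) -> Float {
    lower + (upper - lower) * min(max(t, 0), 1)
}

// Builds a player for one collision. `normalizedSpeed` is assumed to be the
// ball's speed scaled into 0...1 by the caller.
func makeCollisionPlayer(engine: CHHapticEngine,
                         normalizedSpeed: Float) throws -> CHHapticPatternPlayer {
    // Haptic transient: harder hits are more intense and a little less sharp.
    let hapticEvent = CHHapticEvent(eventType: .hapticTransient, parameters: [
        CHHapticEventParameter(parameterID: .hapticIntensity,
                               value: lerp(normalizedSpeed, from: 0.5, to: 1.0)),
        CHHapticEventParameter(parameterID: .hapticSharpness,
                               value: lerp(normalizedSpeed, from: 0.9, to: 0.5))
    ], relativeTime: 0)

    // Audio continuous: louder for harder hits, with sustain switched off
    // so the sound decays instead of holding for the whole duration.
    let audioEvent = CHHapticEvent(eventType: .audioContinuous, parameters: [
        CHHapticEventParameter(parameterID: .audioVolume,
                               value: lerp(normalizedSpeed, from: 0.1, to: 0.4)),
        CHHapticEventParameter(parameterID: .decayTime,
                               value: lerp(normalizedSpeed, from: 0.0, to: 0.1)),
        CHHapticEventParameter(parameterID: .sustained, value: 0)
    ], relativeTime: 0, duration: 0.5)

    // Both events start at time 0, so they play in sync.
    let pattern = try CHHapticPattern(events: [hapticEvent, audioEvent], parameters: [])
    return try engine.makePlayer(with: pattern)
}
```

A collision handler could then call `try makeCollisionPlayer(engine: engine, normalizedSpeed: speed).start(atTime: CHHapticTimeImmediate)` without storing the player.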

Again, because this app is continuously interactive, we don't stop the haptic engine until the game screen is no longer visible. Now, let's take a moment to talk about one of the most powerful capabilities of Core Haptics, Dynamic Parameters. Dynamic Parameters let you increase and decrease the value of the existing event parameters for all active and upcoming events in a pattern as it plays. Dynamic Parameters take effect at the time stamp you provide.

You can adjust multiple different parameters at the same time or with any arbitrary time relationship. You can include dynamic parameters when you create your pattern or send them to your player in real time during playback. This allows you to use a single pattern to generate an infinite number of haptic and audio variations by adjusting the pattern dynamically. Let's take a look at an example. In this diagram on the bottom we have a haptic pattern that was designed with all haptic event intensities set to their maximum value. The first half consists of haptic transients. The second half is a haptic continuous event.

We'd like to reduce the intensity of all the game's haptics temporarily, for example, if a character was speaking in the game. I send a dynamic parameter for intensity with a value of 0.3 that takes effect at time 0.5 seconds. You can see that it reduced the intensity of the event at that time significantly to about a third of what it was unmodified.
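The intensity example above could be expressed along these lines. The function names are hypothetical; the 0.3 value and 0.5 second offset come from the example in the talk. The sketch shows both routes mentioned earlier: baking the parameter into the pattern, and sending it to a player during playback.

```swift
import CoreHaptics

// Dynamic parameter that scales haptic intensity to 0.3,
// taking effect 0.5 seconds after its reference time.
func makeDuckingParameter() -> CHHapticDynamicParameter {
    CHHapticDynamicParameter(parameterID: .hapticIntensityControl,
                             value: 0.3,
                             relativeTime: 0.5)
}

// Option 1: include the parameter when the pattern is created.
// Here the 0.5 seconds is measured from the start of the pattern.
func makeDuckedPattern(events: [CHHapticEvent]) throws -> CHHapticPattern {
    try CHHapticPattern(events: events, parameters: [makeDuckingParameter()])
}

// Option 2: send the parameter to a player in real time.
// Here the 0.5 seconds is measured from the moment of the call.
func duckHaptics(on player: CHHapticPatternPlayer) throws {
    try player.sendParameters([makeDuckingParameter()],
                              atTime: CHHapticTimeImmediate)
}
```

Sending a second parameter with a value of 1.0 later on would be one way to restore the original intensity once the character stops speaking.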

Finally, let's look at another way to create patterns. So, what exactly is AHAP?

The Apple Haptic Audio Pattern is a specification for describing a Core Haptics pattern in a text-based format. It is built from nested key value pairs, which will become quite familiar to you once you start working with the classes which make up the Core Haptics API.

It is a schema for the widely established JSON file format, which means there are already quite a number of different frameworks that can read, write, and edit these files, including such things as the Swift Codable framework. AHAP makes it easy to share and edit haptic patterns because it is a format that all developers can agree on. Loading your haptic patterns from external AHAP files allows you to separate your content from your application code. Using the magic of a slide deck, we're going to create a simple AHAP file here. We start with a version string, which indicates which version of the system this pattern was designed for. Next, we add the key for our pattern, which will be an array of dictionaries. We add our first event dictionary to our pattern array. This event has two required key value pairs, a time in seconds at which the event should happen relative to the start of the pattern and the type of event. This is a haptic transient event starting as soon as the pattern starts. To this event, we add event parameters, which will affect only this event. These are stored in their own array of dictionaries.

We add an event parameter to control the intensity of the event and another to control its sharpness.

We can add a second event in the same fashion. This one starts at an offset of 0.5 seconds from the first and is of type Haptic Continuous. For the event parameters, we use the same as we had for the first event.

Events of type Haptic Continuous and Audio Continuous require an event duration in addition to the time and event type. This duration value is always specified in seconds. Here is a visual representation of the pattern we just created. You can see the two types of events, the Haptic Transient at the very beginning, the continuous later, with their relative timing and duration and their intensity and sharpness parameter values. That was a quick tour of AHAP. This diagram shows a summary of the AHAP file structure, a single pattern consisting of an array of event dictionaries, optional dynamic parameters, and optional use of parameter curves, which are an extension of dynamic parameters, which you can read more about on the website.
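To make the file structure concrete, here is a sketch of the two-event pattern built above, held in a Swift string and decoded with Codable. The parameter values (0.8 and 0.4) and the 1.0 second duration are placeholders, since the talk does not state them; the `AHAPFile` types are a hypothetical model covering only the keys used here, and the version is written as the number 1.0 on the assumption that this matches Apple's published sample files.

```swift
import Foundation

// Hypothetical Codable model of the subset of AHAP used in this example.
struct AHAPFile: Codable {
    struct Parameter: Codable {
        let id: String
        let value: Double
        enum CodingKeys: String, CodingKey {
            case id = "ParameterID"
            case value = "ParameterValue"
        }
    }
    struct Event: Codable {
        let time: Double
        let type: String
        let duration: Double?          // required only for continuous events
        let parameters: [Parameter]?
        enum CodingKeys: String, CodingKey {
            case time = "Time"
            case type = "EventType"
            case duration = "EventDuration"
            case parameters = "EventParameters"
        }
    }
    struct Entry: Codable {
        let event: Event?
        enum CodingKeys: String, CodingKey { case event = "Event" }
    }
    let version: Double
    let pattern: [Entry]
    enum CodingKeys: String, CodingKey {
        case version = "Version"
        case pattern = "Pattern"
    }
}

// The AHAP text itself: a transient at 0 s and a continuous event at 0.5 s.
let ahapText = """
{
  "Version": 1.0,
  "Pattern": [
    { "Event": {
        "Time": 0.0,
        "EventType": "HapticTransient",
        "EventParameters": [
          { "ParameterID": "HapticIntensity", "ParameterValue": 0.8 },
          { "ParameterID": "HapticSharpness", "ParameterValue": 0.4 }
        ] } },
    { "Event": {
        "Time": 0.5,
        "EventType": "HapticContinuous",
        "EventDuration": 1.0,
        "EventParameters": [
          { "ParameterID": "HapticIntensity", "ParameterValue": 0.8 },
          { "ParameterID": "HapticSharpness", "ParameterValue": 0.4 }
        ] } }
  ]
}
"""

// Decodes AHAP text into the model above.
func decodeAHAP(_ text: String) throws -> AHAPFile {
    try JSONDecoder().decode(AHAPFile.self, from: Data(text.utf8))
}
```

If the same text were saved in the app bundle as a file with the `.ahap` extension, `CHHapticEngine`'s `playPattern(from:)` method could play it directly from the file URL once the engine has been started.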

You can find a link to the full AHAP specification on our sessions page. Also, on our sessions page, you'll find a code example that shows how to create, load, and play the patterns described by AHAP files. This haptic sampler app includes a range of patterns that highlight the subtlety, dynamic range, and audio haptic sync that's possible with the Core Haptics API. Thank you very much, and now I'd like to return the stage to my colleague, Michael.

Thanks, Doug.

So, although we covered a lot of ground today, there is still much more to discover with Core Haptics.

Check out the online reference for details. Once you're up and running with the basics of specifying content and playing that content, you'll probably be wondering about the design principles for these joint haptic audio patterns. You'll be wondering, do the rules, the guidelines for sound design carry over to haptic design? What are some common pitfalls I should be aware of? The good news is that our audio and haptic design teams have been doing this for years, and they've helped put together some advice and guidance in an updated Human Interface Guidelines, or HIG, for haptics as well as in the accompanying talk at WWDC this year. Check it out. So, let's recap. Today we talked about where haptics can help you reach for that next level of immersion and make your app interactions more effortless.

Having synchronized and complementary audio and haptics together is a particularly effective combination, but there haven't been APIs that allow you to actually do this. With iOS 13, we now have the necessary ingredients to create these rich multimodal experiences. We have the vocabulary to describe haptic and audio events and a file format, AHAP.

We've got a new performant API, Core Haptics, which is designed for low latency and real-time modulation. We've put together sample code, sample patterns, design guidelines and support from Apple.

And lastly, you've got an incredible audience and incredible hardware, where you can feel your haptics as you intended. A huge installed base of Taptic Engines that give you the most powerful, expressive, and precise haptics hardware available. So, please come on down to the labs on Thursday and Friday where you can check out some of these haptics samples that we showed today and discuss your own ideas for your apps. You'll also find all of these guidelines and references online at our sessions page. I know you're going to have a lot of fun creating and using these haptic patterns in your apps. We can't wait to hear and feel what you come up with. Thank you and good night. [ Applause ]

-