Analyze hangs with Instruments

User interface elements often mimic real-world interactions, including real-time responses. Apps with a noticeable delay in user interaction — a hang — can break that illusion and create frustration. We'll show you how to use Instruments to analyze, understand, and fix hangs in your apps on all Apple platforms. Discover how you can efficiently navigate an Instruments trace document, interpret trace data, and record additional profiling data to better understand your specific hang.

If you aren't familiar with using Instruments, we recommend first watching "Getting Started with Instruments." And to learn about other tools that can help you discover hangs in your app, check out "Track down hangs with Xcode and on-device detection."

Chapters

- 1:56 - What is a hang?

- 3:51 - What is instant?

- 4:39 - Event handling and rendering loop

- 8:25 - Keep main thread work below 100ms

- 9:15 - Busy main thread hang

- 14:26 - Too long or too often?

- 21:46 - LazyVGrid still hangs on iPad

- 24:31 - Fix: Use task modifier to load thumbnail asynchronously

- 25:52 - Asynchronous hangs

- 32:38 - Fix: Get off of the main actor

- 35:57 - Blocked Main Thread Hang

- 39:19 - Fix: Make shared property async

- 40:35 - Blocked Thread does not imply unresponsive app

Related Videos

WWDC23

- Demystify SwiftUI performance

- Meet RealityKit Trace

- Meet the presenter: Analyze hangs with Instruments


♪ ♪ Joachim Kurz: Welcome to "Analyze Hangs with Instruments." My name is Joachim, and I am an engineer working on the Instruments team. Today, we want to take a closer look at Hangs. First, I'll give you an overview of what a hang is, and to do so, we'll need to talk about human perception. Then, I'll briefly talk about the event handling and rendering loop, as it forms the basis to understand how a hang is caused. Armed with this theoretical knowledge, we'll jump into Instruments and look at three different hang examples: a busy main thread hang, an asynchronous hang, and a blocked main thread hang. For each of these, I'll show you how to recognize them, what to look for when analyzing them, and how to know when to add other instruments to your document to learn more.

Before we start: for part of this session, it's helpful to be somewhat familiar with Instruments. If you have ever profiled an application with Instruments, you should be good to go. Otherwise, check out our 2019 session, "Getting Started with Instruments." When dealing with hangs, there are usually three steps. You find a hang, you then analyze the hang to understand how it happens, and then you fix it (and verify it is actually fixed).

Today we will assume you've already found a hang and focus on the analyzing part, as well as discussing some fixes.

If you want to know more about finding hangs, take a look at our session, "Track down hangs with Xcode and on-device detection" from WWDC22. It covers all our tools for finding hangs, including: Instruments, On-device Hang Detection, which you can enable in the iOS Developer settings, and Xcode Organizer.

Today, we'll use Instruments to analyze a hang we've already found. To better understand hangs, let's talk about human perception and turn on the light.

We need a light bulb and a cable. Ah, much better. Like a lamp should, it turned on when I plugged in the cable. And when I pull it out again, it shuts off. Instantly.

But what if there was a delay? I plug it in. And here it took a moment to turn on. Even weirder, the same thing happens when I pull the cable out again. The delay between the cable being plugged in and the light turning on was only 500 milliseconds. But it already makes you wonder what's going on inside this box. It doesn't feel quite right that the lamp doesn't turn on and off directly.

However, in some other circumstances, a 500 millisecond delay might be OK. What kind of delay is acceptable depends on the circumstances. Let's say you overhear a conversation like this: "How do turtles communicate?" "Shell-phones." Here, we had a delay of one second between question and answer. And that felt totally natural. But a delay on the lamp doesn't. Why is that? The conversation between the turtle and the unicorn is a request-response style interaction, but plugging in a lamp is directly manipulating a real object. Real objects react instantly. If we simulate a real thing, it also needs to react instantly. If it doesn't, it breaks the illusion.

You had no issue with me claiming that I've got an actual lamp here when there was no delay between the cable being plugged in and the light turning on. But when there's a significant delay, your brain suddenly says, "Wait a moment, that's not how this stuff works." But how fast is instant? What delay is small enough for us not to notice? Here's our baseline with no delay.

How about 100 ms? To me, it felt like I noticed a tiny delay on turning it on, but not when turning it off, and only when I look closely. Your experience might be different. 100 ms is somewhat of a threshold. Significantly smaller delays aren't really perceivable anymore.

Let's try 250 ms.

250 ms doesn't feel instant anymore.

It's not slow, but the delay is definitely noticeable.

These kinds of perception thresholds also inform our hang reporting. A delay below roughly 100 ms for a discrete interaction, like tapping a button, will usually feel instant. There are some special cases where you might want to go even below that, but it's a good goal to aim for. Above that, it depends on the circumstances. Up to 250 ms, you might get away with it. Longer than that and it becomes noticeable, at least subconsciously.

It's a continuous scale, but above 250 ms, it certainly doesn't feel instant anymore. So most of our tools start reporting hangs by default starting at 250 ms, but we call these "micro hangs" as they are easy to ignore. Depending on the context, those might be OK, but often they are not. Everything above 500 ms we consider a proper hang. Based on this, we can roughly use these thresholds: If you want something to feel instant, aim for 100 ms or less in delay. If you have a request-response style interaction, 500 ms without any additional feedback might be OK.
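As a rough illustration, the thresholds above can be written down as a small classification function. This is only a sketch: the `Responsiveness` type, its case names, and the `classify` function are hypothetical and not part of any Apple API, and the boundaries are the approximate values named in this session.

```swift
/// Rough severity buckets based on the thresholds named in this session.
/// The type and case names are illustrative, not an Apple API.
enum Responsiveness: Equatable {
    case instant     // up to about 100 ms
    case noticeable  // above 100 ms, below the 250 ms reporting threshold
    case microHang   // 250 ms up to 500 ms
    case hang        // above 500 ms
}

/// Maps a main-thread delay in milliseconds to one of the buckets above.
func classify(delayInMilliseconds delay: Double) -> Responsiveness {
    if delay <= 100 { return .instant }
    if delay < 250 { return .noticeable }
    if delay <= 500 { return .microHang }
    return .hang
}
```

Human perception is a continuous scale, so the hard boundaries here are a simplification.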

But actually, we often have both in an interaction. Let's look at an example.

I just finished writing this email to all the colleagues who helped in preparing this session and I'm ready to send it. I move my mouse over to the Send button and click it and a moment later, the email window animates out to indicate it is being sent. What happened here is that you actually saw two things happening. First, the button highlighted, then there was a small delay of 500 ms, then the email window animated out. But this delay felt fine because we already knew our request was received due to the button highlighting. We treat the button as a "real" thing and we expect it to update in "real" time, instantly.

So for the actual UI elements in our interface, we usually want to aim for this “instant” update.

To enable our UI elements to react “instantly” it is vital to keep the main thread free from non-UI work. To see why that is, let's take a closer look at the event handling and rendering loop to see how events are processed on Apple platforms and how user input leads to a screen update.

At some point, someone will interact with the device. We have no control over when that happens. First, there's usually some hardware involved, like a mouse or a touchscreen. It detects the interaction, creates an event, and sends it to the operating system. The operating system figures out which process needs to handle the event and forwards it to that process, for example, your app. In the app, it's the responsibility of the app's main thread to handle events. This is where most of your UI code runs. It makes a decision how to update the UI. Then this UI update gets sent to the render server, which is a separate process responsible for compositing the individual UI layers and rendering the next frame. Lastly, the display driver picks up the bitmap prepared by the render server and updates the pixels on screen accordingly. If you want to know more about how this works, we cover this in the documentation under "Improving app responsiveness." For us, this rough overview is enough to understand what's going on.

Now, when another event comes in during this time, it can usually be processed in parallel. But, if we look at how a single event travels through the pipeline, we still need to look at all the steps in sequence. The event processing steps before we get to the main thread and the render and update display steps after it are usually fairly predictable in their duration. When we encounter a significant delay in interaction, it is almost always because the portion on the main thread took too long or because something else is still executing on the main thread when the event comes in, so we need to wait for it to finish before the event can be handled.

Given that every update to a UI element needs some time on the main thread, and we want these updates to happen within 100 ms to feel real, ideally, no work on the main thread should take longer than 100 ms. If you can be faster, even better. Note that long-running work on the main thread can also cause hitches, and lower thresholds apply to avoid hitches.

You can find more details about hitches in our Tech Talk "Explore UI animation hitches and the render loop" and our documentation about "Improving app responsiveness". Today, we focus on hangs.

One of my colleagues just found a hang in one of our apps, Backyard Birds, while working on a new feature. Let's profile the app with Instruments.

I have the Xcode project with the app here. All I need to do to profile the app in Instruments is click on the Product menu and then Profile and then Xcode will build the app and install it on the device, but not launch it.

Xcode will also open Instruments and configure it to target the same app and device that were configured in Xcode. In Instruments' template chooser, I will choose the Time Profiler template, which is often a good starting point if you don't yet know what you are looking for and want to get a better understanding of what your app is doing.

This creates a new Instruments document from the Time Profiler template. Among others, this new document contains the Time Profiler instrument and the Hangs instrument, both of which will be useful for our analysis. I click the Record button in the top left of the toolbar to start the recording. Instruments launches the configured application and starts capturing data.

So here I have the Backyard Birds app. I tap on the first garden to go to the detail view. When I tap the "Choose Background" button in a moment, a bottom sheet should come up, showing me a selection of background pictures to choose from. Let me do that now. The button is pressed but seems stuck. It took quite a while for the sheet to appear. A severe hang.

Instruments has been recording all this. I'm going to stop the recording by clicking on the Stop button in the toolbar. Instruments has also detected the hang. It measures the hang duration and labels the corresponding intervals according to the severity. In this case, Instruments shows us a “Severe Hang” has happened. This fits what we are experiencing while using the app as well.

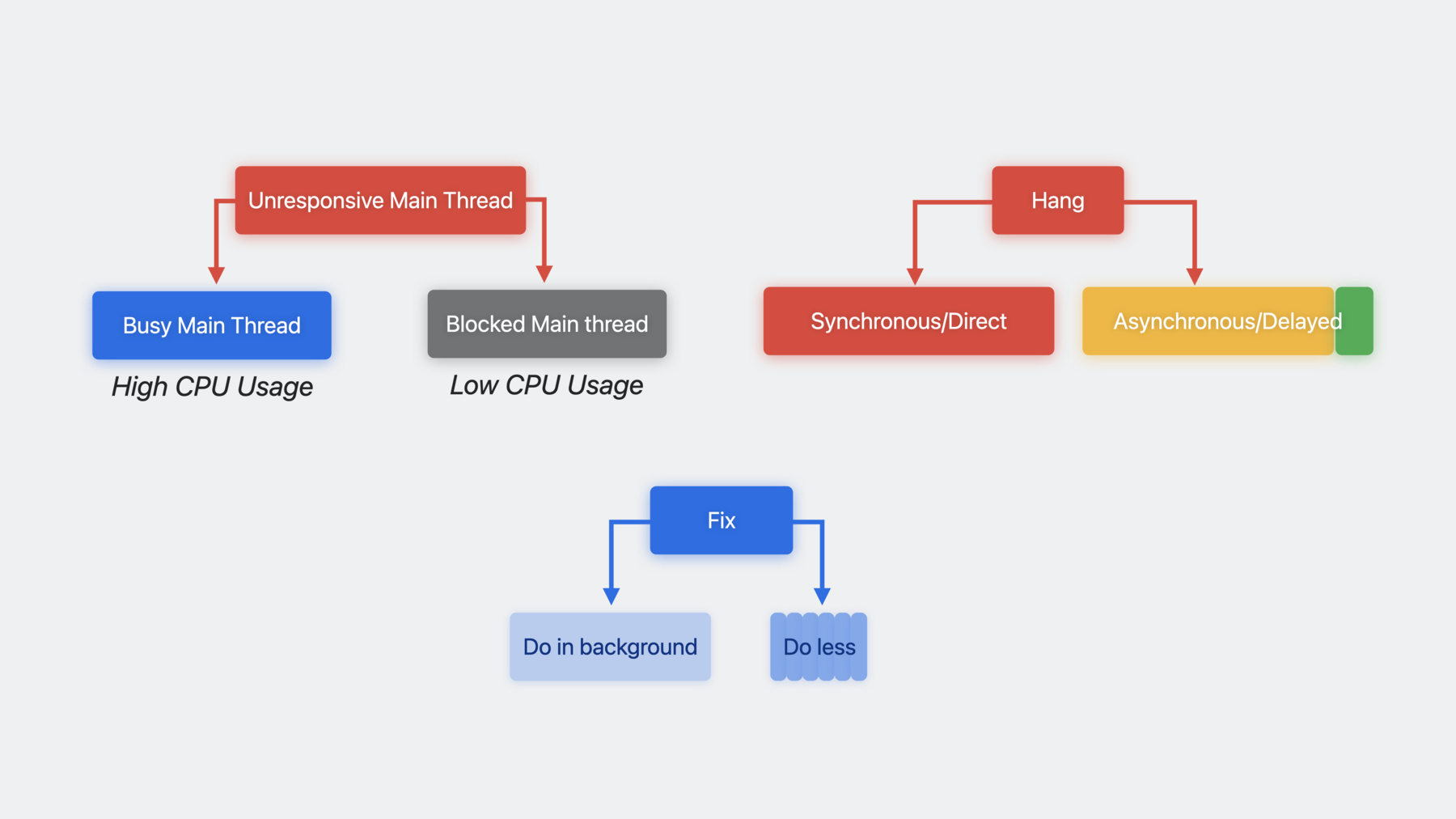

Instruments detected an unresponsive main thread and marks the corresponding interval as a potential hang. In our case, a hang did indeed occur. There are two main cases for an unresponsive main thread. The simplest case is that the main thread is still busy doing other work. In this case, the main thread will show a bunch of CPU activity. The other case is that the main thread is blocked. This is usually because the main thread is waiting for some other work to be done elsewhere. When the thread is blocked, there will be little to no CPU activity on the main thread. Which case you have determines which steps you should take next to determine what's going on.
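To make the two cases concrete, here is a minimal sketch of what each might look like in code. Both functions are hypothetical stand-ins, not code from Backyard Birds. If either were called on the main thread with a large enough workload, the app would be unresponsive for that time, but the first would show high CPU usage and the second almost none.

```swift
import Foundation

/// Busy: the calling thread burns CPU for the whole duration of the loop.
func busyWork(iterations: Int) -> Int {
    var checksum = 0
    for value in 0..<iterations {
        checksum = (checksum &+ value) % 1_000_003
    }
    return checksum
}

/// Blocked: the calling thread is parked until another thread signals.
/// It uses almost no CPU while it waits.
func blockedWait(seconds: Double) {
    let semaphore = DispatchSemaphore(value: 0)
    DispatchQueue.global().asyncAfter(deadline: .now() + seconds) {
        semaphore.signal()
    }
    semaphore.wait() // the thread sleeps here until signal() is called
}
```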

Back in Instruments, we'll need to find the Main Thread. The last track in the document shows the track for our target process. It has a small disclosure indicator on the left to indicate that there are subtracks. I click it to reveal a separate track for each thread in the process. Then, I'll select the Main Thread track here. This also updates the detail area to show the Profile view, which shows us a call tree of all functions which executed on the main thread during the whole recording time.

But we are only interested in what happened during the hang, so I secondary-click on the hang interval in the timeline to display a context menu. I could choose Set Inspection Range here, but I'm going to hold down the Option key as well to get Set Inspection Range and Zoom instead.

This zooms in to the interval's range and filters the data displayed in the detail view to the selected time range.

While the CPU usage isn't 100% during the whole hang interval, it is still fairly high, with 60% to 90% CPU usage most of the time.

This is clearly a case of a busy main thread. Let's find out what all this CPU work is.

We could take a closer look at all the different nodes in the call tree now. But there's a great summary on the right side: the heaviest stack trace view.

When I click on a frame in the heaviest stack trace view, the call tree view updates to reveal this node. This also shows us that this method call is already pretty deep in the call tree.

The heaviest stack trace by default hides subsequent function calls that don't originate from your source code to make it easier to see where your source code is involved. We can apply a similar filter to the call tree view by clicking on the Call Tree button in the bottom bar and enabling the Hide System Libraries checkbox. This will filter out all functions from the system libraries and makes it easier to focus on our code.

The call tree view shows us that almost all our backtraces contain the "BackgroundThumbnailView.body.getter" call. It looks as if we should make our body getter faster, right? Not quite! So we know we have a busy main thread case, meaning the CPU is doing a lot of work. We also have found a method where a lot of CPU time is spent. But there are two different cases now. We might be spending a lot of CPU time in this method because the method itself runs for a long time. But it could also be that it is just called a lot of times, which is why it shows up here. How we should reduce the work on the main thread depends on which case we have.

A typical call stack is structured like this. There's a call from the main function, which calls out to some UI frameworks and a bunch of other stuff, and then, at some point, your code is called. If this function is only called once and that one call takes a long time, like our Turtle function here, then we want to look at what it calls. Maybe it does a lot of work. Then we can maybe do less of it. But it could also be that the method we are investigating is called a lot of times, like Unicorn here. And then, of course, the work it does is done over and over again as well. This is usually because there is some caller that calls the function, Unicorn, a lot of times, for example, from a loop. Rather than optimizing what the focused function, Unicorn here, does, it might be more beneficial to investigate how we can call it less often.

That means the direction we need to look at next depends on the case we have.

For a long-running function, like our Turtle case, we want to look at its implementation and its callees.

We need to look further down. However, if a function is called a lot of times, like Unicorn, it is more beneficial to look at what is calling it and determine whether we can do so less often. We need to look further up. But Time Profiler cannot tell us which case we have. Let's assume the calls to Unicorn and Turtle happened right after another. Time Profiler gathers data by checking what's running on the CPU in regular intervals. And for each sample, it checks which function is currently running on the CPU. For this example, we would get both Turtle and Unicorn four times. But it could also be that this is a very fast Turtle, and Unicorn takes much longer, or other combinations. All of these scenarios would create the same data in Time Profiler.

To measure the execution time of a specific function, use os_signposts. We talked about how to do so in our 2019 session, "Getting Started with Instruments". There are also specialized instruments for various technologies that can tell you precisely what's going on. One of which is the SwiftUI View body instrument.
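As a minimal sketch of the signpost approach, the snippet below wraps a piece of work in a signpost interval using `OSSignposter`, the Swift interface to signposts available on recent OS versions. The subsystem string, category, interval name, and the `measured` helper are placeholders chosen for this example. The session itself does not show this code.

```swift
import os

// Placeholder subsystem and category; use identifiers that fit your app.
let signposter = OSSignposter(subsystem: "com.example.BackyardBirds", category: "Thumbnails")

/// Runs `work` inside a signpost interval so Instruments can show
/// exactly when each call started and how long it took.
func measured<T>(_ work: () -> T) -> T {
    let state = signposter.beginInterval("Thumbnail")
    defer { signposter.endInterval("Thumbnail", state) }
    return work()
}
```

Each call then shows up as its own interval in a signpost-aware instrument, which answers both questions: how many calls there were and how long each one took.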

To add the SwiftUI body instrument, I click the plus button in the top right of the toolbar. This shows the Instruments library. This is the list of all the instruments the Instruments application has to offer. There are a lot. You can even write your own custom instruments.

I'll enter "SwiftUI" in the filter field, and two instruments show up. I'll pick the "View body" instrument and drag it into the document window to add it. Now, because this instrument wasn't in the document when we last recorded, it has no data to display. But no problem. We'll just record again.

To save some time, I've done that already.

After I recorded with the SwiftUI View Body instrument in the document, the View Body track also shows some data now. There are a lot of intervals in the SwiftUI view bodies track. It's a little cramped, so I press Ctrl+Plus to increase its height. The SwiftUI View Body track groups the intervals by the library they are implemented in. Each interval is one view body execution. Let's zoom into our hang again.

In the second lane, there are a lot of orange intervals all labeled "BackgroundThumbnailView". This tells us precisely how many body executions there were and how long each one took. The orange color indicates that the runtime of that specific body execution took a little longer than what we are aiming for with SwiftUI. But the bigger problem seems to be how many intervals there are. In the detail view, there's a summary of all the body intervals. By clicking on the disclosure indicator next to Backyard Birds, I can reveal the individual view types in Backyard Birds. This shows me that BackgroundThumbnailView's body was executed 70 times with an average duration of about 50 milliseconds, leading to a total duration of over three seconds. This explains almost all of our hang duration. But 70 times seems excessive when we only need to show six images up front. This is a case where the body should be called less often, so we need to look at the callers of our body getter to find out why it's called this often and look at how to reduce it. To easily navigate to the relevant code, I select the main thread track again, secondary-click on the BackgroundThumbnailView.body.getter node in the call tree to show a context menu, and select "Reveal in Xcode".

This opens our body implementation right in Xcode. Let's find out how this view is used by secondary-clicking the type and choosing "Find", "Find Selected Symbol in Workspace". The first result in the Find navigator is already what we're looking for.

Here, our "BackgroundThumbnailView" is used inside a ForEach inside a GridRow inside another ForEach inside a Grid. Grid eagerly computes its whole content when it's created, so it will compute all background thumbnails even though we only need the first few. But there's an alternative: LazyVGrid. It only computes as many views as necessary to fill one screen. Lots of views in SwiftUI have lazy variants, which only compute as many views as necessary, and this can often be an easy way to do less work. However, the eager variants use much less memory when they need to render the same contents. Use the regular eager variants by default and switch to lazy variants when you find a performance issue related to doing too much work upfront.
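A lazy grid along these lines might look like the following sketch. The view name `BackgroundPicker`, the `backgroundNames` property, and the column configuration are assumptions made for this example. The actual Backyard Birds code is not shown in this session transcript.

```swift
import SwiftUI

// Hypothetical picker view; names and layout values are illustrative.
struct BackgroundPicker: View {
    let backgroundNames: [String]

    // Adaptive columns: as many columns as fit, each at least 120 points wide.
    private let columns = [GridItem(.adaptive(minimum: 120))]

    var body: some View {
        ScrollView {
            // LazyVGrid only creates the cells needed to fill the visible area,
            // whereas Grid would build every cell up front.
            LazyVGrid(columns: columns) {
                ForEach(backgroundNames, id: \.self) { name in
                    Image(name)
                        .resizable()
                        .aspectRatio(contentMode: .fit)
                }
            }
        }
    }
}
```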

Our WWDC 2020 session "Stacks, Grids, and Outlines in SwiftUI" introduces these lazy variants and describes them in more detail.

Let's profile this updated code.

I start recording and reproduce our hang by tapping the Choose Background button again. Now, this is much better. There was still a small delay, but not nearly as bad as before. Instruments confirms this. The hang we recorded now took less than 400 milliseconds. It's a micro hang. The "View Body" track also shows us that we now only got eight BackgroundThumbnailView body executions, which fits our expectation. Maybe this is good enough. The micro hang is not very noticeable. Let's make sure it also works well on other device types by profiling Backyard Birds on an iPad.

Here, I'm running Backyard Birds on an iPad. I'm already in the detail view. I tap the "Choose Background" button and it takes a long time for the sheet to appear. Once it appears, we can see why. There are a lot more thumbnails now because our screen is bigger and has more space. Instruments also recorded this hang.

Focusing the inspection range on our hang interval, we see more BackgroundThumbnailView bodies again. It makes sense. Now we need to render about 40 of them for a full screen as many more fit on screen. So the same code performed mostly OK on an iPhone but was slow on an iPad, simply because the screen was bigger. This is one of the reasons why you should also fix micro hangs. What you might see as a micro hang during testing at your desk might be a major hang for some of your users under different conditions. We now only render as many views as we need to fill the screen, so we exhausted our optimization potential in terms of calling this less often. Let's find out what we can do to make each individual execution faster.

I'll set the inspection range to a single BackgroundThumbnailView interval and switch back to the "Main Thread" track. Instruments shows our view body getter in the heaviest backtrace view and shows that it calls a "BackyardBackground.thumbnail" property getter.

This is the model object which provides the thumbnail image to display in our view. This thumbnail getter calls "UIImage imageByPreparingThumbnailOfSize:". So we seem to be computing a thumbnail on the fly here. That can take some time. In this case, about 150 milliseconds. This is work we should rather be doing in the background and not keep the main thread busy with.

To better understand what change we can make, I want to look at the context in which the thumbnail getter is called.

I secondary-click on the "BackgroundThumbnailView.body.getter" frame in the heaviest stack trace view and choose "Open in Source Viewer". This replaces the call tree view with a source viewer that shows the implementation of our body getter and annotates the lines of the implementation with the Time Profiler samples to show where our code spent how much time.

Our body implementation is really simple here; it just makes a new Image view with the thumbnail returned by the background. But this thumbnail call takes a long time. I have an idea how to write it differently. To jump to Xcode, I click on the menu button in the top right and choose "Open file in Xcode".

As before, this shows our source code in Xcode, ready to make changes.

What I want to do now is to load the thumbnail in the background, and while the loading is happening, display a progress indicator. First, we need a state variable to hold the loaded thumbnail.

Then, in the body, if we have the loaded image already, we are going to use it in the Image view. Otherwise, we show a progress view.

Now all that's left is loading the actual thumbnail. We want to start loading it once our view appears. That's what the ".task" modifier is for.
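The three steps just described (a state variable, a conditional body, and a `.task` modifier) could be sketched as follows. The `BackyardBackground` type shown here is a hypothetical stand-in with an assumed `fullSizeImage` property and thumbnail size. Only the names `BackgroundThumbnailView`, `thumbnail`, and the use of UIKit's thumbnail preparation come from the session.

```swift
import SwiftUI
import UIKit

// Hypothetical stand-in for the app's model type.
struct BackyardBackground {
    let fullSizeImage: UIImage

    // Synchronous getter that computes the thumbnail on the fly.
    var thumbnail: UIImage {
        fullSizeImage.preparingThumbnail(of: CGSize(width: 128, height: 128)) ?? fullSizeImage
    }
}

struct BackgroundThumbnailView: View {
    let background: BackyardBackground

    // Holds the thumbnail once it has been loaded.
    @State private var image: UIImage?

    var body: some View {
        Group {
            if let image {
                Image(uiImage: image)
            } else {
                ProgressView()
            }
        }
        .task {
            // Starts when the view appears; assigning to `image` updates the view.
            image = background.thumbnail
        }
    }
}
```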

On appear, SwiftUI will start a task for us that will call the "thumbnail" getter and assign the result to our "image", which will update our view. Let's try it out! So here, with Instruments recording, I tap the "Choose Background" button and the sheet comes right up! Great! We saw our progress indicators, and a few seconds later, our thumbnails were displayed. This worked. Nice! But wait, Instruments is still showing a hang of almost two seconds. What happened here is that the hang happens slightly later now. Let me show you where it happens in the Backyard Birds app. I'm in the detail view already. In a moment, I'll tap the "Choose Background" button again and then I'll attempt to dismiss the sheet directly afterwards by tapping the done button. OK, "Choose Background" and "Done".

I tapped multiple times, but while the loading was happening, my taps were ignored. This is the hang that Instruments told us about. It happens after the sheet is displayed.

This is a slightly different type of hang. We already talked about the difference between the main thread being busy or being blocked. There is another way to look at hangs: what they are caused by and when they occur. We call these synchronous and asynchronous hangs.

Here, we have the main thread doing some work. If, when an event comes in, it takes a long time to process that event, then that's a hang. Let's say we get that under control and make sure our events are handled quickly. But maybe we just delayed some work to be done later on the main thread, or some other main thread work happens, and then an event comes in. Then that event has to wait for the previous work to be done before it can be handled. Then this still causes a hang, even though the code for each individual event handling finishes quickly. The way hang detection works on our platforms is that it looks at all work items on the main thread and checks whether they are too long. If so, it marks them as a potential hang. And it does that irrespective of whether there was user input because user input could come in at any time and then we would have an actual hang. This means hang detection also detects these asynchronous or delayed cases, but it only measures the potential delay, not the actually experienced delay.

We call asynchronous hangs asynchronous because they are often caused by "dispatch_async"ing work on the main queue or by a Swift Concurrency task that runs asynchronously on the main actor. But they could be caused by anything that causes work on the main thread. The first hang we saw was a synchronous hang. We tapped a button, that button tap caused long-running work, so the result was displayed late.
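Both of the typical causes mentioned here can be sketched in a few lines. The function names are hypothetical, and `computeAllThumbnails` stands in for any expensive synchronous work. In both variants the scheduling call returns immediately, but the expensive work later runs on the main thread and delays any event that arrives in the meantime.

```swift
import Foundation

/// Hypothetical placeholder for expensive, synchronous work.
func computeAllThumbnails() {
    // ... long-running computation ...
}

/// Variant 1: work dispatched asynchronously onto the main queue.
func scheduleWithDispatch() {
    DispatchQueue.main.async {
        computeAllThumbnails() // runs later, but still on the main thread
    }
}

/// Variant 2: an unstructured task created from main-actor code.
@MainActor
func scheduleWithTask() {
    Task {
        // The task inherits main-actor isolation from its surrounding context,
        // so this synchronous call also runs on the main thread.
        computeAllThumbnails()
    }
}
```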

This most recent hang is an asynchronous or delayed hang. Tapping the Done button doesn't actually cause any expensive work by itself. But there was still work on the main thread preventing the tap from being handled. So while someone using the app might not even notice if they don't interact with the app during this time, we should still fix these cases, in case they do. Let's do that now.

So here I'm back in Instruments and I've already set the selection range to our async hang and zoomed in. In the summary view of the view body track, Instruments shows us that there were now 75 calls to our BackgroundThumbnailView's body getter.

This is because most thumbnail body getters are executed twice. SwiftUI creates 40 views with progress indicators to fill the grid. But then only 35 actually end up being displayed, and for those 35, we start loading the image, and once the image is loaded, the view updates and the body is called again, giving us a total of 75 body getter executions.

Even all 75 body getters in total took much less than one millisecond. So our body getters are fast now. That part worked. But we still have a hang. I'm going to select the "Main Thread" track again and in the heaviest stacktrace view, Instruments shows us that it's still the thumbnail getter that takes a long time on the main thread. This time, it's called by a closure inside our "BackgroundThumbnailView.body.getter", not the body getter directly. I double-click it, which is a shortcut to open the source viewer. Now this is exactly the code we expected to execute in the background due to being in the task modifier closure. This code should run at this time, but it should not run on the main thread. For issues like this, where Swift Concurrency tasks don't execute the way you expect them to, we have another useful instrument: the Swift Concurrency Tasks instrument.

I've already recorded the same behavior with the Swift Concurrency Tasks instrument added. The Swift Tasks instrument adds a summary track to the document but what's more interesting for our case is the data it contributes to each thread track. Here, in the main thread track, there's a new graph from the Swift Tasks instrument. A single track can show multiple graphs. By clicking on the little downward arrow in the thread track header, I can configure which graphs to show. I can either choose another graph, like the Time Profiler's CPU Usage graph or hold down the Command key while clicking to select multiple. So now Instruments is showing both the CPU Usage and the Swift Tasks graph for this thread together. I'm gonna zoom in to our hang interval again. The "Swift Tasks" lane now clearly displays that there are a bunch of task executions on the main thread. Setting the inspection range to one of them and checking the heaviest stack trace in the Profile view confirms that this task is wrapping our thumbnail computation work.

So this work is correctly wrapped in a task like we wanted. But the task is executing on the main thread, which is unexpected.

Let me explain what's going on here. First, the body getter inherits the @MainActor annotation from SwiftUI's View protocol. Because "body" is annotated as "@MainActor" in the "View" protocol, when we implement it, the body getter is also implicitly annotated as @MainActor. Second, the ".task" modifier's closure is annotated to inherit the actor isolation of the surrounding context. So because the body getter is isolated to the MainActor, the task closure will be as well. So all the code running in this closure will be running on the main actor by default, and because the "thumbnail" getter is synchronous, it now synchronously runs on the main thread.

Swift Concurrency Tasks, by default, inherit the actor isolation of the surrounding context. The same behavior is true for SwiftUI's .task modifier. There are two ways to get off of the main actor. Asynchronously calling a function that's not bound to the main actor allows the task to go off of the main actor. There may be cases where this is not feasible. Then, you can explicitly detach the task from the surrounding actor context by using "Task.detached", but it is a heavy-handed approach, and creating a separate task is more expensive than simply suspending an existing one. SwiftUI will also automatically cancel the task created via the task modifier when the corresponding view disappears, but this cancellation will not propagate to a new unstructured task, like Task.detached. To learn more, check out "Visualize and optimize Swift concurrency" from WWDC22 and our documentation on improving app responsiveness.
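For comparison, the second option, explicitly detaching, might look like the sketch below. The view name `DetachedThumbnailView` and the `loadThumbnail` closure are hypothetical and only serve to keep the example self-contained. As noted above, this approach is heavier and loses the automatic cancellation.

```swift
import SwiftUI
import UIKit

// Hypothetical view that takes a synchronous loader closure.
struct DetachedThumbnailView: View {
    let loadThumbnail: @Sendable () -> UIImage

    @State private var image: UIImage?

    var body: some View {
        Group {
            if let image {
                Image(uiImage: image)
            } else {
                ProgressView()
            }
        }
        .task {
            let load = loadThumbnail
            // Task.detached does not inherit the main-actor isolation, so the
            // synchronous loader runs off the main thread. Cancellation of the
            // surrounding .task does not propagate into this detached task.
            let loaded = await Task.detached { load() }.value
            image = loaded // back on the main actor to update the UI
        }
    }
}
```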

Because in our case we are already in an asynchronous context, and it's easy to make the thumbnail function nonisolated and asynchronous, we are going to pick option one.

Here, we have our thumbnail loading code. The issue is that this task will execute on the main actor due to inheriting the main actor isolation of the body getter and as the thumbnail getter is synchronous, it will also stay on the main actor. The fix is simple. We jump to the definition of the thumbnail getter, we make the getter async, then we go back to our view struct...

And because our getter is now async, we need to add await in front of it.
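Put together, the change could look roughly like this sketch. As before, `BackyardBackground` is a hypothetical stand-in with an assumed `fullSizeImage` property and thumbnail size. The sketch also assumes the language behavior described in this session, where awaiting an async function that is not bound to an actor lets it run off the caller's actor.

```swift
import SwiftUI
import UIKit

// Hypothetical stand-in for the app's model type.
struct BackyardBackground {
    let fullSizeImage: UIImage

    // Async getter that is not bound to the main actor.
    var thumbnail: UIImage {
        get async {
            fullSizeImage.preparingThumbnail(of: CGSize(width: 128, height: 128)) ?? fullSizeImage
        }
    }
}

struct BackgroundThumbnailView: View {
    let background: BackyardBackground
    @State private var image: UIImage?

    var body: some View {
        Group {
            if let image {
                Image(uiImage: image)
            } else {
                ProgressView()
            }
        }
        .task {
            // The task suspends here while the getter runs elsewhere,
            // then resumes on the main actor to assign the result.
            image = await background.thumbnail
        }
    }
}
```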

This should allow the "thumbnail" getter to execute on Swift Concurrency's concurrent thread pool instead of the main thread. Let's try it. I'm in the detail view again, and tap "Choose Background". Wow. That was fast! Not only was there no hang, but it also seemed like the overall loading was faster. I barely saw the progress views. Instruments confirms there was no hang now. There is some high CPU usage right here. Let me zoom into that. This is where the thumbnail loading now happens. Checking the main thread, we can confirm that all task intervals on the main thread are now very short. Scrolling down to the other thread tracks reveals that our Swift tasks are now executing on other threads in parallel instead of sequentially which makes much better use of our multi-core CPUs. This allows us to compute all the thumbnails in a few hundred milliseconds instead of almost 1.5 seconds. And during all this time, the main thread remains responsive, so we've fixed this one for good now.

We've now investigated, and fixed, an unresponsive main thread that was caused by the main thread being busy, which we could identify by the main thread using a lot of CPU during the hang. We've also experienced how a hang can be synchronous, when it happens directly as part of the user interaction, or asynchronous, where work that was scheduled on the main thread earlier causes an incoming event to be processed late, and how Instruments can detect both cases. And we've fixed a hang both by doing less work and by moving work we can't do less of to the background, only coming back to the main thread to update the UI. But there's one case we haven't yet looked at: a blocked main thread, in which case the main thread will use very little CPU. The other dimensions apply to a blocked main thread the same way, but other instruments are necessary to analyze such a case.

Let's look at an example now.

Here I have a trace file from another hang. I already zoomed into the hang. It's a long one; several seconds. In the "Main Thread" track, the CPU Usage graph shows us that there is some initial CPU usage, but then, nothing. This is a clear case of a blocked main thread. We talked about how Time Profiler gathers its data by sampling what's running on the CPU.

When we zoom in, the CPU Usage graph even shows the individual samples. So each of these markers here is a sample that Time Profiler took. There are a few more samples to the right, but then nothing. But when I select a time range without samples, Time Profiler cannot tell us what's going on, as it didn't record any data during this time. So we need a different tool: the Thread State Trace instrument. Like the other instruments before, you can add it from the Instruments library. I've already recorded the same hang again, this time with the "Thread State Trace" instrument added.

There's a new track for this instrument now. But like the "Swift Concurrency" instrument, the data that's interesting to us is actually in the "thread" tracks. So there is this really long "blocked" interval here in the main thread, over six seconds, which explains most of our hang duration. When I click in the middle of it, Instruments' time cursor moves there, which also updates the Narrative view in the detail area to show the entry for this blocked state. The Narrative view tells us the story of the thread; what it was doing, when, and why.

For the selected time, it tells us that the thread was blocked for 6.64 seconds and it was blocked because it was calling mach_msg2_trap, a syscall. On the right, there's a backtrace view again. But this backtrace is not a heaviest backtrace-- it's not some aggregation. It is the precise backtrace of the mach_msg2_trap syscall that caused the thread to be blocked. The function call is displayed as the leaf node at the bottom and its call stack is displayed above. The call stack tells us that the syscall happened as a result of allocating an MLModel, which in turn happened due to allocating an object of type "ColorizingService", which was called as part of a singleton property called "shared" on that colorizing service, which, in turn, was called by a closure in a body getter. If we double-click that closure, we jump to the Source Viewer again and can find the code where this was called. This line looks harmless, right? Let's take a closer look.

We are accessing the shared property of ColorizingService and storing it in a local variable. Except it's not harmless, because the shared property creates the shared ColorizingService instance the first time it's accessed, and that, in turn, kicks off the whole model loading machinery, which blocks the thread. So you might be tempted to say, "Let's just move this inside the async part after 'await'." However, counterintuitively, this does not solve the problem. The "await" keyword only applies to asynchronous function calls in the subsequent code. In our example the "colorize" function is "async". But the "shared" property is not. Because it's a static let property, it will be initialized lazily the first time it is accessed, and that happens synchronously. The await keyword doesn't change that, so the synchronous call would still happen on the main thread. We can just fix this the same way as we did in our previous example, by making the shared property "async" as well to get off of the main actor. This is generally OK when you are waiting for work that is being done elsewhere on your thread's behalf and forward progress is being made. However, another common reason for blocked threads is locks or semaphores. For best practices to keep in mind and what to avoid when using locks and semaphores with Swift concurrency, watch our session "Swift concurrency: Behind the scenes" from WWDC21.
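One possible shape for that fix is sketched below. It is an assumption about how the async property might be written, not the sample app's actual code: the private `instance` property and the `colorizedImage(from:)` function are hypothetical, `String` stands in for the image type, and the model loading is reduced to a comment. The point is that the lazy, synchronous initialization is now triggered from inside an async getter that is not bound to the main actor, so the main actor only suspends at the `await`.

```swift
final class ColorizingService: Sendable {
    // Still initialized lazily and synchronously on first access, but the
    // first access now happens inside the async getter below.
    private static let instance = ColorizingService()

    // Async getter that is not bound to the main actor: callers on the
    // main actor must await it, so the expensive first access can run
    // off the main actor.
    static var shared: ColorizingService {
        get async { instance }
    }

    init() {
        // The expensive, blocking model loading would happen here.
    }

    // Placeholder for the real colorization work.
    func colorize(_ image: String) async throws -> String {
        "colorized(\(image))"
    }
}

@MainActor
func colorizedImage(from image: String) async throws -> String {
    // Both steps are now suspension points for the main actor.
    let colorizer = await ColorizingService.shared
    return try await colorizer.colorize(image)
}
```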

Before we wrap up, I want to talk about one other case related to blocked main threads. Here is the trace we looked at a moment ago. On the right is the hang we just investigated with the blocked main thread. But to the left of it, there are some other cases where the main thread is blocked for multiple seconds, but Instruments doesn't flag this as a potential hang. Here, the main thread is just asleep because there was no user input. From the operating system's perspective, it is blocked, but it's just saving resources by not running when there is nothing to do. As soon as input comes in, it will wake up and handle it. So to determine whether a blocked thread is a responsiveness issue or not, look to the Hangs instrument, not the Thread State Trace instrument.

So a blocked main thread does not imply an unresponsive main thread. Similarly, high CPU usage also doesn't imply that the main thread is unresponsive. But if the main thread is unresponsive, that means it was either blocked or busy. Our hang detection takes all these details into account and will only label intervals where the main thread was actually unresponsive and show them as potential hangs.

If you remember only one thing from this session, let it be this: whatever work you are doing on the main thread, it should be done in less than 100 milliseconds to free the main thread for event handling again. The shorter, the better. To analyze hangs in detail, Instruments is your best friend. Remember the distinction between a busy and a blocked main thread and remember that hangs can also be caused by asynchronous work on the main thread. To fix hangs, you want to do less work or move work to the background. Sometimes, even both. And doing less work often just means using the right API for the job. In general, measure first and check whether there is actually a hang before optimizing. There are certainly some best practices, but concurrent and asynchronous code is also much harder to debug. You'll often be surprised by all the things that are actually very fast and what actually ends up being slow.
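As a quick sanity check before reaching for Instruments, you could time a piece of synchronous work against the 100 millisecond budget. This is only a sketch: `HangBudget`, `exceedsBudget`, and `timed` are hypothetical helper names, and a stopwatch like this tells you far less than a trace does, since it cannot show whether the thread was busy or blocked.

```swift
// Budget from this session: main thread work should finish in under 100 ms.
enum HangBudget {
    static let mainThread: Duration = .milliseconds(100)
}

// Returns true when a measured duration is over the budget.
func exceedsBudget(_ elapsed: Duration) -> Bool {
    elapsed > HangBudget.mainThread
}

// Runs `work` synchronously and reports how long it took.
// ContinuousClock requires iOS 16 / macOS 13 or later.
func timed(_ label: String, _ work: () -> Void) -> Duration {
    let elapsed = ContinuousClock().measure(work)
    if exceedsBudget(elapsed) {
        print("\(label) took \(elapsed), which is over the 100 ms budget")
    }
    return elapsed
}
```

Wrapping a suspect call, for example `timed("thumbnail") { ... }`, gives a first hint about whether it is worth recording a trace at all.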

Have fun finding, analyzing, and fixing all your hangs. Thank you for watching. ♪ ♪

19:38 - BackgroundThumbnailView

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    var body: some View {
        Image(uiImage: background.thumbnail)
    }
}

19:58 - BackgroundSelectionView with Grid

var body: some View {
    ScrollView {
        Grid {
            ForEach(backgroundsGrid) { row in
                GridRow {
                    ForEach(row.items) { background in
                        BackgroundThumbnailView(background: background)
                            .onTapGesture {
                                selectedBackground = background
                            }
                    }
                }
            }
        }
    }
}

20:03 - BackgroundSelectionView with Grid (simplified)

var body: some View {
    ScrollView {
        Grid {
            ForEach(backgroundsGrid) { row in
                GridRow {
                    ForEach(row.items) { background in
                        BackgroundThumbnailView(background: background)
                    }
                }
            }
        }
    }
}

20:26 - LazyVGrid variant

var body: some View {
    ScrollView {
        LazyVGrid(columns: [.init(.adaptive(minimum: BackgroundThumbnailView.thumbnailSize.width))]) {
            ForEach(BackyardBackground.allBackgrounds) { background in
                BackgroundThumbnailView(background: background)
            }
        }
    }
}

24:05 - BackgroundThumbnailView

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    var body: some View {
        Image(uiImage: background.thumbnail)
    }
}

24:59 - BackgroundThumbnailView with progress (but without loading)

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    @State private var image: UIImage?
    var body: some View {
        if let image {
            Image(uiImage: image)
        } else {
            ProgressView()
                .frame(width: Self.thumbnailSize.width, height: Self.thumbnailSize.height, alignment: .center)
        }
    }
}

25:26 - BackgroundThumbnailView with async loading on main thread

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    @State private var image: UIImage?
    var body: some View {
        if let image {
            Image(uiImage: image)
        } else {
            ProgressView()
                .frame(width: Self.thumbnailSize.width, height: Self.thumbnailSize.height, alignment: .center)
                .task {
                    image = background.thumbnail
                }
        }
    }
}

29:59 - BackgroundThumbnailView with async loading on main thread

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    @State private var image: UIImage?
    var body: some View {
        if let image {
            Image(uiImage: image)
        } else {
            ProgressView()
                .frame(width: Self.thumbnailSize.width, height: Self.thumbnailSize.height, alignment: .center)
                .task {
                    image = background.thumbnail
                }
        }
    }
}

31:41 - BackgroundThumbnailView with async loading on main thread (simplified)

struct BackgroundThumbnailView: View {
    // [...]
    var body: some View {
        // [...]
        ProgressView()
            .task {
                image = background.thumbnail
            }
        // [...]
    }
}

33:40 - BackgroundThumbnailView with async loading on main thread

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    @State private var image: UIImage?
    var body: some View {
        if let image {
            Image(uiImage: image)
        } else {
            ProgressView()
                .frame(width: Self.thumbnailSize.width, height: Self.thumbnailSize.height, alignment: .center)
                .task {
                    image = background.thumbnail
                }
        }
    }
}

33:59 - synchronous thumbnail property

public var thumbnail: UIImage {
    get {
        // compute and cache thumbnail
    }
}

34:03 - asynchronous thumbnail property

public var thumbnail: UIImage {
    get async {
        // compute and cache thumbnail
    }
}

34:08 - BackgroundThumbnailView with async loading in background

struct BackgroundThumbnailView: View {
    static let thumbnailSize = CGSize(width:128, height:128)
    var background: BackyardBackground
    @State private var image: UIImage?
    var body: some View {
        if let image {
            Image(uiImage: image)
        } else {
            ProgressView()
                .frame(width: Self.thumbnailSize.width, height: Self.thumbnailSize.height, alignment: .center)
                .task {
                    image = await background.thumbnail
                }
        }
    }
}

38:52 - shared property causes blocked main thread

var body: some View {
    mainContent
        .task(id: imageMode) {
            defer { loading = false }
            do {
                var image = await background.thumbnail
                if imageMode == .colorized {
                    let colorizer = ColorizingService.shared
                    image = try await colorizer.colorize(image)
                }
                self.image = image
            } catch {
                self.error = error
            }
        }
}

39:00 - shared property causes blocked main thread (simplified)

struct ImageTile: View {
    // [...]
    // implicit @MainActor
    var body: some View {
        mainContent
            .task() { // inherits @MainActor isolation
                // [...]
                let colorizer = ColorizingService.shared
                result = try await colorizer.colorize(image)
            }
    }
}

39:10 - shared property causes blocked main thread + ColorizingService (simplified)

class ColorizingService {
    static let shared = ColorizingService()
    // [...]
}
struct ImageTile: View {
    // [...]
    // implicit @MainActor
    var body: some View {
        mainContent
            .task() { // inherits @MainActor isolation
                // [...]
                let colorizer = ColorizingService.shared
                result = try await colorizer.colorize(image)
            }
    }
}

39:25 - shared synchronous property after await keyword still causes blocked main thread

class ColorizingService {
    static let shared = ColorizingService()
    // [...]
}
struct ImageTile: View {
    // [...]
    // implicit @MainActor
    var body: some View {
        mainContent
            .task() { // inherits @MainActor isolation
                // [...]
                result = try await ColorizingService.shared.colorize(image)
            }
    }
}

class ColorizingService {
    static let shared = ColorizingService()
    func colorize(_ grayscaleImage: CGImage) async throws -> CGImage
    // [...]
}
struct ImageTile: View {
    // [...]
    // implicit @MainActor
    var body: some View {
        mainContent
            .task() { // inherits @MainActor isolation
                // [...]
                result = try await ColorizingService.shared.colorize(image)
            }
    }
}
