
Render Metal with passthrough in visionOS

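Get ready to extend your Metal experiences to visionOS. Learn best practices for integrating your rendered content with people's physical environments using passthrough. Find out how to position rendered content to match the physical world, reduce latency with trackable anchor prediction, and more.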

Chapters

- 0:00 - Introduction

- 1:49 - Mix rendered content with surroundings

- 9:20 - Position render content

- 14:49 - Trackable anchor prediction

- 19:22 - Wrap-up

Resources

- Interacting with virtual content blended with passthrough

- Improving rendering performance with vertex amplification

- Rendering a scene with deferred lighting in Swift

- How to start designing assets in Display P3

- Forum: Graphics & Games

- Metal Developer Resources

- Rendering at different rasterization rates

Related Videos

WWDC24

WWDC23


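Hi, I'm Pooya, an engineer at Apple. Today I'll show you how to extend your fully immersive Metal experiences to the mixed immersion style, so that you can get the most out of visionOS. The good news is that the tools and frameworks used here are probably ones you already know.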

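Last year, we showed how to use ARKit, Metal, and Compositor Services to create fully immersive experiences with your own rendering engine. This year, we're introducing rendering in the mixed immersion style, which uses Metal to blur the line between the real world and your imagination.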

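You might already have used Compositor Services to implement a rendering engine with Metal, and ARKit together with RealityKit rendering. Once you create a rendering session with Compositor Services, you can render beautiful frames with the Metal API and access world tracking and hand tracking through ARKit.

In this session, I'll show you how to build an app that uses the Metal, ARKit, and Compositor Services APIs to create a mixed immersion experience. The first step is to blend the content your app renders seamlessly with the physical surroundings. Next, I'll explain how to improve the positioning of rendered content relative to the physical environment. Finally, I'll explain how to obtain and use the correct prediction time for the pose of a trackable anchor, for example when tracking someone's hand and the virtual object it is handling.

Let's start by mixing rendered content with the surroundings. To get the most out of this session, you should be familiar with the Compositor Services API, the Metal API, and rendering with Metal. If you haven't used them before, be sure to check out the earlier videos. Sample code and documentation are also available at developer.apple.com/jp. Let's get started.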

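In the mixed immersion style, both the rendered content and the person's surroundings are visible. A few steps make this effect look as realistic as possible. First, clear the drawable textures to the correct values. These values differ from the ones used in the full immersion style. Next, make sure your rendering pipeline produces the correct color and depth values, for example premultiplied alpha and the P3 color space, both of which visionOS requires.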

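Then, use ARKit scene understanding to anchor rendered content to the real world and to run physics simulations. Finally, specify the type of upper limb visibility that suits your app's content.

The first step in adopting the mixed immersion style is very simple: add mixed as one of the options when you set the immersionStyle. By default, SwiftUI creates a window scene, even if the first scene in your app is an ImmersiveSpace. You can change the default scene behavior by adding the PreferredDefaultSceneSessionRole key to the application scene manifest. If you're using a space with Compositor Space Content, use CPSceneSessionRoleImmersiveSpaceApplication. You can also adjust the InitialImmersionStyle key for the scene. To launch in the mixed immersion style, use UIImmersionStyleMixed.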

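At the start of the render loop, you need to clear the drawable textures. The clear color value depends on the immersion style you render in. This is a typical render loop: the app acquires a new drawable, configures the pipeline state with load and clear actions, encodes the GPU workload, presents the drawable, and commits.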

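In the encoding stage, first clear the color and depth textures, because rendering may not touch every pixel in them. Depth must always be cleared to zero. The correct value for the color texture, however, depends on the immersion style. For the full immersion style, clear the color texture to (0, 0, 0, 1). For the mixed immersion style, use all zeros.

Here's the code. It creates a renderPassDescriptor and defines the color and depth textures. It then sets the load and store actions so that the textures are cleared before rendering starts. Both the depth and color attachments are cleared to zero. Because this example targets the mixed immersion style, the alpha value of the color texture is set to 0.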
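As a rough illustration of the rule above, the clear values can be chosen from the active immersion style. This is only a sketch in plain Swift: `ImmersionKind` and `ClearValues` are hypothetical types made up for this example, not Compositor Services or Metal API. In a real renderer the values would be assigned to the render pass descriptor's `clearColor` and `clearDepth`.

```swift
// Hypothetical stand-ins for illustration; not part of any Apple framework.
enum ImmersionKind {
    case full
    case mixed
}

struct ClearValues: Equatable {
    var red: Double
    var green: Double
    var blue: Double
    var alpha: Double
    var depth: Double
}

/// Returns the clear values described in the session:
/// depth is always 0, and only the color's alpha differs between styles.
func clearValues(for style: ImmersionKind) -> ClearValues {
    switch style {
    case .full:
        // Opaque black for full immersion.
        return ClearValues(red: 0, green: 0, blue: 0, alpha: 1, depth: 0)
    case .mixed:
        // All zeros for mixed immersion, so untouched pixels stay transparent.
        return ClearValues(red: 0, green: 0, blue: 0, alpha: 0, depth: 0)
    }
}

let mixedClear = clearValues(for: .mixed)
print("mixed clear alpha:", mixedClear.alpha, "depth:", mixedClear.depth)
```

Keeping the choice in one function makes it harder to forget the alpha change when an app supports both styles.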

Next, make sure your app renders correctly using the supported color conventions.

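You're probably already familiar with alpha blending, which is used to composite two textures, a foreground and a background, in a realistic way. The visionOS color pipeline uses premultiplied alpha. This means your shader multiplies the color channels by the alpha value before passing them to Compositor Services.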
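To make premultiplied alpha concrete, here is a minimal CPU-side sketch in plain Swift. The `RGBA` type and both functions are hypothetical helpers written for this example; in an app this multiplication would normally happen in the fragment shader. The `over` function is the textbook premultiplied "over" operator and is included only to show why the convention is convenient. It is not a description of how the visionOS compositor blends internally.

```swift
// Hypothetical color type for illustration only.
struct RGBA: Equatable {
    var r: Double
    var g: Double
    var b: Double
    var a: Double
}

/// Converts a straight-alpha color to premultiplied alpha
/// by scaling each color channel by the alpha value.
func premultiplied(_ c: RGBA) -> RGBA {
    RGBA(r: c.r * c.a, g: c.g * c.a, b: c.b * c.a, a: c.a)
}

/// Textbook "over" compositing for premultiplied colors:
/// result = src + (1 - src.a) * dst
func over(_ src: RGBA, _ dst: RGBA) -> RGBA {
    let k = 1 - src.a
    return RGBA(r: src.r + k * dst.r,
                g: src.g + k * dst.g,
                b: src.b + k * dst.b,
                a: src.a + k * dst.a)
}

// A half-transparent red placed over an opaque blue background.
let halfRed = premultiplied(RGBA(r: 1, g: 0, b: 0, a: 0.5))
let blue = RGBA(r: 0, g: 0, b: 1, a: 1)
print(over(halfRed, blue))
```

Note that a fully transparent pixel becomes (0, 0, 0, 0) after premultiplication, which is the same value the mixed immersion style uses as its clear color.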

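The visionOS color pipeline operates in the Display P3 color space. For better color consistency between the content your app renders and passthrough, your assets should be in the Display P3 color space. For details, see the documentation at developer.apple.com/jp.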

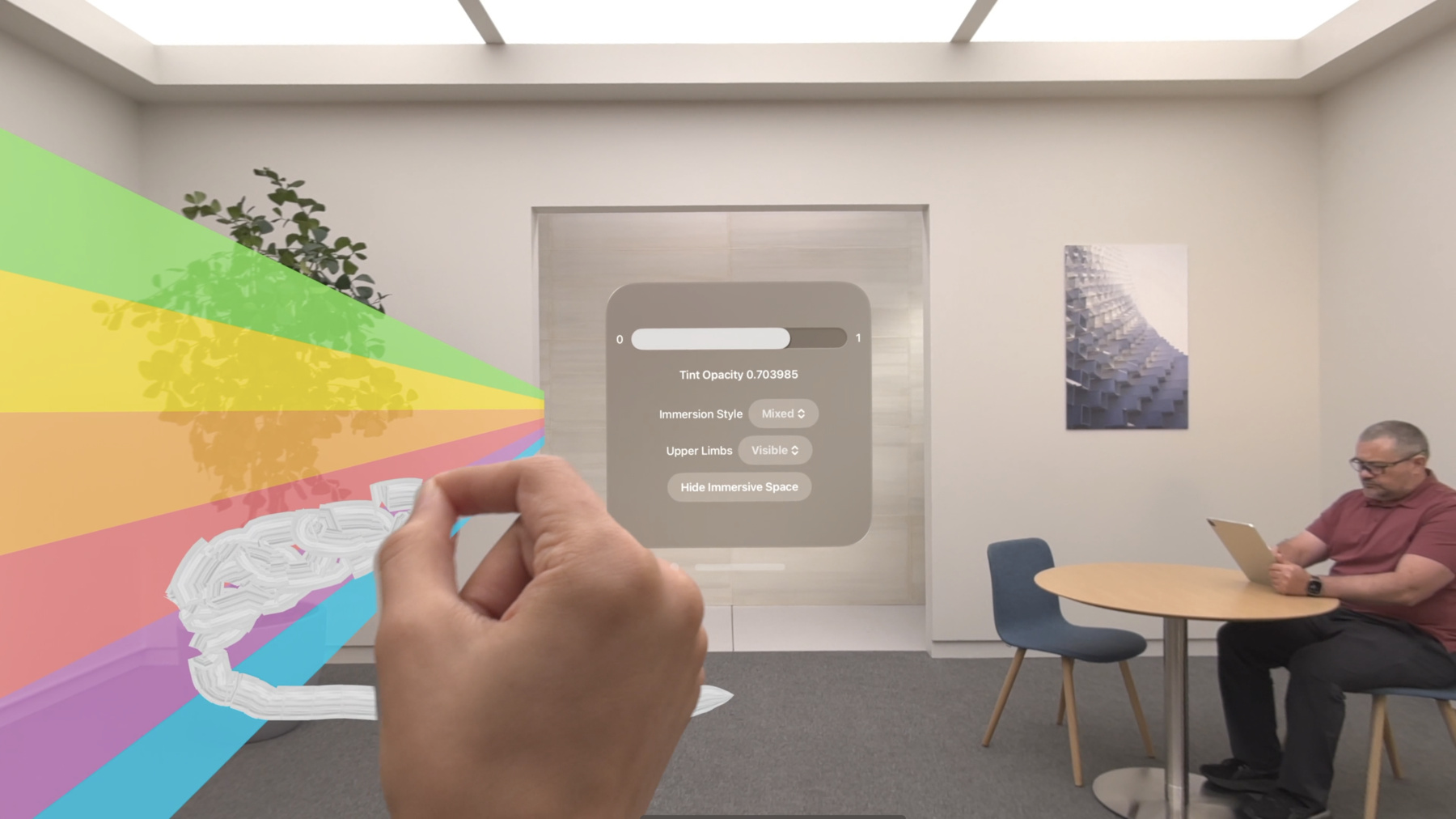

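Compositor Services takes both the color and depth textures from your renderer and performs the compositing. Note that Compositor Services interprets the depth texture as following the reverse-Z convention. Rendered content for a given pixel appears on the display when its alpha channel is greater than 0 and it has a valid perspective depth value. To avoid parallax effects, the renderer must provide a correct depth value for every pixel. In addition, to improve system performance, the renderer should pass a depth value of zero for pixels whose alpha value is zero.

Next, use data from ARKit to add scene understanding to your rendered content. In the mixed immersion style, rendered content appears alongside the person's physical surroundings. For a more realistic experience, integrate scene understanding into your app's rendering logic. With ARKit, you can anchor rendered content to real-world objects and surfaces, run the physics simulation needed for realistic interactions between rendered content and physical objects, and occlude rendered content that is behind physical objects. For more about ARKit on visionOS, watch "Meet ARKit for spatial computing".

You're almost done. The last step is to specify how visionOS displays the person's hands and arms. This is called upper limb visibility. In the mixed immersion style, there are three options for upper limb visibility. The first is Visible. In Visible mode, the hands always appear on top, regardless of the relative depth of objects.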

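The second mode is Hidden. In this mode, the hands are always hidden behind rendered content. The last is Automatic mode. In this mode, hand visibility depends on the depth of the rendered content: hands are shown when they are in front of an object and fade out as they move farther behind it. The system handles this automatically.

Let's look at how this works. Suppose a person wearing the headset is in the mixed immersion style. The scene contains two objects, a red circle and a yellow cube, and the person's hand is also in view. After the scene is rendered, the drawable's depth and color textures look like this. Note that the Compositor Services API expects the renderer's depth texture to contain reverse-Z values. When you set upper limb visibility to Automatic, Compositor Services uses the depth values you wrote to the texture. The framework uses them to decide how to display the hands and arms: fully visible when the hand is in front of an object, and partially hidden when it is behind or inside one.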
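The reverse-Z convention mentioned above can be illustrated with a small sketch. It assumes a projection with an infinite far plane, in which case the depth written for a point is simply the near-plane distance divided by the point's distance from the viewer. That assumption, the function names, and the sample distances are all choices made for this example, and the actual hand occlusion and fading is performed by the system, not by app code like this.

```swift
/// Reverse-Z depth for a point `distance` meters in front of the viewer,
/// ASSUMING an infinite far plane and a near plane at `near` meters.
/// Under that assumption depth = near / distance:
/// 1 at the near plane, approaching 0 as the point moves far away.
func reverseZDepth(distance: Double, near: Double) -> Double {
    precondition(near > 0 && distance >= near, "point must be at or beyond the near plane")
    return near / distance
}

/// In reverse-Z, the larger depth value belongs to the closer surface.
func isCloser(_ a: Double, than b: Double) -> Bool {
    a > b
}

let near = 0.1
let handDepth = reverseZDepth(distance: 0.5, near: near)  // hand half a meter away
let cubeDepth = reverseZDepth(distance: 2.0, near: near)  // cube two meters away

// The hand is in front of the cube, so its reverse-Z depth is larger.
print(isCloser(handDepth, than: cubeDepth))
```

Under the same assumption, a cleared depth of 0 corresponds to "infinitely far away", which is consistent with clearing the depth texture to zero before rendering.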

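In code, it looks like this: use upperLimbVisibility to tell the system which upper limb visibility mode your app requests. Here, we requested Automatic mode.

Once rendered content blends visually with the physical environment, it's important to position it so that it appears to actually exist in that environment. To do that, first transform your content from world coordinate space to normalized device coordinate space. Here I'll show how to use the scene-aware projection matrix to improve content placement. Next, I'll describe the normalized device coordinate conventions that the Compositor Services framework accepts as input. Finally, I'll cover alternative intermediate approaches your rendering engine can use.

To render objects relative to the position and orientation of Vision Pro, you transform content from 3D world space into a 2.5D space, also known as normalized device coordinate space. This transform is called the ProjectionViewMatrix. The ProjectionViewMatrix combines the ProjectionMatrix and the ViewMatrix. The ViewMatrix can be further expanded into two transforms: first, deviceFromOrigin, which transforms origin space into device space, and second, viewFromDevice, which transforms device space into view space.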

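In terms of the visionOS API, the ProjectionViewMatrix equals the ProjectionMatrix multiplied by the inverse of the product of originFromDevice and deviceFromView. You get the deviceFromView transform by calling cp_view_get_transform in the Compositor Services API, which returns the matrix that transforms the rendered view into device space. The originFromDevice transform comes from the ARKit API, and you get it by calling ar_anchor_get_origin_from_anchor.

This year, to improve the alignment of rendered content with real-world objects, you can obtain a scene-aware projection matrix. This matrix combines the camera intrinsics with real-time, per-frame scene-understanding factors to align rendered content better with real-world objects, improving the mixed immersion experience. If your app requests the mixed immersion style through Compositor Services, it must use this new API.

After you transform content from world space to texture coordinate space, your app's drawable color and depth textures look like this after rendering. In texture space, the X axis runs from left to right and the Y axis from top to bottom. Note that the expected depth values follow the reverse-Z convention.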

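Looking more closely, and taking into account that visionOS uses a foveated color pipeline, the drawable textures look like this in screen space and in physical space. This means the physical-space size is smaller than the texture dimensions in screen space. Screen space is where the person perceives the values; physical space is where the actual values are stored in memory.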

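For more about how foveated rendering works, see "Rendering at different rasterization rates" at developer.apple.com.

Now let's look more closely at the normalized device coordinate space that Compositor Services expects for the drawable. The normalized device coordinate space has three axes: the horizontal X axis, the vertical Y axis, and the perpendicular winding order. All of them affect the orientation of your renderer.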

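Compositor Services expects the normalized device coordinate space used to render both the color and depth textures to have the X axis running left to right, the Y axis running bottom to top, and the winding order running front to back. However, your rendering engine may render intermediate textures in a different normalized device coordinate space. Compositor Services can also provide the scene-aware projection matrix in various conventions, for example with the Y axis or the winding order flipped. Ultimately, your rendering engine is responsible for making sure the drawable textures follow the Compositor Services conventions.

Now let's walk through sample code that composes a projection view matrix from the visionOS API. After you get the deviceAnchor for the given presentationTime, pass the deviceAnchor to the Compositor Services API.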

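Using that deviceAnchor, iterate over the drawable's views to compute a projectionViewMatrix for each view. First, get the corresponding view. Next, use the ARKit API to get the originFromDevice transform, and use the Compositor Services API to get the deviceFromView transform. Combine the two to create the viewMatrix, which gives you view from origin. Call the computeProjection API to get the projection matrix for the given view in the Compositor Services convention, the .rightUpBack space. Finally, multiply the projection by the viewMatrix to compose the projectionViewMatrix. Note that your app must obtain these transforms every frame; it can't reuse transforms from earlier frames.

Now that your rendered content is positioned, let's consider what happens when people interact with it. An anchor represents a position and orientation in the real world. Trackable anchors are a set of entities for which the system can gain and lose tracking over the course of a session. You can use trackable anchors to position rendered content. A person's hand, for example, is a trackable anchor entity.

To set up trackable anchors, first authorize the connection to ARKit: use the session to request authorization for the types of data you want to access. Next, set up a provider. Data providers let you poll for or observe data updates, such as anchor changes, and ultimately get trackable anchors from the ARKit API. Each frame, in the render loop, first update your renderer's logic and then submit the data to Compositor Services.

Let's take a closer look at the render loop. It consists of two stages. The first is the update stage, which contains simulation-side logic such as interactions and physics simulation. This stage typically runs on the CPU. Computing the simulation logic accurately requires the poses of both the trackable anchors and the device. The second is the submit stage, in which the final result is rendered to a texture. This work happens on the GPU.

The anchor pose prediction is more accurate the closer the prediction query is made to the frame's presentation time. For better anchor results, query the anchor and device poses again so that the results are as accurate as possible. This diagram shows how timing affects the different sections of a frame. You can get four different timings from Compositor Services. The frame timeline represents the work your app is doing.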

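Optimal input time is the time by which your app finishes non-critical tasks, such as interactions and physics simulation. At the start of the update stage, the app queries both the trackable anchors and the device anchor. Just after the optimal input time is the best moment to query latency-critical input and start rendering the frame. The rendering deadline is the time by which the CPU and GPU should finish rendering the frame. Trackable anchor time is the time at which the cameras see the surroundings; use this time for trackable anchor prediction. Finally, presentation time is the time at which the frame appears on the display; use this time for device anchor prediction.

For example, picture a scene in the device's field of view. It contains a red sphere held in a person's hand, and there's also a yellow cube in the environment. After rendering, this is how it looks on the device's display. For rendered content that isn't attached to a trackable anchor, like the yellow cube, you can query the device pose at presentation time and compute its transform relative to the device transform. For rendered content attached to a trackable anchor, like the red sphere, you use both the trackable anchor's position and the device's position. For trackable anchor prediction, use the trackable anchor time.

Now let's look at the sample code. In the render loop, the app should handle non-critical work before it gets the head pose and the trackable anchor poses, which improves prediction accuracy. Once the non-critical work is done, the app starts its workload after the optimal input time. At the start of the anchor-dependent work, first get both the presentationTime and the trackableAnchorTime from the frameTiming data.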

Then convert those timestamps to seconds.

Query the deviceAnchor using the presentationTime, and query the trackable anchor using the trackableAnchorTime. If the trackable anchor is tracked, run your logic relative to its position. To learn more about the ARKit API, watch "Create enhanced spatial computing experiences with ARKit".

You now have the tools you need to create great mixed immersion experiences on visionOS. With Compositor Services and Metal, you can set up a render loop and display 3D content, and with ARKit, you can make your experience interactive. To learn more, check out these videos. Thanks for watching.


3:07 - Add mixed immersion

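    import SwiftUI
    import CompositorServices

    @main
    struct MyApp: App {
        // Currently selected immersion style; the app starts in mixed.
        @State private var style: ImmersionStyle = .mixed

        var body: some Scene {
            ImmersiveSpace {
                CompositorLayer(configuration: MyConfiguration()) { layerRenderer in
                    // my_engine_create and my_engine_render_loop stand in for your own engine.
                    let engine = my_engine_create(layerRenderer)
                    let renderThread = Thread {
                        my_engine_render_loop(engine)
                    }
                    renderThread.name = "Render Thread"
                    renderThread.start()
                }
            }
            // Scene modifier: offer both the mixed and full immersion styles.
            .immersionStyle(selection: $style, in: .mixed, .full)
        }
    }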

4:43 - Create a renderPassDescriptor

    import Metal

    let renderPassDescriptor = MTLRenderPassDescriptor()

    // Color attachment: clear before rendering and keep the result.
    renderPassDescriptor.colorAttachments[0].texture = drawable.colorTextures[0]
    renderPassDescriptor.colorAttachments[0].loadAction = .clear
    renderPassDescriptor.colorAttachments[0].storeAction = .store
    // All zeros for the mixed immersion style (use alpha 1.0 for full immersion).
    renderPassDescriptor.colorAttachments[0].clearColor = .init(red: 0.0, green: 0.0, blue: 0.0, alpha: 0.0)

    // Depth attachment: always cleared to zero.
    renderPassDescriptor.depthAttachment.texture = drawable.depthTextures[0]
    renderPassDescriptor.depthAttachment.loadAction = .clear
    renderPassDescriptor.depthAttachment.storeAction = .store
    renderPassDescriptor.depthAttachment.clearDepth = 0.0

9:08 - Set Upper Limb Visibility

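    import SwiftUI
    import CompositorServices

    @main
    struct MyApp: App {
        // Currently selected immersion style; the app starts in mixed.
        @State private var style: ImmersionStyle = .mixed

        var body: some Scene {
            ImmersiveSpace {
                CompositorLayer(configuration: MyConfiguration()) { layerRenderer in
                    // my_engine_create and my_engine_render_loop stand in for your own engine.
                    let engine = my_engine_create(layerRenderer)
                    let renderThread = Thread {
                        my_engine_render_loop(engine)
                    }
                    renderThread.name = "Render Thread"
                    renderThread.start()
                }
            }
            .immersionStyle(selection: $style, in: .mixed, .full)
            // Let the system show or fade the hands based on rendered depth.
            .upperLimbVisibility(.automatic)
        }
    }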

13:37 - Compose a projection view matrix

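    func renderLoop() {
        //...
        // Predict the device pose for the frame's presentation time and hand it to Compositor Services.
        let deviceAnchor = worldTracking.queryDeviceAnchor(atTimestamp: presentationTime)
        drawable.deviceAnchor = deviceAnchor

        for viewIndex in 0..<drawable.views.count {
            let view = drawable.views[viewIndex]
            // Fall back to identity if no device anchor is available for this frame.
            let originFromDevice = deviceAnchor?.originFromAnchorTransform ?? matrix_identity_float4x4
            let deviceFromView = view.transform
            // viewFromOrigin is the inverse of originFromDevice * deviceFromView.
            let viewMatrix = (originFromDevice * deviceFromView).inverse
            // Scene-aware projection in the convention Compositor Services expects.
            let projection = drawable.computeProjection(normalizedDeviceCoordinatesConvention: .rightUpBack,
                                                        viewIndex: viewIndex)
            let projectionViewMatrix = projection * viewMatrix
            //...
        }
    }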
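The composition performed in this snippet can also be written as a single equation. The symbols are notation introduced here for illustration: $P$ is the per-view projection matrix, and each $T_{a \leftarrow b}$ is the transform that maps space $b$ into space $a$.

$$
M_{\text{projectionView}}
= P \,\bigl(T_{\text{origin} \leftarrow \text{device}} \; T_{\text{device} \leftarrow \text{view}}\bigr)^{-1}
= P \; T_{\text{view} \leftarrow \text{device}} \; T_{\text{device} \leftarrow \text{origin}}
$$

The second form follows from $(AB)^{-1} = B^{-1}A^{-1}$ and matches the transcript's description of the view matrix as deviceFromOrigin followed by viewFromDevice.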

18:27 - Trackable anchor prediction

    func renderFrame() {
        //...
        // Get the trackable anchor and presentation times.
        let presentationTime = drawable.frameTiming.presentationTime
        let trackableAnchorTime = drawable.frameTiming.trackableAnchorTime

        // Convert the timestamps into units of seconds.
        let devicePredictionTime = LayerRenderer.Clock.Instant.epoch.duration(to: presentationTime).timeInterval
        let anchorPredictionTime = LayerRenderer.Clock.Instant.epoch.duration(to: trackableAnchorTime).timeInterval

        // Device pose is predicted for presentation time, hand pose for trackable anchor time.
        let deviceAnchor = worldTracking.queryDeviceAnchor(atTimestamp: devicePredictionTime)
        // handAnchors(at:) returns a (leftHand, rightHand) pair of optional anchors.
        let leftAnchor = handTracking.handAnchors(at: anchorPredictionTime).leftHand

        if let leftAnchor, leftAnchor.isTracked {
            //...
        }
    }
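The rule the session gives for choosing a prediction timestamp can be summarized in a small sketch: device poses are predicted for presentation time, and trackable anchors such as hands are predicted for trackable anchor time. The types below are hypothetical stand-ins written in plain Swift for this example; they are not ARKit or Compositor Services API, and the sample values are arbitrary.

```swift
// Hypothetical stand-ins for illustration; times are in seconds.
struct FrameTimes {
    var presentationTime: Double     // when the frame appears on the display
    var trackableAnchorTime: Double  // when the cameras see the surroundings
}

enum PoseSource {
    case device           // the headset pose
    case trackableAnchor  // for example, a hand
}

/// Picks the timestamp to use when querying a predicted pose.
func predictionTime(for source: PoseSource, in times: FrameTimes) -> Double {
    switch source {
    case .device:
        return times.presentationTime
    case .trackableAnchor:
        return times.trackableAnchorTime
    }
}

// Arbitrary sample values, chosen only to show the two timestamps differ.
let times = FrameTimes(presentationTime: 10.5, trackableAnchorTime: 10.25)
print(predictionTime(for: .device, in: times))
print(predictionTime(for: .trackableAnchor, in: times))
```

Content attached to a hand needs both queries, each with its own timestamp, while content fixed in the environment only needs the device pose.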
